In today’s digital world, we live in a strange contradiction. Everything is more connected than ever (people, platforms, and data), yet trust has become more fragmented.

You can spend years building your reputation on one platform, earning trust and proving credibility. But the moment you move to another platform, it almost resets to zero.

It feels like your history does not exist.

This is not just a technical issue. It is a human problem about identity, memory, and continuity.

Today, identity exists, but it is scattered. Trust exists, but it is isolated.

Each platform builds its own system, sets its own rules, and evaluates people differently. As a result, one person ends up with multiple identities and reputations that are not connected.

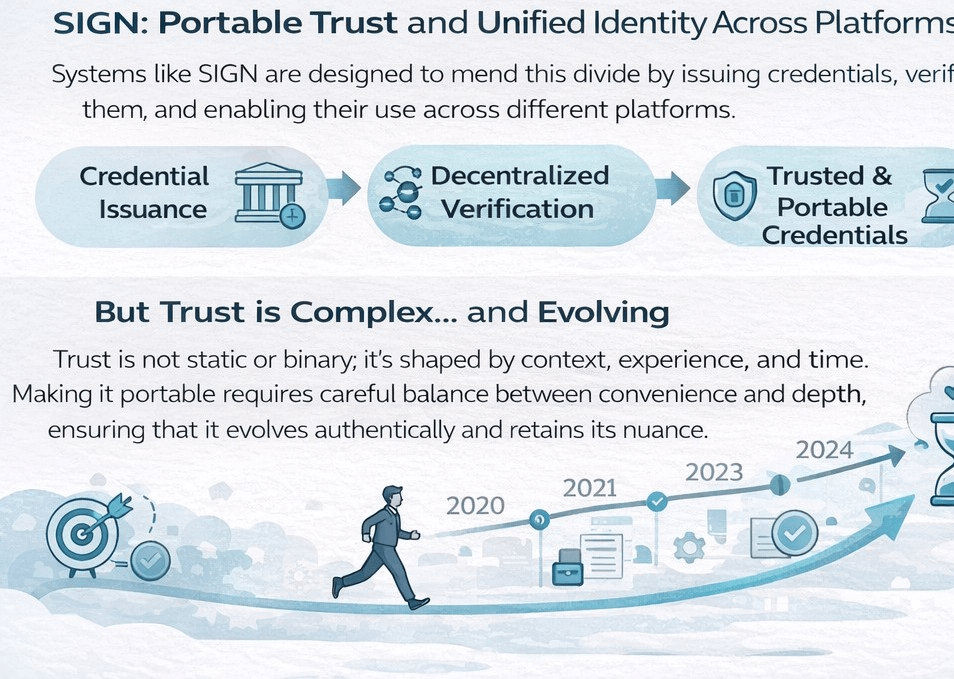

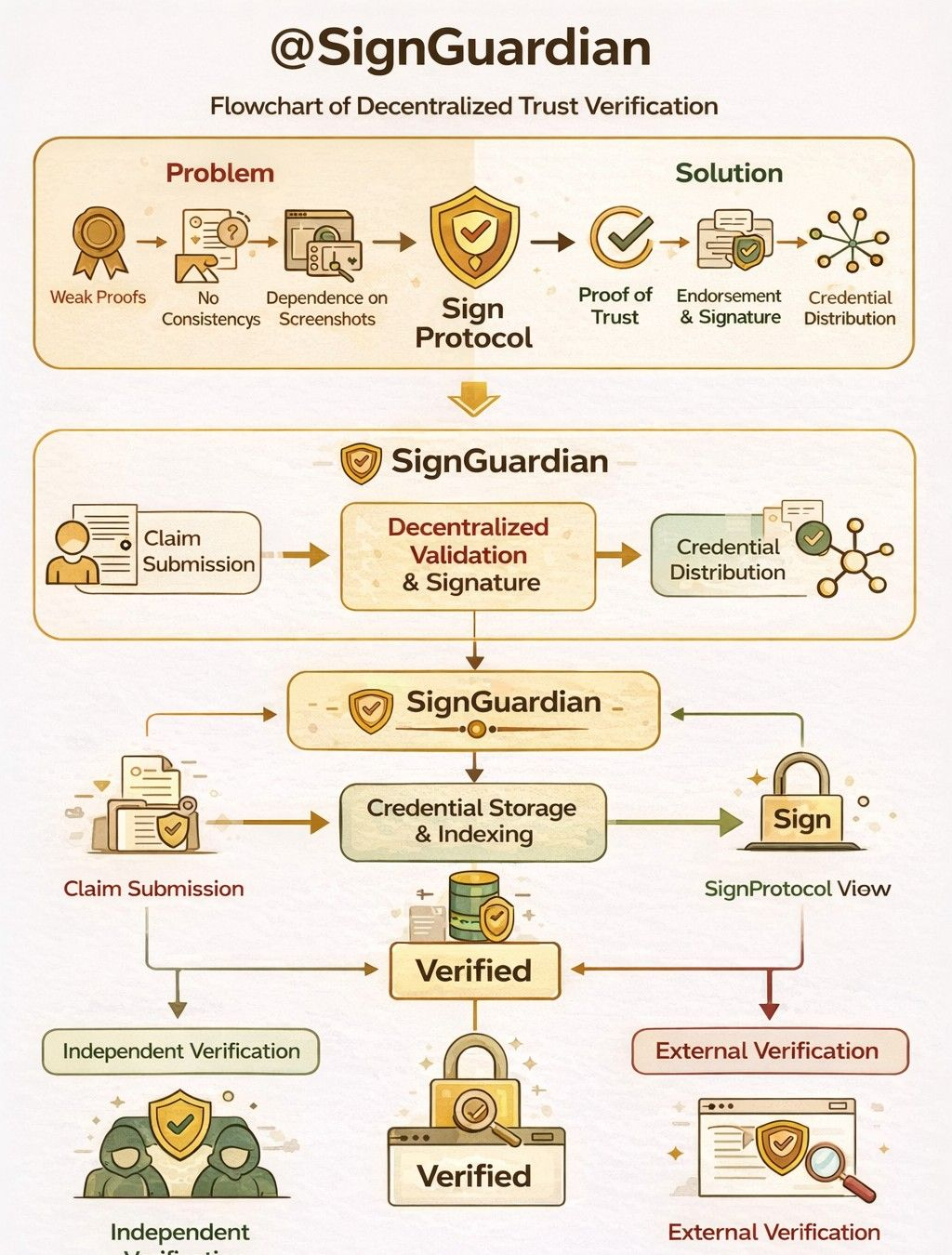

This is the gap that systems like SIGN aim to close. The idea seems simple: issue credentials, verify them, and make them usable across platforms.
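To make the issue-and-verify idea concrete, here is a minimal sketch in Python. It is not SIGN's actual API: the function names, the credential layout, and the issuer key are all hypothetical, and a shared-secret HMAC stands in for the public-key signatures a real credential system would more likely use.

```python
import hashlib
import hmac
import json


def issue_credential(issuer_key: bytes, subject: str, claim: str) -> dict:
    """Bundle a claim with a tag that only a holder of issuer_key can produce."""
    payload = {"subject": subject, "claim": claim}
    # Serialize deterministically so issuer and verifier hash the same bytes.
    message = json.dumps(payload, sort_keys=True).encode("utf-8")
    tag = hmac.new(issuer_key, message, hashlib.sha256).hexdigest()
    return {"payload": payload, "tag": tag}


def verify_credential(issuer_key: bytes, credential: dict) -> bool:
    """Recompute the tag and compare it in constant time."""
    message = json.dumps(credential["payload"], sort_keys=True).encode("utf-8")
    expected = hmac.new(issuer_key, message, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, credential["tag"])


# Hypothetical issuer key and claim, for illustration only.
key = b"hypothetical-issuer-secret"
credential = issue_credential(key, "alice", "completed 120 freelance jobs")

# Any platform holding the key can check the credential.
print(verify_credential(key, credential))

# Changing the claim without re-issuing invalidates the tag.
forged = {
    "payload": {**credential["payload"], "claim": "completed 9000 freelance jobs"},
    "tag": credential["tag"],
}
print(verify_credential(key, forged))
```

The check only confirms that the issuer made the claim and that nobody altered it afterward. It says nothing about whether the issuer is worth trusting.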

But in reality, the challenge is much deeper, because what is being transferred is not just data, but trust.

And trust is not simple.

It is not something that either exists or does not. Trust evolves over time. It depends on experience, consistency, and perception. Turning something this complex into badges or scores can remove its true meaning.

This raises an important question:

Is convenience worth the loss of authenticity?

Another key question is: who defines trust?

If anyone can issue credentials, the system may fill up with claims that nobody has reason to believe.

When everything is easy to verify, real credibility can lose its value. Separating real trust from noise becomes difficult.

Systems like SIGN must balance openness and control. They must allow participation while protecting the quality of trust.

But as participation grows, managing noise becomes harder.

Time adds another layer.

Trust is not static: it grows, weakens, and changes. A system that only captures single moments cannot represent true trust.

Real trust is about long-term consistency.

Incentives also play a major role. Tokens can encourage participation and growth. But they can also change behavior.

When rewards are introduced, people start optimizing for rewards instead of meaning. Actions become performative rather than authentic.

People begin asking, “What is rewarded?” instead of “What is meaningful?”

This can slowly distort the system.

There is also a psychological effect. When people know they are being measured, their behavior changes.

Some become more responsible, while others focus more on appearance than reality.

We already see this on social media, where likes and followers influence behavior. Authenticity often becomes secondary.

SIGN must work within this reality. Human behavior does not change easily.

Usability is also critical.

Most people adopt systems not because they believe in them, but because they are easy to use. If a system is complex, it will struggle to grow.

To succeed, SIGN must feel natural and seamless.

Ownership is another key issue. Today, platforms control identity and reputation. If a platform changes or shuts down, users can lose everything.

SIGN attempts to change this by giving control back to users through decentralization.

But decentralization comes with responsibility. Not everyone is ready to manage their own data and security.

Many people still prefer convenience.

This shift from centralized to decentralized systems is complex and uncertain. It will require experimentation and time.

There is also the question of resilience.

As the system grows, can it maintain integrity? Or will it be manipulated?

Systems based on metrics are often vulnerable to exploitation. If incentives are misaligned, people may game the system.

Despite these challenges, the potential is huge.

A system that enables portable and verifiable trust could transform digital interaction. It could reduce friction and create new opportunities.

In the end, this is not just a technical challenge; it is a philosophical one.

What is trust in the digital world?

How should it be measured?

Can it be preserved without losing its meaning?

These questions do not have simple answers.

If SIGN can balance usability, authenticity, and incentives, it could become a fundamental part of digital infrastructure.

But if it reduces trust to simple scores and badges, it risks losing depth.

The biggest question remains:

Can trust truly be digitized without losing its complexity?

The answer will emerge over time, through real use, misuse, and continuous improvement.