I’ll admit my first reaction to real-world asset tokenization campaigns is hesitation. Not because the idea lacks merit, but because I have seen how easily complex coordination problems get reduced to neat diagrams and confident promises. Turning land titles, benefits, or cultural assets into digital tokens sounds efficient on paper. In practice, it runs into the messy reality of institutions, people, and trust.

This particular campaign around tokenized distribution and asset registries held my attention for a different reason. It does not pretend those challenges disappear. Instead, it tries to work through them. The core idea behind something like TokenTable is not only to digitize assets but to organize how different actors (governments, local agencies, communities, and even automated systems) coordinate around them without relying on a single identity system.

That detail shapes everything.

Most systems I have come across try to solve trust by centralizing it. One database, one ID, one authority. It sounds clean, but it is fragile. If that system fails or is misused, everything built on top of it inherits the same weakness. What this campaign attempts instead is a layered approach to verification. Eligibility or ownership is not proven once. It is built over time. It is cross-checked. It depends on context.

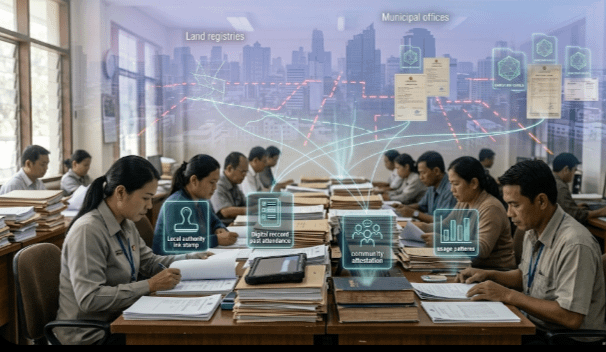

Think about social benefits. Distributing welfare, pensions, or emergency aid sounds simple until you look at how eligibility is actually decided. Records are often incomplete, outdated, or inconsistent across departments. Instead of forcing everyone into a single identity pipeline, this system allows multiple forms of proof to contribute. Local authority confirmations, past participation in programs, community-level attestations, and even patterns like consistent engagement with services all become part of the picture.

It is not fast. It is not simple. But it feels closer to how trust works in reality. People and institutions rarely rely on one source. They rely on a mix of signals that build confidence over time.
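
To make that "mix of signals" idea concrete, here is a minimal Python sketch of how several independent proofs might add up to one confidence score. Everything in it is my own assumption for illustration: the `Proof` type, the strength values, the combination rule, and the 0.8 threshold are hypothetical and are not taken from TokenTable or any real deployment.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Proof:
    source: str      # hypothetical label, e.g. "local_authority" or "community"
    strength: float  # assumed confidence in [0, 1] assigned to this proof


def combined_confidence(proofs: Iterable[Proof]) -> float:
    """Combine proofs from different sources into one score in [0, 1]."""
    # Keep only the strongest proof per source, so a single source
    # cannot inflate the score by repeating itself.
    best = {}
    for p in proofs:
        best[p.source] = max(best.get(p.source, 0.0), p.strength)

    # Treat sources as independent: the remaining doubt shrinks with
    # every additional source that vouches for the person.
    doubt = 1.0
    for strength in best.values():
        doubt *= 1.0 - strength
    return 1.0 - doubt


def is_eligible(proofs: Iterable[Proof], threshold: float = 0.8) -> bool:
    # The threshold is an illustrative policy choice, not a known value.
    return combined_confidence(proofs) >= threshold


proofs = [
    Proof("local_authority", 0.5),
    Proof("community", 0.5),
    Proof("program_history", 0.5),
]
print(combined_confidence(proofs))  # 0.875
print(is_eligible(proofs))          # True
```

No single proof clears the bar here. Three moderate ones from different sources do, while the same source repeated three times would not.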

The same pattern appears in asset tokenization. Real estate is a good example. Digitizing ownership is less about putting deeds on a blockchain and more about reconciling years of fragmented records. Land registries, tax authorities, and municipal offices do not always agree. A tokenized system does not erase those disagreements. What it can do is make them visible and track how they are resolved.

In one pilot I followed, ownership was not treated as a single fixed truth. It was represented as a collection of claims. Each claim had its own backing from different sources. Transfers did not rely on one approval. They required enough aligned confirmations to move forward. It felt less like a smooth digital transaction and more like a structured process of agreement. Slower, but more transparent.
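
I do not know how that pilot was implemented, so what follows is only a rough Python sketch of the shape it suggests: ownership stored as competing claims, each backed by named sources, and a transfer that goes through only when enough of the seller's own backers confirm it. The `AssetRecord` class, the source names, and the quorum of two are all assumptions of mine.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class AssetRecord:
    asset_id: str
    # claimant -> set of sources currently backing that claim
    claims: Dict[str, Set[str]] = field(default_factory=dict)

    def add_backing(self, claimant: str, source: str) -> None:
        self.claims.setdefault(claimant, set()).add(source)

    def disputed(self) -> bool:
        # More than one claimant means the records disagree. The
        # disagreement is kept visible rather than overwritten.
        return len(self.claims) > 1


def can_transfer(record: AssetRecord, seller: str,
                 confirmations: Set[str], quorum: int = 2) -> bool:
    """Allow a transfer only if enough of the seller's backers confirm it."""
    backing = record.claims.get(seller, set())
    aligned = confirmations & backing  # confirmations from unrelated sources do not count
    return len(aligned) >= quorum


parcel = AssetRecord("parcel-17")
parcel.add_backing("alice", "land_registry")
parcel.add_backing("alice", "tax_authority")
parcel.add_backing("bob", "municipal_office")

print(parcel.disputed())                                                # True
print(can_transfer(parcel, "alice", {"land_registry"}))                 # False
print(can_transfer(parcel, "alice", {"land_registry", "tax_authority"}))  # True
```

The dispute between the two claimants stays on the record even after a transfer is allowed, which is the transparency the pilot seemed to be after.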

The distribution side brings its own challenges. Agricultural subsidies, education stipends, and healthcare benefits often struggle with leakage and misallocation. Tokenized systems can improve targeting, but only if the underlying eligibility logic is sound. In this campaign, automated agents are used carefully. They do not replace decision making. They support it. They scan for inconsistencies, track patterns, and flag unusual behavior for human review.

That balance matters. Full automation in sensitive systems often creates new risks. Here the goal seems to be assistance rather than control.
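
As a sketch of what "assistance rather than control" could look like in code, the Python snippet below checks benefit records for two kinds of inconsistency and returns a review queue for a human. It never approves, rejects, or edits anything. The record fields and both rules are hypothetical examples that I made up for illustration.

```python
from typing import Dict, List, Tuple


def flag_for_review(records: List[Dict]) -> List[Tuple[str, List[str]]]:
    """Return (record id, reasons) pairs for a human reviewer.

    The function only reads the records. Deciding what to do with a
    flagged case is left entirely to people.
    """
    flagged = []
    for rec in records:
        reasons = []

        # Hypothetical rule 1: departments report different incomes.
        if len(set(rec["reported_income"].values())) > 1:
            reasons.append("income differs across departments")

        # Hypothetical rule 2: more than one payout in the same month.
        if rec["payments_this_month"] > 1:
            reasons.append("multiple payments in one month")

        if reasons:
            flagged.append((rec["id"], reasons))
    return flagged


records = [
    {"id": "A-1", "reported_income": {"tax": 1200, "welfare": 1200},
     "payments_this_month": 1},
    {"id": "A-2", "reported_income": {"tax": 900, "welfare": 400},
     "payments_this_month": 2},
]

for record_id, reasons in flag_for_review(records):
    print(record_id, reasons)
```

Only the second record is flagged, with both reasons attached, so the reviewer sees why the case was surfaced and not just that it was.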

Cross-border assistance is another area where expectations are usually too high. Many projects talk about seamless global coordination but avoid the reality of incompatible systems and regulations. This campaign takes a narrower path. It focuses on interoperability at specific points. If ownership or eligibility can be verified through shared standards or compatible formats, then cooperation becomes possible without full system alignment.

It is a modest goal but a practical one.

Financial inclusion programs add another layer. Creating accounts and distributing funds to unbanked populations sounds straightforward, but it requires careful onboarding and verification. Without a single identity system, the process depends on combining different proofs over time. Community validation, local records, and usage patterns all play a role. It is slower at the start, but it reduces the risk of exclusion or fraud that comes from rigid systems.

What stands out to me is that this campaign does not assume uniform adoption. Some regions will move quickly. Others will resist. Some data sources will be reliable. Others will remain messy. The system is built to operate within that uneven environment rather than replace it.

There are still serious questions. Governance is one of them. Who decides which proofs are strong enough to count? How are conflicts handled when different sources disagree? What prevents the system from gradually centralizing as certain institutions gain more influence over time?

These are not minor issues. They sit at the center of whether the system can remain fair and functional.

Even so, there is something quietly encouraging in this approach. It does not treat tokenization as a shortcut to trust. It treats it as a way to organize trust more clearly. It accepts that verification is ongoing. It accepts that coordination takes effort. It accepts that systems built for real people will always carry some level of imperfection.

I am not fully convinced yet. Real-world deployments tend to reveal problems that early pilots cannot show. But this effort feels more grounded than most. It does not promise to fix everything. It tries to make existing processes more visible, more traceable, and slightly more reliable.

That may not sound dramatic, but it is probably closer to how meaningful change actually happens.

#SignDigitalSovereignInfra $SIGN