I think about something as simple as receiving a package. When a courier arrives at my door, I don’t question the entire logistics network behind it—I trust that the address was recorded correctly, that the sender is legitimate, that the delivery system has tracked the parcel honestly, and that the person handing it to me is part of a chain that can be held accountable. None of this works because of a single piece of technology. It works because multiple layers—identity, verification, incentives, and reputation—quietly coordinate in the background. And when even one of those layers breaks, the whole experience becomes unreliable.

That’s the lens I find useful when thinking about S.I.G.N as a proposed sovereign digital infrastructure for money, identity, and capital in a verifiable world. On the surface, the idea feels intuitive: if we can standardize how credentials are issued and verified, then we can build financial and economic systems on top of that shared truth. Instead of fragmented databases and slow manual checks, there would be a unified layer where identity and proof become portable, composable, and machine-readable.

But I’ve learned to be cautious with systems that sound clean in theory. The real question isn’t whether such an infrastructure can exist conceptually—it’s whether it can operate reliably once exposed to real-world behavior.

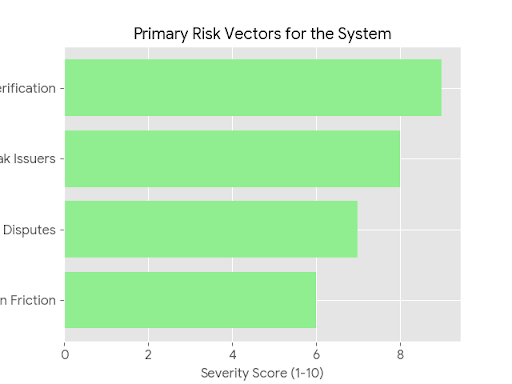

The first pressure point is always the source of truth. In any credential system, the integrity of the output depends entirely on the integrity of the input. If a university issues a degree, or a platform verifies user activity, the system downstream is only as trustworthy as those issuers. S.I.G.N can standardize how credentials are recorded and transferred, but it cannot fully control the quality of what gets issued in the first place. That introduces an unavoidable dependency on institutions, and institutions are uneven—some are rigorous, others are not.
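To make that distinction concrete, here is a minimal sketch of why a valid signature is not the same thing as a trustworthy credential. Everything in it is an assumption for illustration: the issuer names, keys, and rigor scores are invented, HMAC from the Python standard library stands in for a real digital signature scheme, and none of it reflects how S.I.G.N itself is implemented.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass

# Hypothetical issuer registry. Names, keys, and rigor scores are made up.
ISSUER_KEYS = {"rigorous-university": b"key-a", "diploma-mill": b"key-b"}
ISSUER_RIGOR = {"rigorous-university": 0.95, "diploma-mill": 0.10}


@dataclass(frozen=True)
class Credential:
    issuer: str
    subject: str
    claim: str
    signature: str


def _payload(issuer: str, subject: str, claim: str) -> bytes:
    # Canonical serialization so issuing and verifying hash the same bytes.
    data = {"issuer": issuer, "subject": subject, "claim": claim}
    return json.dumps(data, sort_keys=True).encode()


def issue(issuer: str, subject: str, claim: str) -> Credential:
    # HMAC is a stand-in for a real signature; it only shows the data flow.
    sig = hmac.new(ISSUER_KEYS[issuer], _payload(issuer, subject, claim),
                   hashlib.sha256).hexdigest()
    return Credential(issuer, subject, claim, sig)


def signature_is_valid(cred: Credential) -> bool:
    # Answers only: "did this issuer sign exactly this content?"
    key = ISSUER_KEYS.get(cred.issuer)
    if key is None:
        return False
    expected = hmac.new(key, _payload(cred.issuer, cred.subject, cred.claim),
                        hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, cred.signature)


def accept(cred: Credential, min_rigor: float = 0.8) -> bool:
    # Policy layer: a valid signature from a weak issuer is still rejected.
    rigor = ISSUER_RIGOR.get(cred.issuer, 0.0)
    return signature_is_valid(cred) and rigor >= min_rigor


good = issue("rigorous-university", "alice", "BSc")
weak = issue("diploma-mill", "bob", "BSc")
```

Both `good` and `weak` pass `signature_is_valid`, but only `good` passes `accept`. The rigor scores have to come from somewhere outside the protocol, which is exactly the institutional dependency described above.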

This reminds me less of software and more of supply chains. You can build the most efficient logistics network in the world, but if your suppliers cut corners, your final product still suffers. Standardization helps with coordination, but it doesn’t eliminate variability at the edges.

Then there’s the question of incentives. The moment credentials are tied to financial value—whether through token distribution, access, or capital allocation—the system becomes a target. Participants will optimize for whatever the system rewards, not necessarily for what it intends to measure. If credentials unlock value, people will try to acquire them in the cheapest possible way. That might mean gaming verification processes, exploiting weak issuers, or coordinating behavior that looks legitimate on the surface but isn’t meaningful underneath.

This isn’t a flaw unique to S.I.G.N; it’s a property of any system that links identity to economic outcomes. Financial incentives don’t just encourage participation—they also expose weaknesses. A system like this doesn’t need to be perfect, but it does need to be resilient under pressure, especially when participants actively test its boundaries.

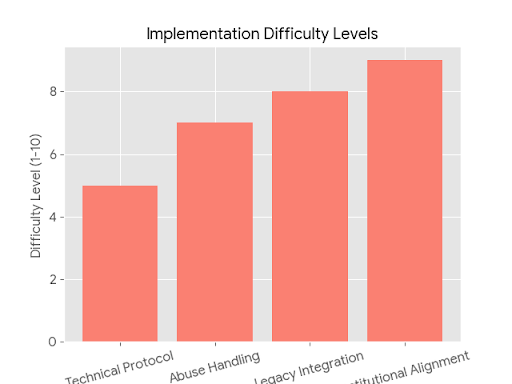

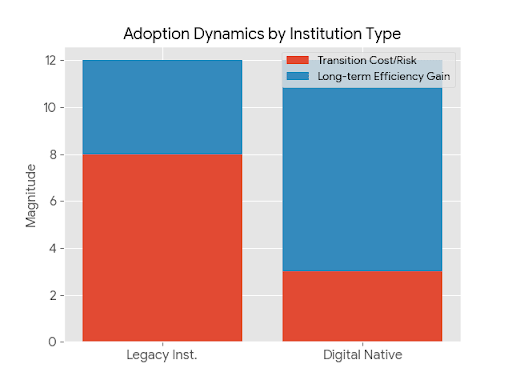

Another layer to consider is operational reality. It’s one thing to design a protocol that works in controlled environments; it’s another to integrate it across institutions that have their own legacy systems, policies, and constraints. Real-world adoption doesn’t happen because a system is elegant—it happens because it reduces friction without introducing new risks. If using S.I.G.N requires institutions to change how they operate, the cost of transition becomes a major barrier.

In infrastructure terms, this is similar to trying to upgrade a national rail network while trains are still running. You can’t just replace everything at once. Compatibility, gradual integration, and reliability during transition matter more than theoretical improvements.

There’s also the issue of verification latency and dispute resolution. In a verifiable system, what happens when credentials conflict, or when they’re challenged? Who arbitrates? How quickly can errors be corrected? These questions don’t always show up in design documents, but they define whether a system can function at scale. Trust isn’t just about correctness—it’s about how systems handle being wrong.
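One way to picture these questions is as a small state machine around each credential. The sketch below is purely hypothetical: the status names, the `DisputeLog` class, and the rule that a challenged credential is unusable until someone resolves it are my own assumptions, not anything S.I.G.N has specified. Who gets to call `resolve` is deliberately left open, because that is the arbitration question itself.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    ACTIVE = "active"
    DISPUTED = "disputed"
    REVOKED = "revoked"


@dataclass
class Record:
    credential_id: str
    status: Status = Status.ACTIVE
    history: list = field(default_factory=list)  # (timestamp, event) pairs


class DisputeLog:
    """Hypothetical tracker for challenged credentials."""

    def __init__(self) -> None:
        self.records: dict[str, Record] = {}

    def register(self, credential_id: str, now: float) -> None:
        rec = Record(credential_id)
        rec.history.append((now, "issued"))
        self.records[credential_id] = rec

    def challenge(self, credential_id: str, now: float) -> None:
        # Only an active credential can move into the disputed state.
        rec = self.records[credential_id]
        if rec.status is Status.ACTIVE:
            rec.status = Status.DISPUTED
            rec.history.append((now, "challenged"))

    def resolve(self, credential_id: str, upheld: bool, now: float) -> float:
        # Returns how long the dispute stayed open (the correction latency).
        rec = self.records[credential_id]
        if rec.status is not Status.DISPUTED:
            raise ValueError("credential is not under dispute")
        opened = next(t for t, e in reversed(rec.history) if e == "challenged")
        rec.status = Status.REVOKED if upheld else Status.ACTIVE
        rec.history.append((now, "revoked" if upheld else "reinstated"))
        return now - opened

    def is_usable(self, credential_id: str) -> bool:
        # Conservative assumption: disputed or unknown credentials are unusable.
        rec = self.records.get(credential_id)
        return rec is not None and rec.status is Status.ACTIVE


log = DisputeLog()
log.register("c1", now=0.0)
log.challenge("c1", now=10.0)
latency = log.resolve("c1", upheld=True, now=70.0)
```

Treating a disputed credential as unusable is the cautious choice, but it also means anyone who can file a challenge can freeze someone else's credential for as long as resolution takes, so the latency returned by `resolve` becomes an attack surface of its own.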

What makes S.I.G.N interesting to me is not the ambition—it’s the attempt to formalize something that has always been informal and fragmented. Identity, reputation, and value have always been connected, but loosely. If this system can tighten that connection without becoming brittle, it could reduce inefficiencies that exist today.

At the same time, I don’t think this is purely a technical problem. It’s an institutional one. For S.I.G.N to work as intended, it needs credible issuers, aligned incentives, and mechanisms to handle abuse. Technology can support these things, but it can’t replace them.

So when I step back, I don’t see S.I.G.N as a finished solution. I see it more as a coordination layer that could become useful if enough reliable participants adopt it and if it proves itself under real conditions. The real test won’t be in whitepapers or early demos—it will be in moments of stress, when bad actors try to exploit it, when institutions disagree, and when economic incentives start pulling the system in different directions.

My own view is cautiously neutral. I think the problem it’s trying to solve is real, and the direction makes sense at a high level. But I also think the hardest parts—trust at the source, incentive alignment, and operational integration—are not things that can be fully engineered away. If S.I.G.N succeeds, it will be because it manages these realities better than existing systems, not because it avoids them.

Because in the end, infrastructure doesn’t prove itself when everything goes right. It proves itself the first time something goes wrong and it still holds together.
