I once sat staring at my screen, watching a simple airdrop claim turn into a frustrating loop. The wallet was connected, the transaction looked ready, but the dApp kept asking for fresh proof of eligibility: another signature, another verification step, another few seconds of loading that stretched into minutes as the network felt the weight. It wasn’t a fancy DeFi exploit or a high-stakes trade. Just an everyday moment where the infrastructure underneath revealed its cracks. I remember thinking: we’ve gotten really good at moving value across chains, yet proving something basic about ourselves still feels like starting from zero every single time.

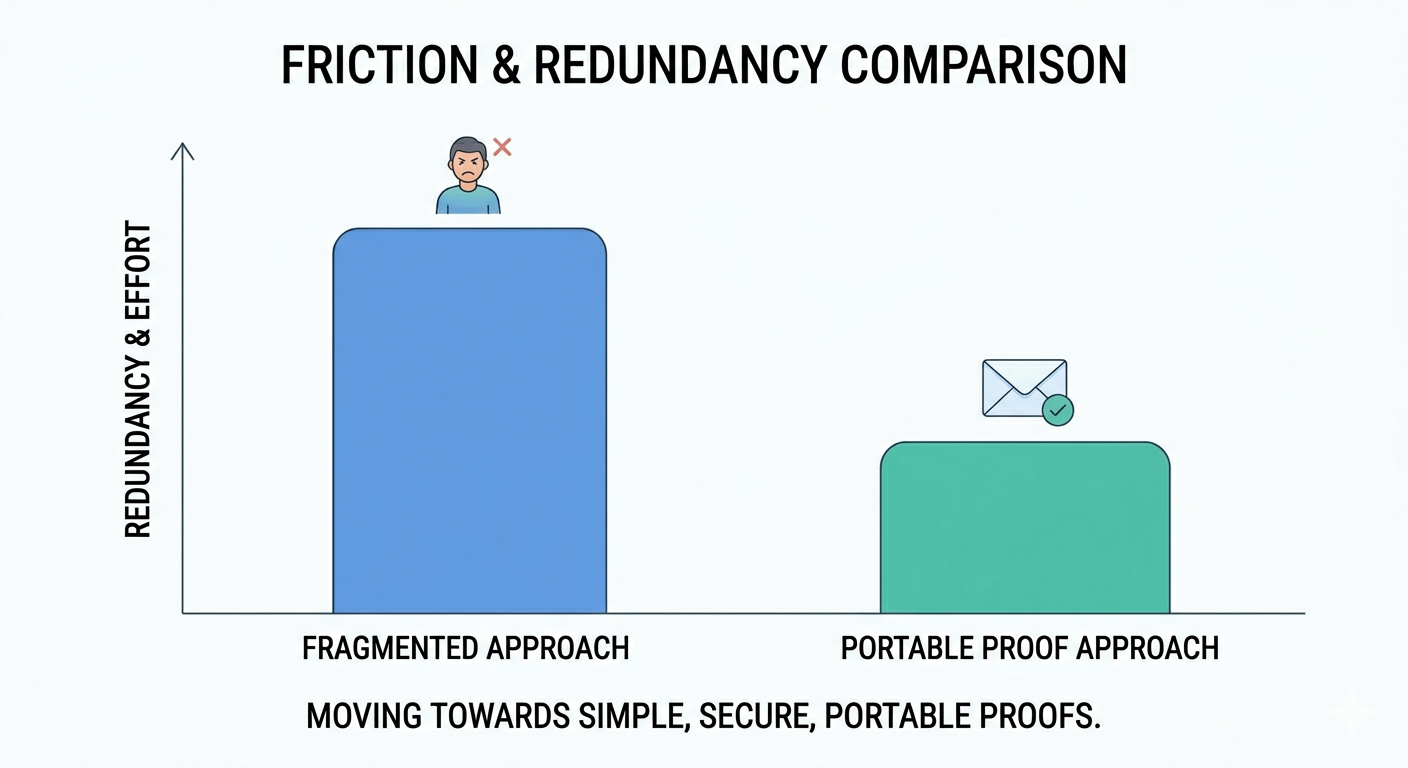

That experience stuck with me because it highlights a quiet but persistent problem in crypto. We have fast bridges, efficient execution layers, and wallets that handle assets smoothly. But when it comes to identity, eligibility, or any verifiable claim, whether it’s “I completed this task,” “I hold this credential,” or “I meet these conditions,” everything fragments. Users re-upload documents, repeat KYC flows, or expose more data than needed just to satisfy one dApp or protocol. Networks get clogged not only by transaction volume but by this constant re-verification overhead. Privacy erodes quietly, control slips away, and what should feel seamless starts to feel brittle as usage grows. In my experience watching these systems over time, the real friction often hides in these coordination and proof layers rather than in raw speed.

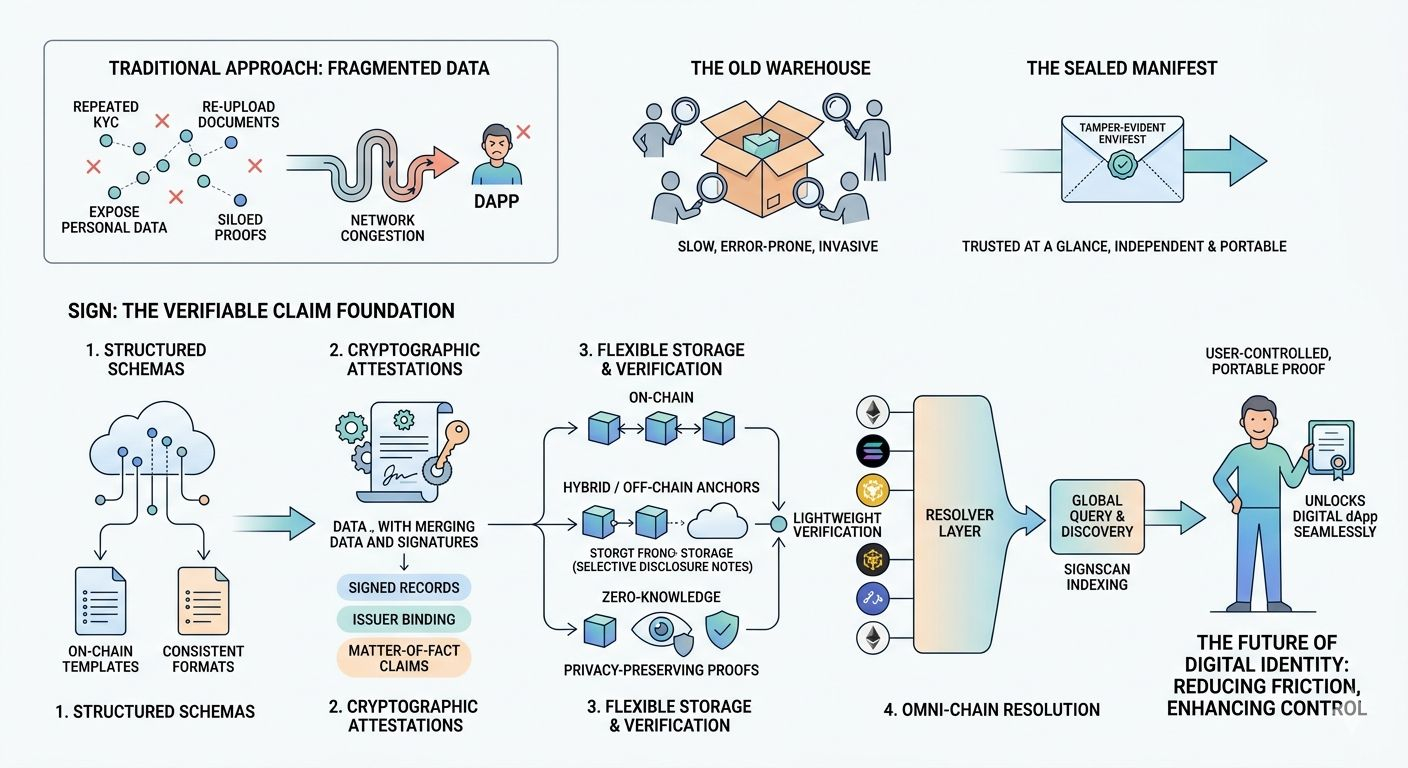

It makes me think of those old warehouse operations before modern supply chains took over. Every incoming shipment had its contents fully unpacked and inspected at every checkpoint: slow, error-prone, and invasive. The breakthrough wasn’t hiring more workers or buying faster forklifts. It was introducing standardized, sealed manifests: tamper-evident documents that carried just enough structured information to be trusted at a glance, without reopening the entire box each time. The system became more resilient because verification turned independent and portable.

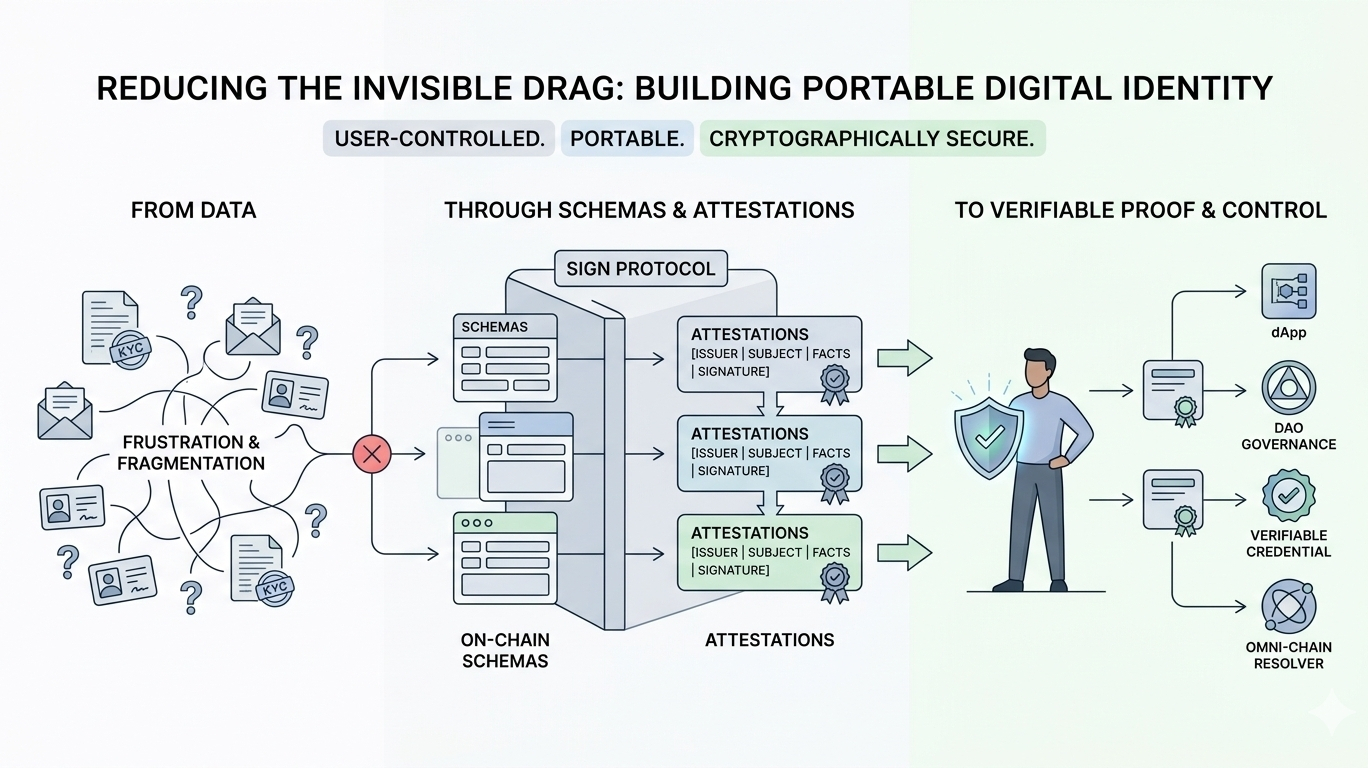

When I look at how Sign approaches this space, the design feels grounded in that same practical logic. It doesn’t chase flashy features but seems to focus on building a cleaner foundation for verifiable claims that users can actually own and carry forward. Instead of scattered data points that need constant re-proving, the protocol centers on structured attestations: cryptographically signed statements that stand on their own once issued.

What catches my attention from a system perspective is the deliberate separation of concerns. It starts with schemas: on-chain templates that define consistent formats for different types of claims, so everyone works from the same readable structure without reinventing the wheel for each use case. Then come the attestations themselves: signed records that bind the issuer, the subject, the facts, and necessary metadata in a verifiable way. The verification flow stays lightweight: a checker doesn’t have to loop back to the original issuer or re-fetch everything. It validates the signature and schema compliance, which can happen across different environments.
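To make that flow concrete for myself, I find it helps to sketch it in a few lines of Python. This is only a toy model of the idea, not Sign's actual API: the `Schema` and `Attestation` classes, the field names, and the key are all hypothetical. A real protocol would use public-key signatures so that anyone can verify without holding a secret; here HMAC-SHA256 from the standard library stands in for the signature so the example runs without third-party packages.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Schema:
    schema_id: str
    fields: dict  # field name -> expected Python type (hypothetical layout)


@dataclass(frozen=True)
class Attestation:
    schema_id: str
    issuer: str
    subject: str
    data: dict
    signature: str = ""


def _payload(att: Attestation) -> bytes:
    # Canonical JSON so the issuer and the checker sign/verify identical bytes.
    body = {
        "schema_id": att.schema_id,
        "issuer": att.issuer,
        "subject": att.subject,
        "data": att.data,
    }
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def issue(schema: Schema, issuer: str, subject: str, data: dict, key: bytes) -> Attestation:
    # HMAC is a stand-in for a real public-key signature (an assumption of this sketch).
    draft = Attestation(schema.schema_id, issuer, subject, data)
    sig = hmac.new(key, _payload(draft), hashlib.sha256).hexdigest()
    return Attestation(schema.schema_id, issuer, subject, data, sig)


def conforms(schema: Schema, att: Attestation) -> bool:
    # Schema compliance: same schema id, exactly the declared fields, right types.
    if att.schema_id != schema.schema_id:
        return False
    if set(att.data) != set(schema.fields):
        return False
    return all(isinstance(att.data[name], typ) for name, typ in schema.fields.items())


def verify(schema: Schema, att: Attestation, key: bytes) -> bool:
    # The checker never contacts the issuer: it only recomputes and compares.
    expected = hmac.new(key, _payload(att), hashlib.sha256).hexdigest()
    return conforms(schema, att) and hmac.compare_digest(expected, att.signature)


schema = Schema("task-completed-v1", {"task": str, "score": int})
key = b"demo-issuer-key"  # hypothetical key, for illustration only
att = issue(schema, "issuer-a", "user-1", {"task": "quiz", "score": 9}, key)
print(verify(schema, att, key))
```

The point of the sketch is the shape of the check: schema compliance and signature validity are both local computations, so changing a single field in `data` after issuance makes `verify` fail without anyone calling the issuer.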

I’ve spent time observing how resilient infrastructure handles real load, and a few elements here feel thoughtfully considered. Storage options provide flexibility: fully on-chain when maximum transparency matters, hybrid setups with on-chain anchors pointing to off-chain data for scale or sensitivity, and even zero-knowledge approaches for selective disclosure. That kind of tiering acts as a pressure-relief valve, helping keep the core ledger from getting overwhelmed while keeping proofs intact. The omni-chain aspect adds another layer of practicality: attestations can originate on Ethereum, Solana, BNB Chain, or others, yet a resolver layer lets queries resolve smoothly without manual bridging or chain-specific gymnastics. Parallel issuance doesn’t force everything into a single sequential bottleneck, and ordering is maintained through cryptography where it counts rather than through global consensus on every detail.
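The hybrid option is the one I find easiest to picture in code. A rough sketch, assuming the on-chain anchor is simply a hash of the off-chain record plus a pointer to where it lives (the anchor layout, the field names, and the `offchain://` location are my own illustration, not Sign's format):

```python
import hashlib
import json


def canonical_bytes(record: dict) -> bytes:
    # Stable serialization so the same record always hashes to the same digest.
    return json.dumps(record, sort_keys=True, separators=(",", ":")).encode()


def make_anchor(record: dict, location: str) -> dict:
    # Only this small anchor would go on-chain; the full record stays off-chain.
    digest = hashlib.sha256(canonical_bytes(record)).hexdigest()
    return {"location": location, "digest": digest}


def matches_anchor(record: dict, anchor: dict) -> bool:
    # Anyone who fetches the off-chain record can check it against the anchor.
    return hashlib.sha256(canonical_bytes(record)).hexdigest() == anchor["digest"]


record = {"subject": "user-1", "task": "quiz", "score": 9}
anchor = make_anchor(record, "offchain://example/record-1")  # hypothetical location
print(matches_anchor(record, anchor))
```

The ledger carries a fixed-size digest no matter how large or sensitive the underlying record is, and any edit to the off-chain copy shows up as a mismatch.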

SignScan, the indexing and querying service, acts like a quiet worker layer that aggregates information across chains and storage options, making discovery and verification more efficient without forcing every participant to run their own full node or custom scraper. In practice, these choices point toward a system that aims to stay operable even when demand spikes or when both privacy and compliance need to coexist.

After thinking through these kinds of designs for a while, I’ve come to believe that strong infrastructure rarely announces itself with big claims. It simply reduces the invisible drag that users feel day to day. A reliable system isn’t the one promising the absolute highest speed in perfect conditions, but the one that keeps functioning quietly and consistently when networks get busy, requirements get complex, and real human needs show up. Turning repeated verification theater into portable, user-controlled proof feels like one of those under-the-hood shifts that could let the broader ecosystem breathe easier.