DADABOTS is a musical duo whose creations blend art, code, and machine learning. They are known for merging underground music genres such as death metal, drum and bass, and hardcore punk with neural synthesis, developing tools that generate live music in real time, instantly creating new musical genres and redefining the meaning of performance.

Their work spans viral live streams, academic research, and global performances, all rooted in their belief that human-machine collaboration is key to advancing creativity.

This interview was conducted at Hotel Saint George Hall during the Art Blocks Marfa Weekend. DADABOTS shared how generative tools are reshaping music, why they embrace lo-fi sounds and unpredictability, and what continually brings them back to the Art Blocks community.

Note: For brevity and clarity, this interview has been edited.

OpenSea:

First, please introduce yourself.

DADABOTS:

Hello everyone, I'm from DADABOTS, a duo I formed with another music hacker. We come from the world of music hackathons, and our musical roots trace back to metal, punk, and electronic music. We are very interested in extreme music and hope to push it into new realms.

We started trying to make music with code, which led us to machine learning, deep learning, and generative art. It has truly been a wonderful journey.

OpenSea:

You describe DADABOTS as a combination of a band, a hackathon team, and a research lab. What is your creative process like?

DADABOTS:

Our creative process starts with the crazy ideas in Zach's and my heads that make us laugh out loud. Most of our best-known works started as jokes. For example, we ran a 24/7 generative death metal live stream on YouTube, which ended up being the longest live stream ever. It began as a whimsical thought: what would happen if we took the band members out of a metal band? The idea itself sounded so 'metal' that everyone got the joke. On a macro level, that is our creative process.

On a micro level, we do a lot of programming and research. We are also a research lab, publishing papers as independent researchers. Our first paper, 'Generating Black Metal and Math Rock,' was published at the NeurIPS conference. After that, we joined a formal research lab called Harmonai, which is part of Stability AI. Now, our full-time job is developing neural synthesis technology.

Neural synthesis has a long lineage, running from classic synthesizers through samplers. Today, when you push the statistical properties of a synthesizer to the limit and train it on existing music data, you get the most flexible synthesizer there is. We have developed and open-sourced these synthesizers, published the code on GitHub, and released the models on Hugging Face. We hope to see the whole world using them and developing them into a new type of instrument.

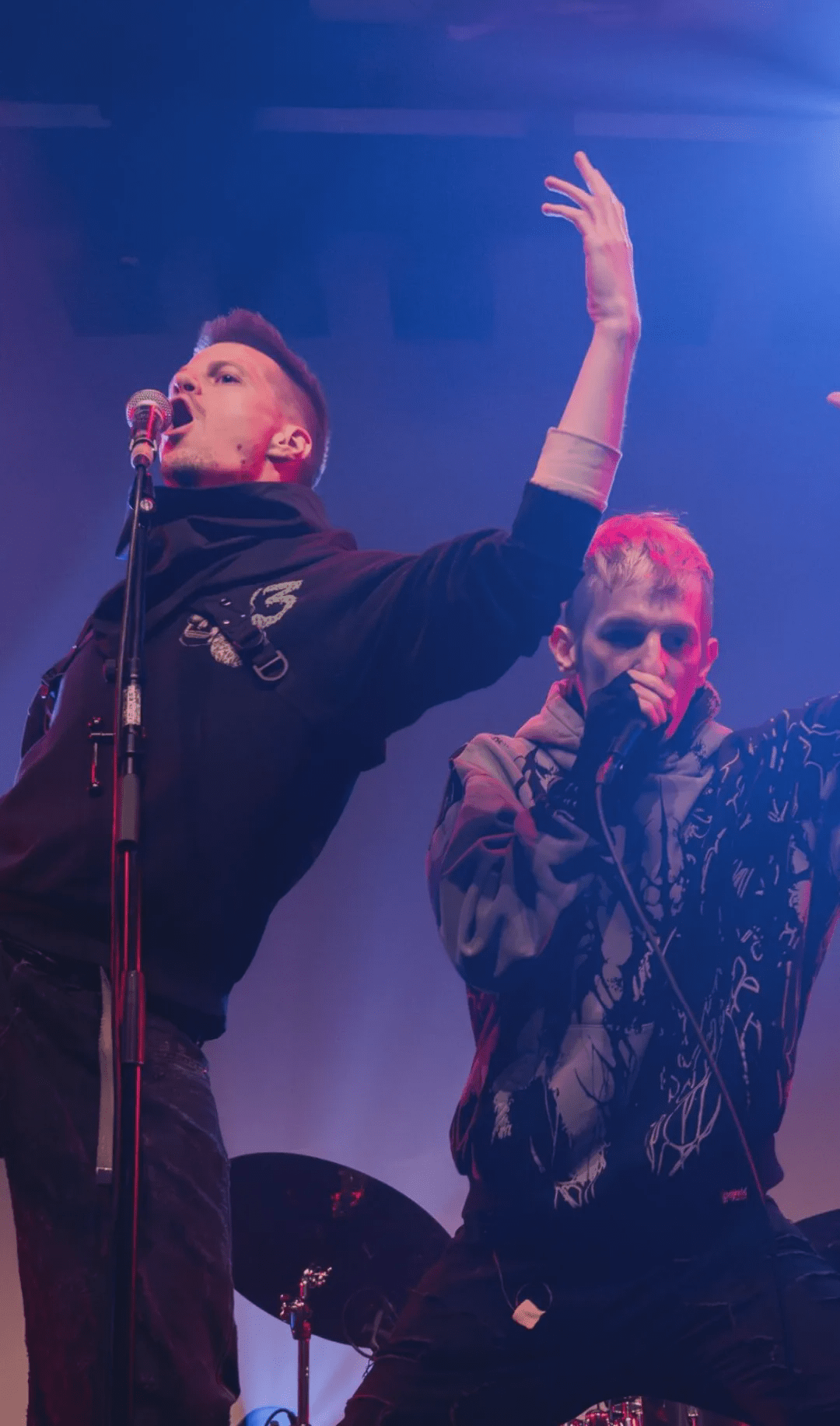

At the same time, we use it as an instrument ourselves. What we love most is playing live. We have performed across the United States and Europe, from underground raves in Berlin to metal shows in bars, and even at cocktail parties at the United Nations. Our models are trained on data from across existing music genres, so we can generate, mix, and combine any genres, and even create new ones. It is essentially a music genre creator, and it is reliable enough for live improvisation.

In our performances, we ask the audience to shout out music genres, such as Afrobeats, electronic music, country music, or deathcore. Then we try to blend them on the spot. We coined the term 'instant DJ' for this: it is like a DJ performance, except the tracks aren't prepared in advance. Our model is incredibly fast, capable of generating a three- to four-minute song in three seconds, so we can take a suggestion and immediately get a complete track. Usually, a DJ brings a USB drive filled with music, but here the model keeps filling the library during the performance. The audience knows what we are doing and participates, so even if the performance is bad, it's still fun.

OpenSea:

I love this. I'm trying to imagine what you look like in all these different settings. How did you end up at the United Nations?

DADABOTS:

Music hackers have extensive networks and help each other find performance opportunities. My dear friend and long-time collaborator LJ Rich is incredibly talented; she has a form of synesthesia linking taste and hearing and can improvise piano pieces on any theme. We collaborated on some hackathon projects, and later she hosted a United Nations event called 'AI for Good.' She invited us to be the opening DJs and told us, 'You can perform at the UN, but no metal music, only peace music.' The most extreme type of music we could play was drum and bass.

Everyone at the United Nations loves Afrobeats. The most interesting part of working at the UN is meeting people from all around the world and learning about the music of their countries. Some come from Colombia and love joropo, while others come from Nigeria and love Afrobeats. We ask, 'What would happen if we fused these styles together?' The model can find the balance statistically and create new music.

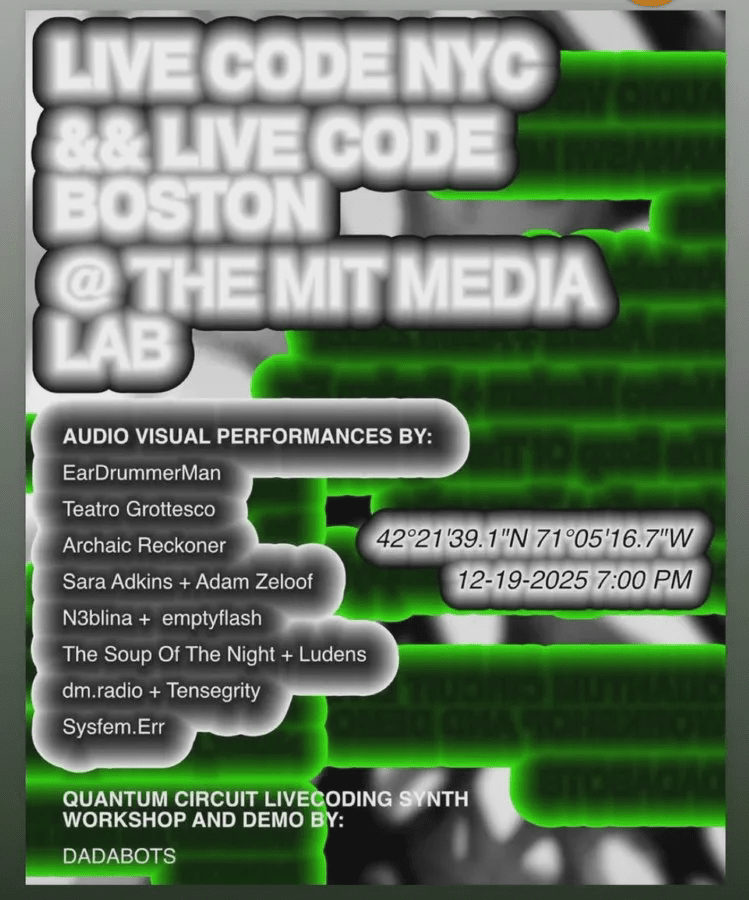

My favorite recent fusion is the Ghost Pepper Salsa we will perform tomorrow night at Planet Marfa. Salsa itself fuses Latin American, South American, Caribbean, and Afro-Cuban styles. We mix it with the music Zach grew up listening to: Northeast mathcore, breakcore, drum and bass, and hardcore punk. Combining hardcore punk with hand drums makes it even more explosive, wilder than a punk drummer, and adding bossa nova jazz harmonies makes the music more complex.

Whenever Brazilian elements are added, the rhythm and harmony of the music become richer, and that's the fun of it: creating an entirely new musical style. Perhaps musicians will eventually create it themselves, but here we can hear how it would sound from a statistical perspective and realize that it's a brilliant idea.

OpenSea:

I greatly appreciate your real-time work and your use of algorithms you developed yourselves to achieve it. You once mentioned that the aim is to enhance human capabilities, not to completely replace humans. Do you still believe that? What do you think good human-machine collaboration should look like?

DADABOTS:

When it comes to artificial intelligence, each of us faces a choice: use it to take shortcuts or use it to enhance ourselves. The first path may lead to regression; the second can make us better. People's aversion to artificial intelligence stems from the realization that many only want the shortcut. What we want is an AI system that makes music creation more challenging. With Prompt Track, we can make DJ performances more difficult because the tracks don't even exist yet.

Now that we have code, we are no longer limited to singles or albums; we can have a generative process that produces music indefinitely. No band would play continuously for six years without stopping, but algorithms can, and that in itself is a new form of art. We are very curious to see where this technology can take us: a whole new, challenging field.

I've always loved extreme metal because it is incredibly difficult to play. Take Necrophagist, for example: their music is extremely fast, rhythmically dense, and incredibly precise. Playing it requires tremendous effort, and the challenge itself is highly appealing. That drive is what pushed us into machine learning, one of the hardest parts of programming, and into deep learning, the pinnacle of computer science.

Reshaping people's perception of AI music is a challenge. Most people think AI music is just apps that create songs at the push of a button. While that is cool, it gives the impression that AI can only produce low-effort art. Our challenge is to showcase the tremendous effort behind AI music.

OpenSea:

I completely agree. I love how you frame AI as either a shortcut or a tool for enhancement. You often leave flaws and lower fidelity in your work. Why has this roughness become part of your aesthetic?

DADABOTS:

Good question. In the early days of neural synthesis, for various reasons, only lo-fi audio sounded good. Black metal, for example, deliberately pursues extremely bad audio quality: it is recorded with the cheapest, worst microphones, so the band sounds as if it is playing from a distance. That gives black metal its unique atmosphere, and it made the genre ideal early training data. The higher the fidelity, the harder the audio is to learn. The lower the fidelity, the more limited the expressiveness and the easier the patterns are to learn. For generative black metal, noise, chaos, and lo-fi audio are all good choices.

In the end, it became one of the earliest high-quality generative music works. When we put the generative black metal album on Bandcamp, people really listened to it as music. That was in 2017. Now, in 2025, the models can handle a range of tasks, including high-definition electronic music and sound design, but lo-fi audio still has a unique charm because it asks your brain to fill in the blanks.

Gestalt psychology suggests that if you cover part of a face, the brain will automatically fill in the rest. When you listen to lo-fi music, the brain fills in the parts that can't be heard, which is completely different from hi-fi music. Hi-fi music presents all the details to you, whereas lo-fi lets the brain fill in the blanks, and that is wonderful in itself.

OpenSea:

Well answered. My last question is: What does it mean to you to be here in Marfa, with these people, participating in this event?

DADABOTS:

In Marfa's Art Blocks community, people take generative art very seriously. I don't know of any other place that gathers people who have dedicated their entire lives to generative art and music, creating every day, a bit crazy yet philosophical. I love exploring people's thoughts. Why is every one of your works over the past 15 years filled with clouds? Why clouds? Taking control of your life and giving it meaning is exhilarating in itself.

This is the world of generative art. In a cryptocurrency world filled with negativity and scams, I feel that the truly beautiful 1% is here, in the Art Blocks community. That is why I came, and why I will keep coming back.

OpenSea:

That's great, thank you. This has been a wonderful experience.

DADABOTS:

Thank you.

Disclaimer: This content is for informational purposes only and should not be considered financial or trading advice. Mentioning specific projects, products, services, or tokens does not constitute endorsement, sponsorship, or recommendation by OpenSea. OpenSea does not guarantee the accuracy or completeness of the information provided. Readers should verify any statements in this article before taking any action, and readers are responsible for conducting due diligence before making any decisions.
