I used to believe that transparency was the ultimate solution. In crypto, it felt almost unquestionable. If everything was visible and verifiable, trust would naturally emerge. Systems would align. Adoption would follow clarity.

But what I observed in practice didn’t support that belief.

Transparency increased visibility, but not necessarily discipline. Activity was easy to measure, but harder to sustain. Users appeared, but they didn’t always return. What looked like progress often felt temporary.

That’s where the discomfort began.

It made me question whether transparency alone could support real systems or whether something else was missing.

Looking closer, I started noticing patterns that didn’t align with the narratives we tend to repeat.

Open systems showed participation, but coordination was inconsistent. Governance existed, but it was diffuse. Accountability was visible, yet not always enforced. On the other side, more controlled environments showed stronger consistency but at the cost of flexibility and broader composability.

What felt “off” wasn’t failure. It was fragmentation.

Ideas like transparency, confidentiality, and interoperability sounded important. But in practice, they existed as isolated priorities. Users still faced friction. Developers rebuilt logic across environments. Institutions hesitated to rely on systems that didn’t align with operational constraints.

The systems worked, but not together.

Over time, my way of evaluating systems changed.

I stopped asking whether a system was open or closed. I started asking whether it reduced repeated effort. Whether it allowed behavior to persist across contexts. Whether it worked without requiring constant user awareness.

From concept to execution.

From narrative to usability.

The systems that endure don’t force attention. They reduce it. They operate quietly, allowing interaction to continue without redefinition at every step.

That became the lens I now use.

When I came across the $SIGN deployment framework, I didn’t initially see it as something fundamentally different. At first, it felt like a familiar categorization: public, private, hybrid. Labels that already exist across systems.

But upon reflection, what stood out wasn’t the categories. It was the framing.

#SignDigitalSovereignInfra does not treat deployment as ideology. It treats it as constraint.

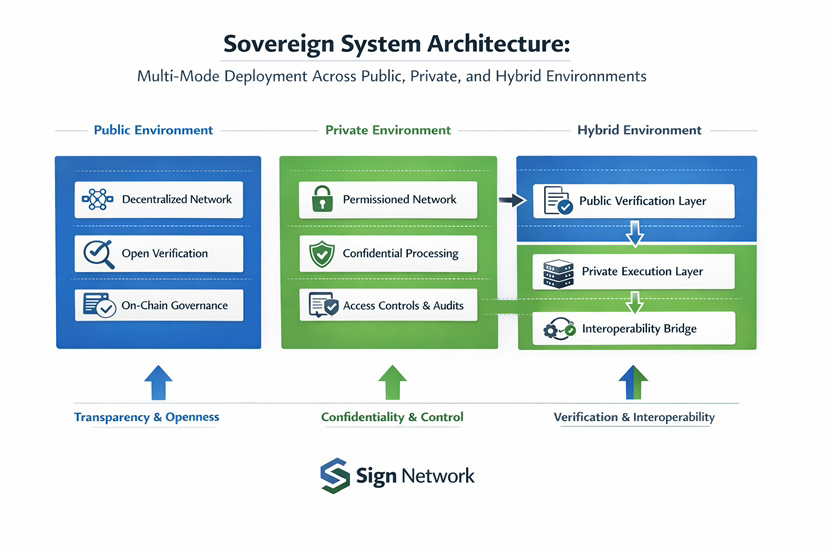

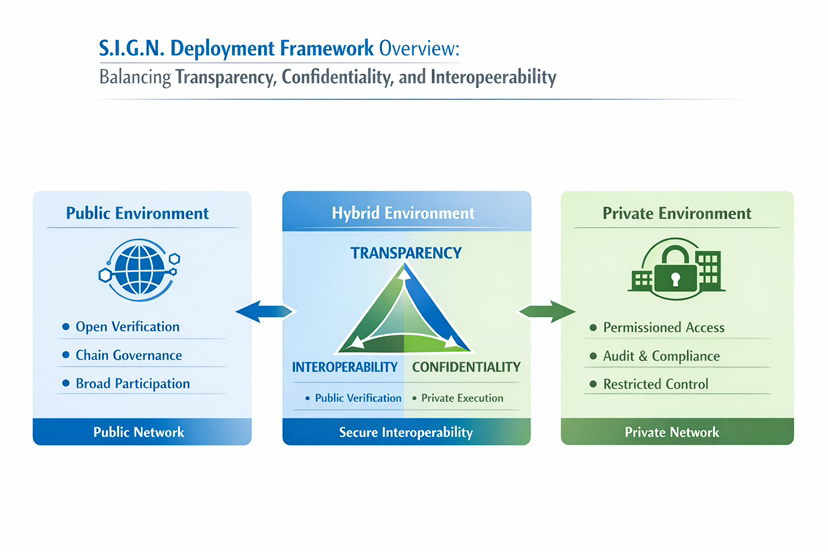

Public, private, and hybrid modes are not competing visions. They are different responses to real-world requirements: transparency, confidentiality, and control. The framework doesn’t attempt to collapse these tradeoffs. It structures them.

That subtle shift makes the system feel more grounded.

It led me to a more precise question:

Can a system maintain public verifiability while allowing execution to adapt to context?

Or, more directly: can transparency, confidentiality, and interoperability coexist without undermining each other?

This is where the deployment framework becomes meaningful.

In @SignOfficial, public deployment modes optimize for transparency-first systems. Verification is open. Participation is broad. Governance is expressed through chain parameters at the L2 level or contract-level logic on L1. Anyone can observe and validate outcomes.

But openness introduces coordination challenges. Participation is accessible, but not always consistent. Behavior becomes harder to align without constraints.

Private deployment modes take a different approach. They prioritize confidentiality and compliance. Access is permissioned. Participation is governed through membership controls and audit policies. Systems operate with defined boundaries.

This creates discipline. But it constrains composability by design.

Hybrid deployment introduces a more structured separation.

Public verification remains intact: identity, attestations, and proofs can exist in a verifiable layer. But execution occurs in private environments, where operational control and regulatory constraints are enforced.

Interoperability, in this model, is not optional.

It becomes critical infrastructure.

Trust assumptions between environments must be explicitly defined. Verification and execution no longer happen in the same space, so coordination becomes the system’s central challenge.
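To make that separation concrete for myself, I find it helps to sketch it. What follows is a minimal illustration, not the framework's actual API: `PublicVerificationLayer`, `PrivateExecutor`, `trusted_issuers`, and the attestation fields are all names I made up. The public side holds only digests that anyone can check. The private side writes its trust assumption down as an explicit list of issuers and refuses to execute unless both checks pass.

```python
import hashlib
import json
from dataclasses import dataclass, field


def digest(attestation: dict) -> str:
    """Deterministic SHA-256 digest of an attestation payload."""
    canonical = json.dumps(attestation, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass
class PublicVerificationLayer:
    """Hypothetical public layer: stores digests only, never payloads."""
    _digests: set = field(default_factory=set)

    def publish(self, attestation: dict) -> str:
        d = digest(attestation)
        self._digests.add(d)
        return d

    def is_published(self, d: str) -> bool:
        return d in self._digests


@dataclass
class PrivateExecutor:
    """Hypothetical permissioned environment with an explicit trust policy."""
    public_layer: PublicVerificationLayer
    trusted_issuers: frozenset  # the trust assumption, stated rather than implied

    def execute(self, attestation: dict, action: str) -> str:
        if attestation.get("issuer") not in self.trusted_issuers:
            raise PermissionError("issuer is not trusted by this environment")
        if not self.public_layer.is_published(digest(attestation)):
            raise PermissionError("attestation is not publicly verifiable")
        return f"executed {action} for {attestation['subject']}"


if __name__ == "__main__":
    public = PublicVerificationLayer()
    att = {"issuer": "issuer-a", "subject": "alice", "claim": "kyc-passed"}
    public.publish(att)
    executor = PrivateExecutor(public, frozenset({"issuer-a"}))
    print(executor.execute(att, "transfer"))
```

The sketch leaves out signatures, revocation, and any real chain. The only thing it is meant to show is that verification and execution live in different places, and that the link between them is a policy someone has to write down.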

This is not simplification. It is structured complexity.

What stands out to me is how closely this mirrors real-world systems.

Financial infrastructure doesn’t operate in a single environment. Identity is verified once, but reused across multiple layers. Transactions pass through both public and controlled systems. Compliance exists alongside transparency, not in opposition to it.

S.I.G.N. reflects this reality.

Not by abstracting it away, but by structuring it into deployment modes that can be composed depending on context.

This becomes especially relevant in regions where digital infrastructure is still forming. In parts of the Middle East and South Asia, systems are being built rapidly, but often without continuity.

Public systems provide access. Private systems provide control. But without a framework connecting them, trust remains fragmented.

A deployment model that allows verification to persist while execution adapts begins to address that gap.

There is also a broader market dynamic at play.

Crypto tends to reward visibility. Systems that maximize transparency attract more attention. Systems that prioritize control or confidentiality often receive less.

But attention is not usage.

Usage appears in repetition. In systems that don’t require users to restart processes. In workflows that institutions can rely on without adaptation.

Markets often price expectations. Infrastructure reveals itself through necessity.

Still, there are real challenges.

For this framework to work, identity and verification must be embedded into actual workflows. Not as optional features, but as foundational components. If attestations are not reused, the system loses its advantage.
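Reuse is easier to reason about with a toy example. The sketch below assumes a hypothetical shared `AttestationStore`. Both it and `expensive_check` are placeholders of mine, not part of any real SDK. The point is that a second workflow asking the same question references the existing result instead of running verification again.

```python
from dataclasses import dataclass, field


@dataclass
class AttestationStore:
    """Hypothetical shared store: one verification, many references."""
    _verified: dict = field(default_factory=dict)
    verifications_run: int = 0

    def get_or_verify(self, subject: str, claim: str, verify) -> bool:
        key = (subject, claim)
        if key not in self._verified:
            # First time this (subject, claim) pair is seen: verify and record.
            self.verifications_run += 1
            self._verified[key] = bool(verify(subject, claim))
        # Every later caller reuses the recorded result.
        return self._verified[key]


def expensive_check(subject: str, claim: str) -> bool:
    # Placeholder for a real identity or compliance check.
    return claim == "kyc-passed"


if __name__ == "__main__":
    store = AttestationStore()
    store.get_or_verify("alice", "kyc-passed", expensive_check)  # onboarding
    store.get_or_verify("alice", "kyc-passed", expensive_check)  # payments
    print(store.verifications_run)
```

A real system would also need expiry and revocation, which this sketch ignores. If every workflow keeps its own store, the counter climbs with each integration, and that is exactly the lost advantage described above.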

Developer integration becomes critical. Without consistent implementation, interoperability remains theoretical.

There is also the issue of scale.

Hybrid systems depend on coordination across environments. Without sufficient shared usage, the benefits of interoperability don’t materialize. Systems remain isolated, even if the architecture allows connection.

This is the usage threshold problem.

Until repeated interaction reaches a certain level, the system behaves like a concept. Beyond that point, it begins to function as infrastructure.

There is a more subtle layer beneath all of this.

Technology often focuses on enabling possibilities. But systems that persist rely on constraints. On boundaries that shape behavior. On rules that create consistency over time.

Transparency without structure can feel chaotic.

Control without flexibility can feel restrictive.

Balancing both is not just a technical decision. It is a behavioral one.

It depends on how participants interact, not just how systems are designed.

What would build real conviction for me is not expansion or announcements.

It is patterns.

Applications where identity is required, not optional.

Users interacting repeatedly without re-verification.

Attestations being referenced across systems instead of recreated.

Sustained activity across both public and private environments.

Not spikes. Continuity.

That is when a system starts behaving like infrastructure.
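If I wanted to track one of these patterns rather than just watch for it, a rough starting point could be a reuse ratio: of all attestation events, what share reference something that already exists rather than create something new. The event format and the `reuse_ratio` name below are my own assumptions, purely for illustration.

```python
from collections import Counter


def reuse_ratio(events):
    """Share of attestation events that reference an existing attestation.

    `events` is an iterable of (kind, attestation_id) pairs, where kind is
    "create" or "reference". Returns 0.0 when there are no such events.
    """
    counts = Counter(kind for kind, _ in events)
    total = counts["create"] + counts["reference"]
    return counts["reference"] / total if total else 0.0


if __name__ == "__main__":
    log = [
        ("create", "a1"),
        ("reference", "a1"),
        ("reference", "a1"),
        ("create", "a2"),
    ]
    print(reuse_ratio(log))
```

A ratio that stays high over many periods would suggest continuity. A ratio that jumps once and then collapses looks more like a spike.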

I no longer see deployment as a purely technical choice.

It is a reflection of how systems handle reality: how they balance transparency, confidentiality, and interoperability under constraint.

The question is not which mode is superior.

It is whether the system can hold together when all three are required at once.

Because in the end, the difference between an idea that sounds necessary and infrastructure that becomes necessary is not design.

It is repetition.

It is whether the system continues to be used quietly, consistently, and without needing to justify itself.