Most people assume that once something is proven, the hard part is over. A signature exists, a record is verified, and the system can move forward with confidence. But that assumption only holds if everyone involved agrees on what that proof actually means. And that agreement is never as automatic as it looks.

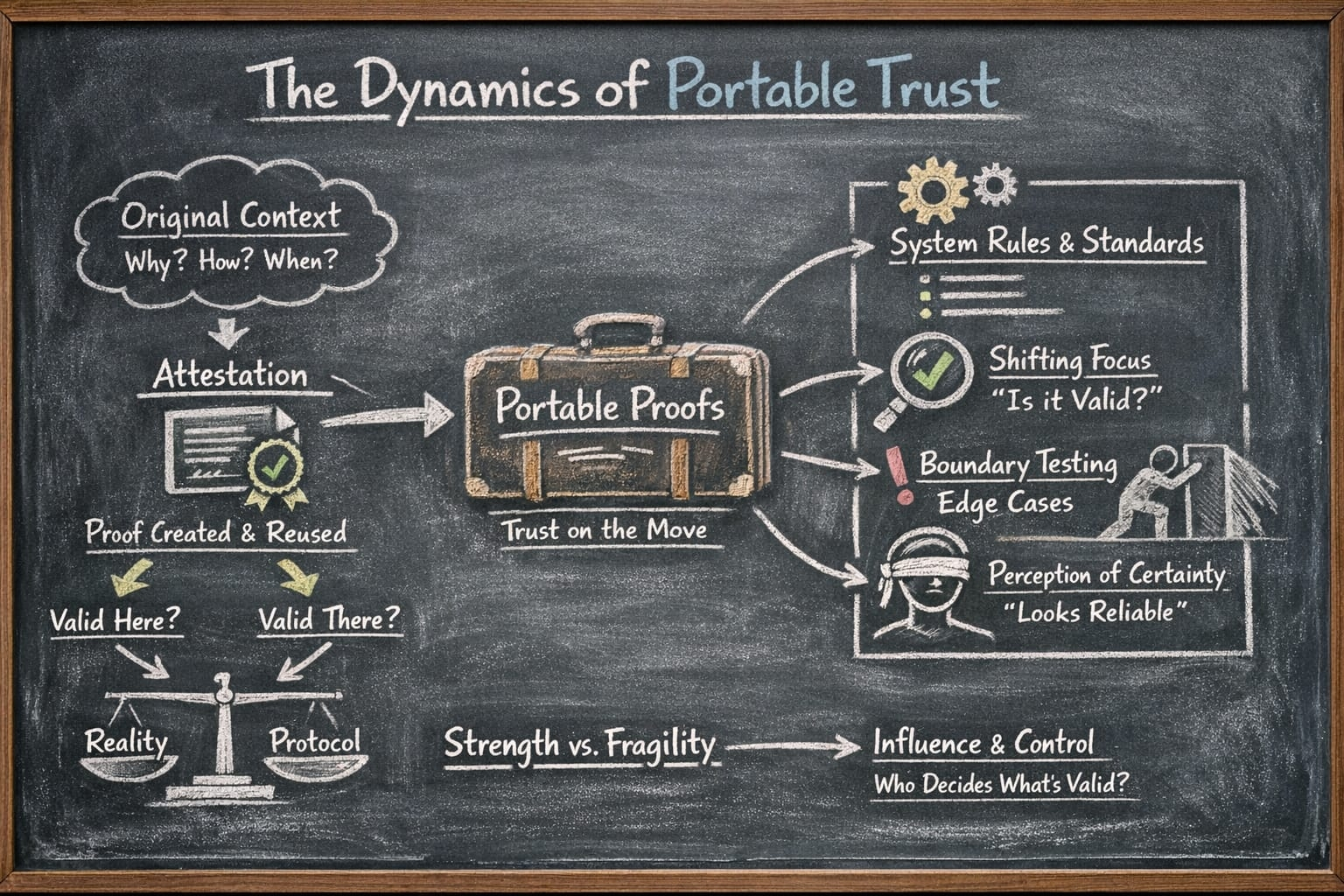

What Sign Protocol is really doing is trying to make trust easier to carry. Instead of re-evaluating everything from scratch, you rely on attestations that have already been created. Something was signed, so it can be reused. Something was verified, so it can be accepted again elsewhere. It feels efficient, almost like trust has been packaged into a portable form.

But the moment trust becomes portable, it also becomes detached.

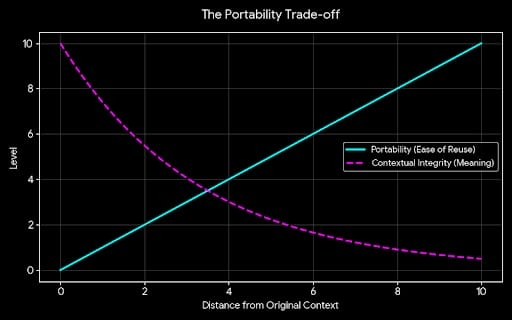

The original situation in which a statement was made (why it was made, what conditions surrounded it, how accurate or complete it really was) doesn’t fully travel with the proof. What travels is a compressed version, a cleaner signal that looks definitive. That’s useful, but it quietly shifts the burden. Instead of asking “what happened?” people start asking “is this valid according to the system?”

That difference is small at first, but it compounds.

Because validity inside a system is always defined somewhere. It might not be obvious, and it might not be centralized in a single place, but it exists in the form of standards, expectations, and shared assumptions. Over time, these definitions begin to matter more than the raw facts themselves. A proof doesn’t need to be deeply understood; it just needs to pass the checks that others recognize.

This is where things get interesting under pressure.

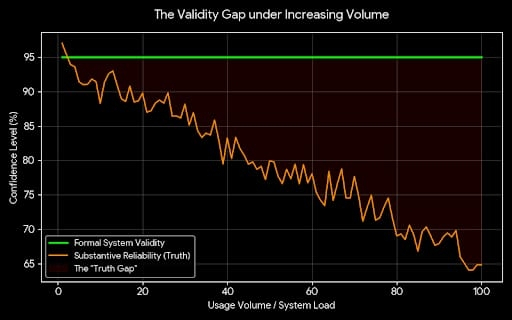

At low volume, most attestations behave as expected. They align with reality closely enough that the system feels reliable. But as usage expands, edge cases stop being rare. Proofs get reused in contexts they weren’t designed for. Timing gaps appear. Revocations lag behind usage. Actors learn how to operate within the boundaries of what is technically valid, even if the outcome stretches what most people would intuitively consider trustworthy.
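To make that gap concrete, here is a minimal sketch of how a proof can pass every mechanical check and still be the wrong thing to rely on. Everything in it is hypothetical: the `Attestation` fields, the `formally_valid` and `fit_for_use` functions, and the integer clock are illustrative assumptions, not Sign Protocol's actual data model or API.

```python
from dataclasses import dataclass
from typing import Optional, Set


@dataclass(frozen=True)
class Attestation:
    # Hypothetical fields, chosen for illustration only.
    issuer: str
    schema: str
    context: str                      # the setting the claim was made for
    issued_at: int                    # arbitrary integer clock
    expires_at: int
    revoked_at: Optional[int] = None  # when the issuer revoked it, if ever


def formally_valid(att: Attestation, now: int, trusted_issuers: Set[str],
                   revocations_synced_at: int) -> bool:
    """Checks a verifier can run mechanically with what it currently knows."""
    # The verifier only sees revocations published before its last sync.
    revocation_seen = (
        att.revoked_at is not None and att.revoked_at <= revocations_synced_at
    )
    return (
        att.issuer in trusted_issuers
        and att.issued_at <= now < att.expires_at
        and not revocation_seen
    )


def fit_for_use(att: Attestation, now: int, trusted_issuers: Set[str],
                revocations_synced_at: int, context: str) -> bool:
    """Formal validity plus two things the proof does not carry by itself."""
    # Ground truth about revocation, which a real verifier may not have yet.
    actually_revoked = att.revoked_at is not None and att.revoked_at <= now
    return (
        formally_valid(att, now, trusted_issuers, revocations_synced_at)
        and att.context == context    # reused where it was meant to be used
        and not actually_revoked
    )


# Revoked at t=500, but the verifier last synced revocations at t=400.
att = Attestation("issuer-a", "kyc-v1", "exchange-onboarding", 0, 1000, revoked_at=500)
trusted = {"issuer-a"}

passes_checks = formally_valid(att, 600, trusted, 400)
reliable_same_context = fit_for_use(att, 600, trusted, 400, "exchange-onboarding")
reliable_other_context = fit_for_use(att, 600, trusted, 400, "loan-underwriting")
```

In this sketch the attestation passes the formal check at t=600 because the revocation has not reached the verifier yet, while both `fit_for_use` calls fail: one because of the lagging revocation, the other because the proof is being reused in a context it was not issued for.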

At that point, the system isn’t just verifying information anymore. It’s shaping behavior.

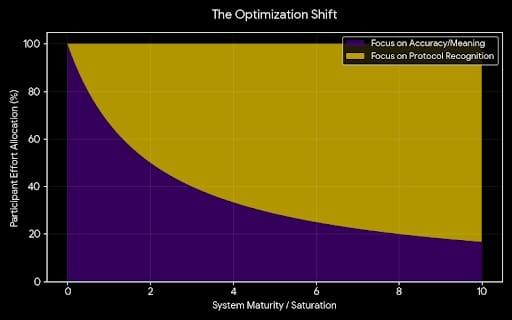

People begin optimizing for what the protocol recognizes, not necessarily for what is most accurate or meaningful. And once that shift happens, the question of “who decides what’s valid” becomes less abstract. It shows up in subtle ways: in which schemas are widely accepted, which issuers are trusted by default, and which interpretations of a proof are treated as standard.

No one needs to explicitly take control for influence to accumulate. It emerges wherever decisions about validity become dependencies for others.

That doesn’t make the system fragile by default, but it does mean its strength isn’t purely technical. It depends on whether those shared definitions can hold up when conditions stop being clean: when there are disagreements, when incentives push participants to test boundaries, when the difference between a formally correct proof and a substantively reliable one starts to matter.

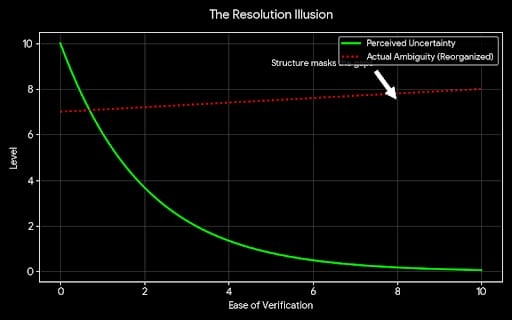

There’s also a quieter effect that builds over time. As proofs become easier to produce and verify, the presence of a proof starts to feel like the resolution of uncertainty. It creates a sense that things are under control, that ambiguity has been reduced. But often, the ambiguity hasn’t disappeared; it’s just been reorganized into a format that is easier to accept without questioning.

That works until the system is asked to carry more weight than it usually does.

If Sign Protocol succeeds, it won’t be because it made verification possible; that part is already understood. It will be because it managed to keep meaning intact as proofs moved across different contexts, scales, and incentives. It will have to show that validity doesn’t drift too far from reality, even when participants have reasons to stretch it.

If it can do that, then it becomes more than infrastructure. It becomes a stable reference point in environments that don’t naturally agree on trust.

If it can’t, then it may still function, but in a more limited way: less as a foundation for certainty, and more as a system that organizes uncertainty into something that feels structured, right up until the moments when that structure is tested and the underlying gaps become harder to ignore.