There’s something almost comforting about the idea that trust can be cleaned up. That it can be trimmed down, formatted, and stored in a way that feels efficient and reusable. Systems like Sign Protocol lean into that instinct. They suggest that instead of carrying around the full weight of context every time we need to verify something, we can rely on structured claims, or attestations, that are lighter, cheaper, and easier to move.

It sounds practical. And in many ways, it is.

But if you sit with it a little longer, the question starts to shift. It’s no longer about whether attestations can be made cleaner. It’s about whether making them cleaner actually changes what they represent.

Because what Sign really does is not strengthen truth. It reshapes how truth is packaged.

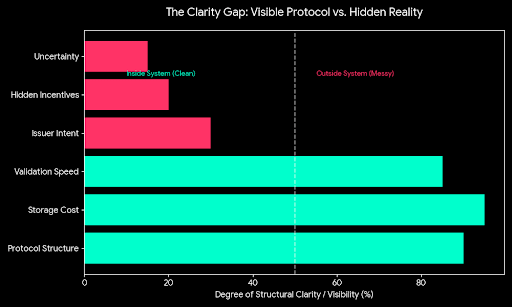

An attestation is still just a claim. Someone said something is valid, or verified, or approved. The protocol can make that claim easier to record and easier to share, but it doesn’t reach back into the moment it was created. It doesn’t see how careful the issuer was, what they overlooked, or what incentives shaped their decision. All of that stays outside the system, even as the output looks precise and structured inside it.
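
To make that concrete, here is a minimal sketch of what a structured claim might hold. The `Attestation` fields and the `is_well_formed` check are hypothetical and chosen for illustration; they are not Sign Protocol's actual schema or API. The point is what the record has no field for: how carefully the issuer checked before signing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Attestation:
    # Hypothetical fields for illustration only; not Sign Protocol's schema.
    issuer: str
    subject: str
    claim: str
    issued_at: int                     # Unix timestamp
    revoked_at: Optional[int] = None   # None means not revoked


def is_well_formed(a: Attestation) -> bool:
    """Checks only what the record itself can show."""
    return bool(a.issuer and a.subject and a.claim) and a.issued_at > 0


# One issuer ran a thorough review, the other clicked "approve".
# Nothing in the record can tell them apart.
careful = Attestation("issuer-a", "alice", "kyc-passed", 1_700_000_000)
careless = Attestation("issuer-b", "alice", "kyc-passed", 1_700_000_000)
```

Both records pass the same structural check, which is exactly the problem: the diligence behind each one lives outside anything the check can see.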

And that’s where the tension begins to feel real.

The cleaner the claim becomes, the easier it is to treat it as complete. When something is neatly formatted and easy to access, it starts to carry an implied confidence. Not because it deserves it, but because it looks settled. The mess that produced it (the uncertainty, the judgment calls, the potential errors) fades into the background.

In practice, that can quietly change behavior.

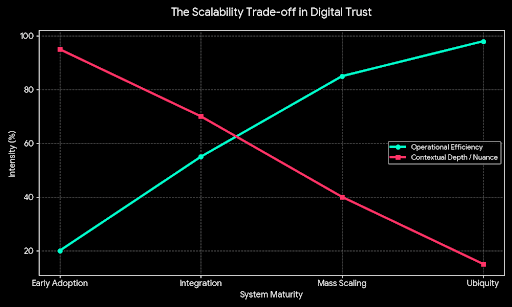

If it becomes cheap and simple to issue attestations, more of them will exist. More entities will participate, more systems will depend on them, and more decisions will be made based on their presence alone. At first, that feels like progress. Things move faster. Integrations become smoother. There’s less need to rebuild trust from scratch.

But over time, volume changes meaning.

When claims are everywhere, their weight starts to shift. The difference between a carefully issued attestation and a loosely generated one can become harder to see, especially when both appear identical at the surface. The system hasn’t failed; it’s doing exactly what it was designed to do. But the environment around it becomes noisier.

And in a noisier environment, interpretation becomes the real work.

This is where the limits of structure start to show. A protocol can organize information, but it cannot fully guide how that information is understood. It cannot resolve disagreements between issuers. It cannot ensure that a revoked claim is noticed in time. It cannot prevent someone from relying on a signal that was always weaker than it appeared.
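
One way to picture where that interpretive work lands is a relying-party check like the sketch below. Everything in it is an assumption made for illustration: the `Claim` type, the `assess` function, the per-issuer weights, and the one-hour staleness window are all invented here and are not part of any protocol. What matters is that each threshold is a judgment the relying party has to make for itself.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Claim:
    # Hypothetical shape, for illustration only.
    issuer: str
    revoked_at: Optional[int] = None   # Unix timestamp, None if not revoked


def assess(
    claim: Claim,
    issuer_weight: Dict[str, float],   # relying party's own view of issuers
    revocation_checked_at: int,        # when revocation status was last fetched
    now: int,
    max_staleness: int = 3600,         # assumed policy: one hour
    min_weight: float = 0.5,           # assumed policy threshold
) -> str:
    # A known revocation always wins.
    if claim.revoked_at is not None and claim.revoked_at <= now:
        return "reject: revoked"
    # An old revocation check means a revocation may have been missed.
    if now - revocation_checked_at > max_staleness:
        return "defer: revocation status is stale"
    # Unknown issuers get no weight by default.
    if issuer_weight.get(claim.issuer, 0.0) < min_weight:
        return "defer: issuer not trusted enough"
    return "accept"
```

None of these decisions can be read off the attestation itself. Two relying parties holding the same claim could reasonably reach different answers.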

Those gaps don’t disappear. They move.

They move into the spaces between systems, into the assumptions users make, into the operational decisions that happen off-chain. And because the on-chain representation looks clean, those off-chain complexities can become easier to underestimate.

That’s not necessarily a flaw. It may simply be the cost of making something usable at scale.

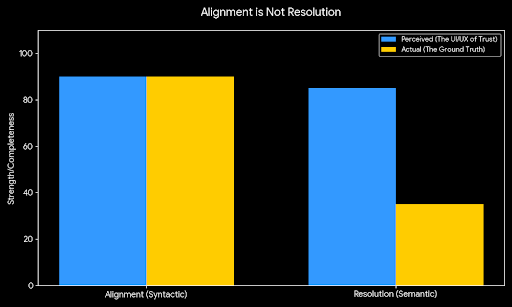

There is real value in turning scattered, inconsistent trust signals into something more standardized. It reduces duplication. It makes coordination easier. It gives builders a common reference point instead of forcing them to invent their own logic every time. In a fragmented ecosystem, that kind of alignment matters.

But alignment is not the same as resolution.

The deeper uncertainties (who should be trusted, how much, under what conditions) are still there. They are just less visible in the moment you interact with the system. And that creates a subtle risk: the system can feel more certain than it actually is.

The real test comes when that feeling is challenged.

When something goes wrong, when claims conflict, when an issuer’s credibility is questioned, or when a decision depends on more nuance than an attestation can carry: those are the moments that reveal what the system actually provides. Not in theory, but in practice.

If the structure helps people navigate those moments, if it makes it easier to trace, question, and adjust, then it’s doing something meaningful. It’s not eliminating uncertainty, but it’s helping contain it in a way that remains usable under pressure.

If, instead, the structure mostly helps things move faster while leaving those harder moments just as difficult, or even harder because the underlying complexity was hidden, then the benefit is more superficial. The system still works, but it works by smoothing over uncertainty rather than engaging with it.

That distinction doesn’t show up clearly at the beginning. Early on, everything feels controlled. The use cases are clean, the participants are aligned, and the outcomes are predictable enough to reinforce confidence. It’s only later, as the system expands and the range of behavior widens, that the edges start to matter.

And that’s where this stops being a story about efficiency and becomes a question of resilience.

Sign Protocol is betting that making trust easier to express will also make it easier to use. That’s a reasonable bet. But it quietly depends on something else: that users, developers, and institutions will continue to treat those expressions with the same care that was required before they were simplified.

If that discipline holds, the system could become a useful layer that reduces friction without distorting meaning. If it doesn’t, the system may still scale, still integrate, and still produce clean outputs, but those outputs might carry more confidence than they deserve.

So the outcome doesn’t really hinge on whether the protocol works as designed. It likely will. The real question is whether, as it spreads, it encourages clearer thinking about trust or simply makes uncertainty easier to package and move around without ever fully confronting it.