I remember sitting with someone who had done everything right.

They had the documents. The proof. The history. On paper, there was no real reason anything should go wrong.

But when the time came to verify everything, things started falling apart. One system didn’t recognize a document from another. A platform asked for a format that had never even been issued. Slowly, the whole process stopped being about whether they actually qualified.

It turned into a test of whether they could navigate a broken system.

What made it frustrating wasn’t just the rejection. It was the strange feeling that the result had very little to do with reality. The system wasn’t really measuring the truth. It was measuring whether the truth fit neatly inside its own limitations.

The more I think about it, the more I realize how common this problem actually is.

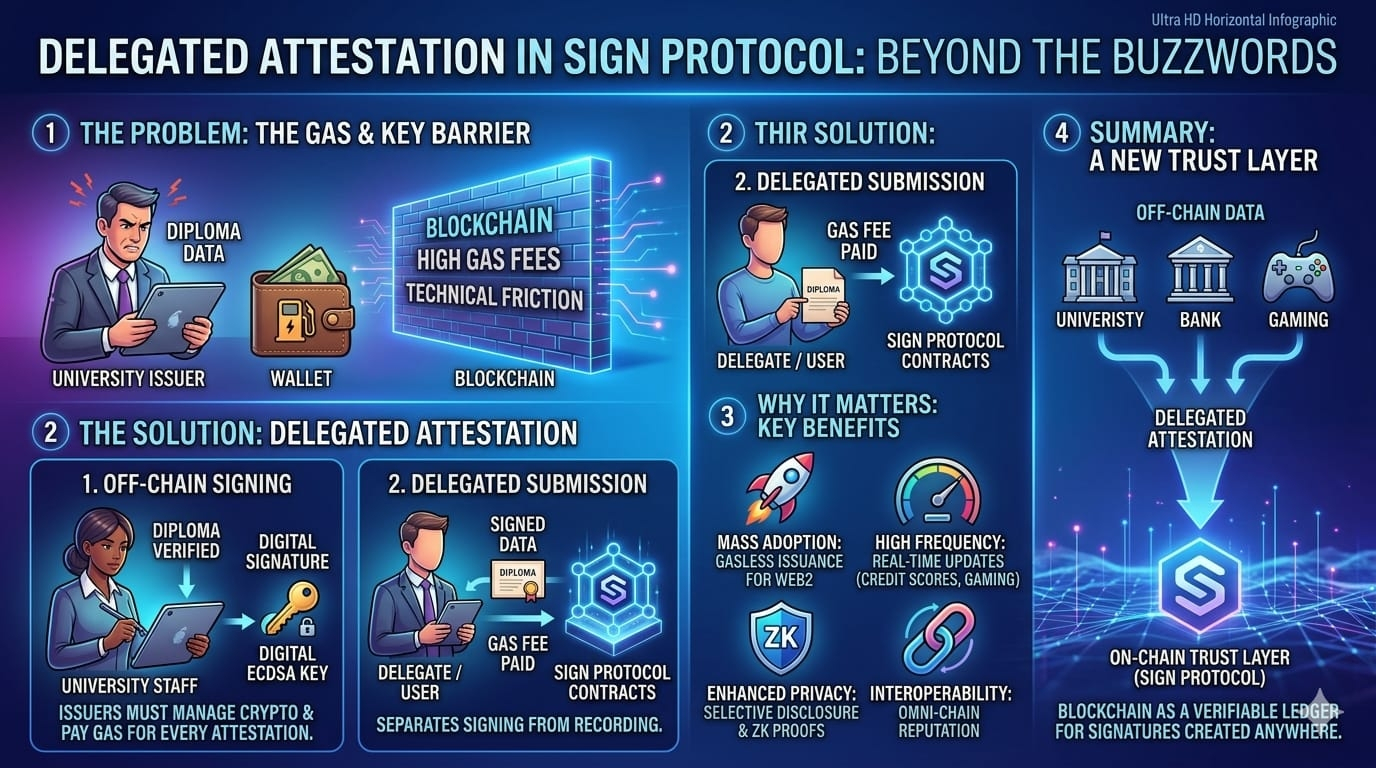

Verification sounds like a small technical step, but it quietly controls access to a lot of things — opportunities, rewards, recognition. And yet most verification systems today weren’t built for fairness or long-term accuracy. They were built for convenience within their own isolated environments.

That’s where the real inefficiency hides.

Every platform builds its own rules. Every system defines its own version of proof. And most of them don’t really communicate with each other. Instead of one shared layer of truth, we end up with scattered pockets of verification that constantly reset context.

You don’t carry your credibility forward.

You rebuild it. Again and again. Every time you move to a new system.

And that creates a subtle shift.

Success starts depending less on what someone actually did and more on how well they understand the system. The people who succeed aren’t always the ones who contributed the most. Often, they’re just the ones who know how to present their work in a format the system accepts.

The more I look at it, the less it feels like a small inefficiency.

It feels like a structural flaw.

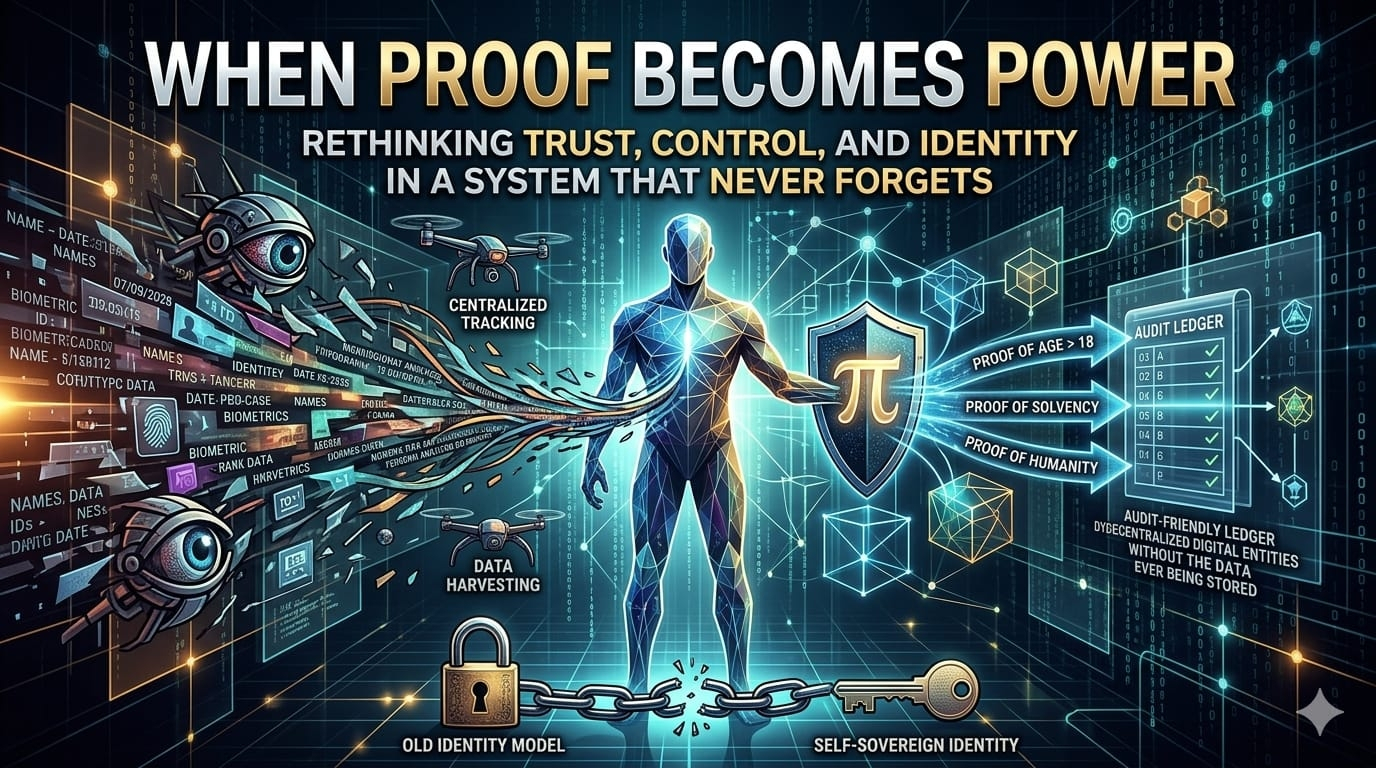

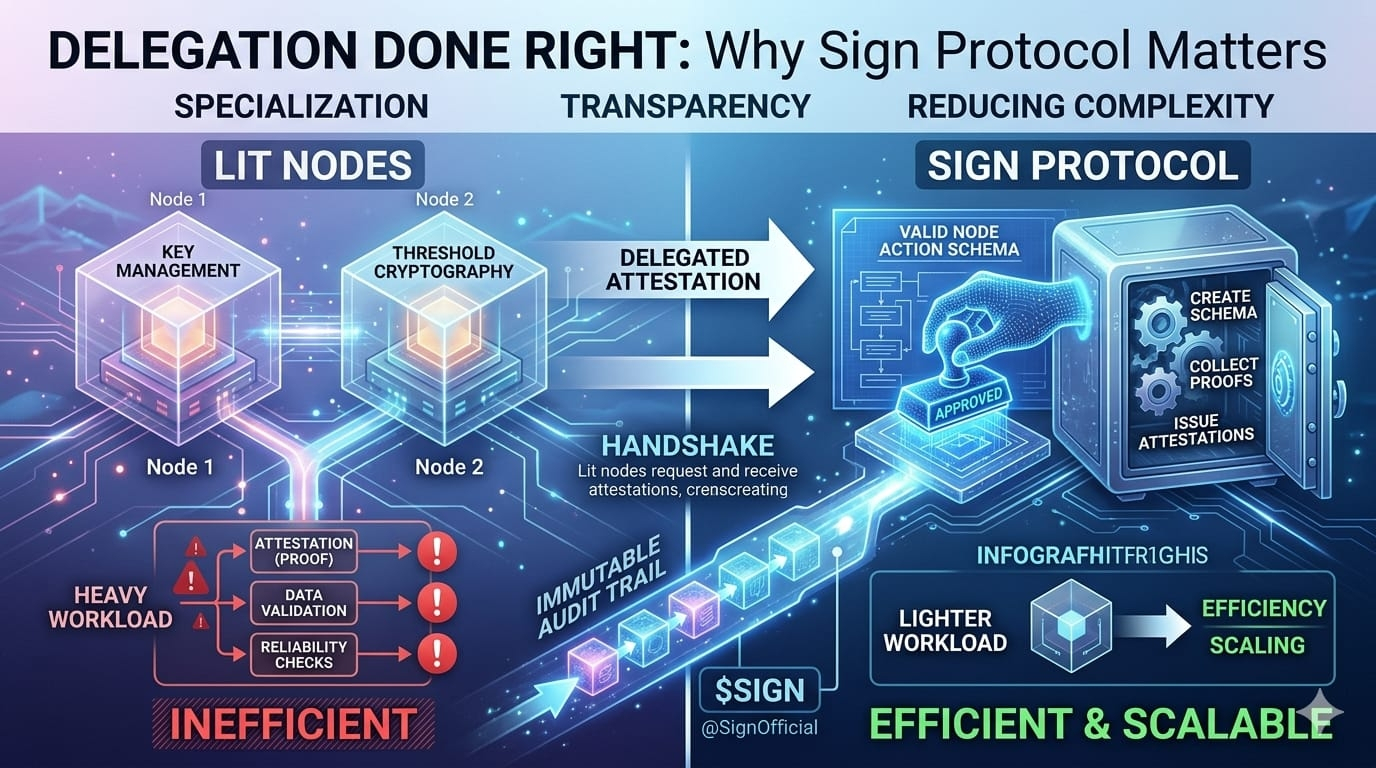

That’s the point where something like $SIGN starts to make sense to me. Not just as another product, but as an attempt to rethink the foundation underneath this whole process.

Because the real issue isn’t that verification doesn’t exist.

It’s that verification has no continuity.

What Sign seems to be trying to do is turn credentials into something persistent. Not just records sitting inside one platform, but proofs that can move, be referenced, and actually mean something across different systems.

That idea changes the role of verification completely.

Instead of being a one-time checkpoint, it becomes part of a larger system of memory.
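To make that a little more concrete, here is a rough sketch of what a credential that travels could look like. This is not Sign's actual API or data format. The names `issue`, `verify`, `canonical`, and `ISSUER_KEY` are made up for illustration, and HMAC stands in for the public-key signatures a real system would use, only so the example runs on the Python standard library.

```python
import hashlib
import hmac
import json

# Hypothetical issuer key. A real system would use public-key signatures so
# verifiers never hold a secret; HMAC keeps this sketch stdlib-only.
ISSUER_KEY = b"demo-issuer-key"


def canonical(claim: dict) -> bytes:
    """Serialize a claim deterministically so every platform hashes the same bytes."""
    return json.dumps(claim, sort_keys=True, separators=(",", ":")).encode("utf-8")


def issue(claim: dict, key: bytes = ISSUER_KEY) -> dict:
    """Attach a signature to a claim, producing a credential that can travel."""
    sig = hmac.new(key, canonical(claim), hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": sig}


def verify(credential: dict, key: bytes = ISSUER_KEY) -> bool:
    """Check a credential without contacting the platform that issued it."""
    expected = hmac.new(key, canonical(credential["claim"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, credential["signature"])


credential = issue({"subject": "alice", "action": "completed_audit", "year": 2024})
print(verify(credential))  # True

# Changing the claim without re-signing it breaks the proof.
tampered = {
    "claim": {**credential["claim"], "subject": "mallory"},
    "signature": credential["signature"],
}
print(verify(tampered))  # False
```

The point of the sketch is that the check depends only on the credential and the issuer's key, not on the platform where the work originally happened. That is what lets the proof be carried forward instead of rebuilt.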

And memory matters more than we usually realize.

When systems have no memory, every decision starts from zero. Contributions get reduced to small snapshots instead of full context. That’s where misallocation starts happening. That’s where unfair distribution quietly slips in.

So when Sign connects this idea to things like token distribution, it actually makes sense.

If you can verify what someone has really done in a consistent way, distributing value becomes less random. Airdrops don’t have to rely on vague filters. Incentives don’t have to reward surface-level activity. Ideally, they start aligning with real participation.
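As a simple illustration of what "less random" could mean, the sketch below splits a token pool in proportion to verified contribution counts. Everything here is an assumption for the sake of the example: the `distribute` function, the idea that each participant has a single integer score, and the pro-rata rule itself. An actual distribution would involve far more design decisions.

```python
from __future__ import annotations


def distribute(pool: int, verified: dict[str, int]) -> dict[str, int]:
    """Split `pool` tokens in proportion to each participant's verified score."""
    total = sum(verified.values())
    if total == 0:
        # Nothing verified means nothing to allocate.
        return {name: 0 for name in verified}
    # Integer division never hands out more than the pool;
    # any remainder simply stays undistributed.
    return {name: pool * score // total for name, score in verified.items()}


# Hypothetical scores: the number of verified contributions per participant.
scores = {"alice": 6, "bob": 3, "carol": 1}
print(distribute(1000, scores))  # {'alice': 600, 'bob': 300, 'carol': 100}
```

The rule is transparent and repeatable, which is the appeal. It also shows how much weight rests on the score itself: whatever goes into that number is what ends up being rewarded.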

But this is also where things get complicated.

Because the moment you start structuring contribution, you also start defining it.

And that raises a difficult question.

What actually counts?

Is it activity? Consistency? Impact? Loyalty? Timing?

Any system that tries to answer that will end up making trade-offs. And those trade-offs influence behavior. If the system rewards what is easiest to verify, people will naturally optimize for that. Over time, the system stops just measuring reality.

It starts shaping it.

So while something like Sign might reduce randomness, it also introduces structure. And structure, if handled poorly, can create its own form of bias.

That’s the tension.

On one side, there’s chaos — unclear rules, inconsistent outcomes, and systems that struggle to scale fairly.

On the other side, there’s structure — clearer logic, stronger verification, but also the risk of becoming rigid.

There isn’t a perfect balance.

And there’s another layer that often gets overlooked.

Verification is often treated as if it were trust. But the two aren’t the same.

A system can verify that something happened. It can confirm a condition was met. But it can’t fully capture intent, context, or meaning.

It can tell you something was measurable.

Not necessarily that it was meaningful.

That gap matters.

Because if distribution starts relying too heavily on what can be verified, systems might slowly drift toward rewarding what is visible instead of what is actually valuable.

And that’s a difficult problem to solve.

Even with good infrastructure.

Then there’s the question of power.

If Sign becomes widely used as a layer for credentials and distribution, where does influence really sit? Not necessarily in the data itself, but in the standards. In the definitions that decide what counts as a valid credential.

Decentralization doesn’t eliminate influence.

It just redistributes it.

And sometimes that influence becomes harder to see.

So the real challenge isn’t just building a system that works.

It’s building one that can evolve. One that stays open enough to adapt without becoming trapped by its own structure.

That’s not just a technical challenge.

It’s a governance problem. A design problem. A human problem.

And there isn’t a perfect solution.

But this is also why Sign feels different from many projects I see.

It doesn’t pretend the problem is simple. It seems to engage with the messy reality underneath — the place where systems break under scale, incentives distort behavior, and fairness becomes harder to define than to promise.

Most projects stay on the surface where everything sounds clean and polished.

This one seems to be operating closer to the friction.

Of course, none of that guarantees success. If anything, it shows how difficult the path really is. Solving verification at scale isn’t just about efficiency.

It’s about deciding how truth, contribution, and value get interpreted across different systems.

And those interpretations will never be perfectly neutral.

But maybe that’s the point.

Maybe the value of something like Sign isn’t that it creates a perfect system.

Maybe it’s that it forces us to notice how imperfect the current ones already are.

It exposes the gaps we’ve gotten used to. The inconsistencies we’ve stopped questioning. The quiet ways value gets misallocated without anyone looking closely enough.

And once those gaps become visible, they’re harder to ignore.

Because at that point, the problem isn’t hidden inside broken processes anymore.

It’s right in front of us.

And that’s when things begin to shift.

Not because one system fixes everything overnight.

But because expectations change.

From accepting opacity to expecting clarity.

From repeating verification to carrying it forward.

From guessing value to trying to measure it with some consistency.

That shift doesn’t happen dramatically.

It’s slow. Subtle. Almost invisible at first.

But over time, it changes how systems behave.

And more importantly, it changes what people expect from them.

And once expectations change, going back to the old way starts to feel unacceptable.

That’s the real weight behind something like Sign.

Not in what it promises today.

But in what it quietly makes harder to tolerate tomorrow.