i'll start with the impressive part because it is genuinely impressive.

i grew up watching government aid programs in my country move incredibly slowly. subsidy money took months to reach people. funds meant for specific purposes somehow ended up somewhere else. not because people weren't trying, but because the system had no way to verify, in real time, whether money was actually being used the way it was supposed to.

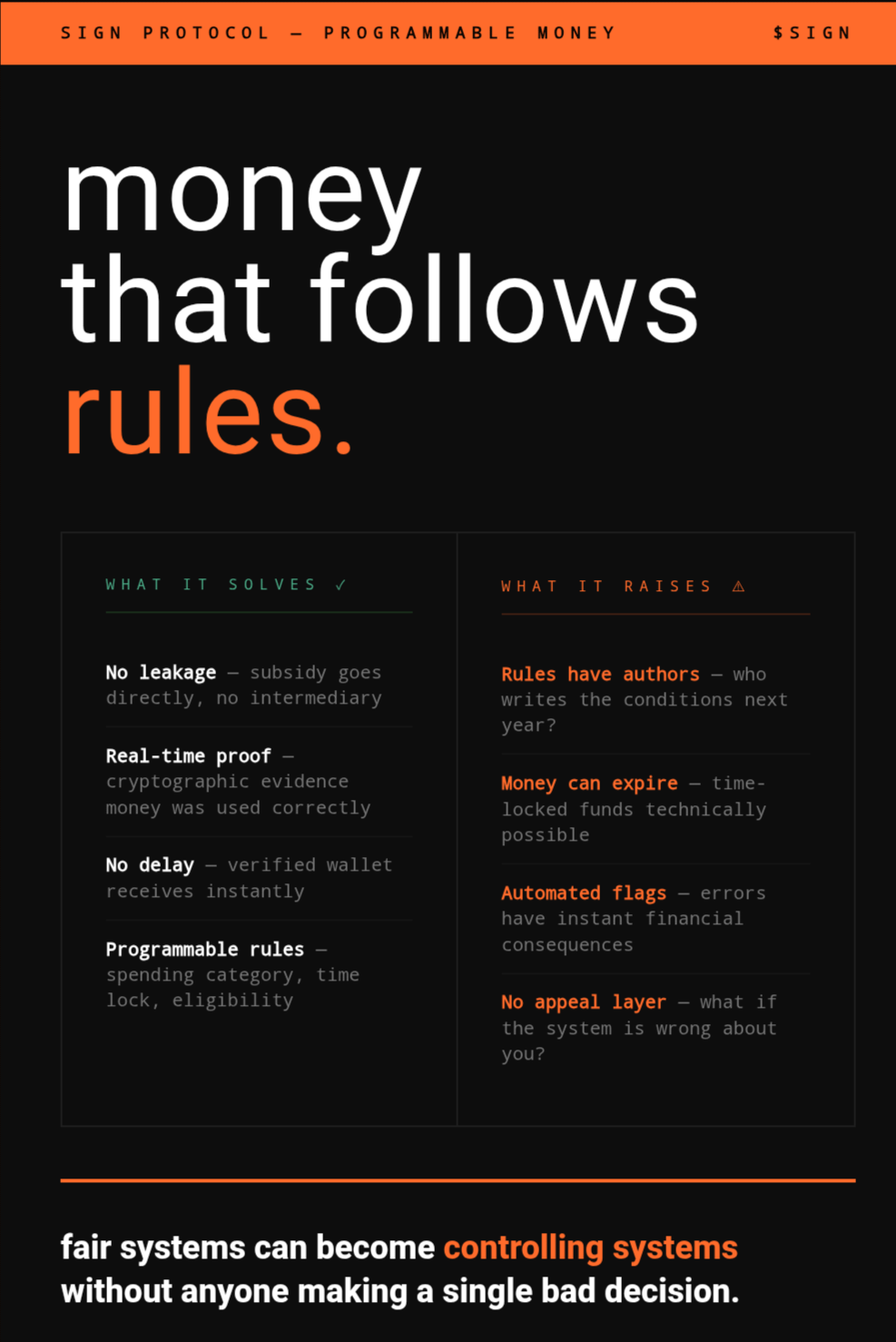

SIGN's programmable money layer changes that math completely. a CBDC built on this infrastructure doesn't just move value — it carries rules with it. a subsidy disbursement can be programmed so it can only be spent on food. a grant can be time-locked. benefits can go directly into a verified citizen's digital wallet without touching a single intermediary. no leakage. no delay. cryptographic proof that the money went where it was supposed to go.
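to make "money that carries rules" concrete, here's a minimal sketch of what a spending check could look like. this is not SIGN's actual API or data model, which i haven't seen at that level of detail. every name here (`ProgrammableToken`, `can_spend`, the category strings, the dates) is hypothetical and made up for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import FrozenSet, Optional, Tuple


@dataclass(frozen=True)
class ProgrammableToken:
    """hypothetical unit of rule-carrying money (an assumption, not SIGN's model)."""
    amount: int                                          # balance in minor units, e.g. cents
    allowed_categories: Optional[FrozenSet[str]] = None  # None means spendable anywhere
    not_before: Optional[datetime] = None                # time lock: unusable before this
    expires_at: Optional[datetime] = None                # unusable from this moment on


def can_spend(token: ProgrammableToken, category: str, price: int,
              now: datetime) -> Tuple[bool, str]:
    """return (allowed, reason) for one attempted purchase."""
    if price <= 0 or price > token.amount:
        return False, "invalid amount"
    if token.not_before is not None and now < token.not_before:
        return False, "time-locked"
    if token.expires_at is not None and now >= token.expires_at:
        return False, "expired"
    if token.allowed_categories is not None and category not in token.allowed_categories:
        return False, "category not allowed"
    return True, "ok"


# a food-only subsidy that expires at the start of 2030 (made-up values)
subsidy = ProgrammableToken(
    amount=5000,
    allowed_categories=frozenset({"food"}),
    expires_at=datetime(2030, 1, 1, tzinfo=timezone.utc),
)
now = datetime(2029, 6, 1, tzinfo=timezone.utc)

print(can_spend(subsidy, "food", 1200, now))         # (True, 'ok')
print(can_spend(subsidy, "electronics", 1200, now))  # (False, 'category not allowed')
```

in this sketch, ordinary cash is just the case where every optional field is left as `None`: the only check left is whether you have enough of it.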

from a governance standpoint, this is the kind of thing that policy people have been dreaming about for decades.

but.

programmable money means money that follows rules. and rules have authors.

i keep thinking about what it actually feels like to hold money that has conditions attached to it. the cash in my wallet right now can buy anything legal. i don't have to justify it. i don't have to prove i'm spending it correctly. it just works.

a CBDC that can be restricted to certain categories of spending is a different kind of thing. it might be more efficient. it might reduce fraud dramatically. it might get resources to people faster and more fairly. all of that is probably true.

but it's also money that can be turned off. money that can expire. money that knows what you bought and whether that purchase was approved under whatever rule set was active at the time.

SIGN says the privacy architecture handles this — selective disclosure, controllable visibility, minimal data exposure by default. i believe that's genuinely how the system is designed. the technology exists to make this less invasive than it sounds.

what i can't quite resolve is the feeling underneath all of it.

because the question isn't really whether the technology can protect your privacy. it's who decides when the rules change. who writes the conditions on your money next year, and the year after. and what happens if you're flagged by an automated compliance system whose decisions you have no way to appeal.

i've seen how error-prone manual systems are. automated systems at national scale will have errors too. the difference is that an automated error can have instant financial consequences, before anyone even realizes it happened.

i think SIGN is building something that could make financial systems genuinely fairer. i also think fair systems can become controlling systems without anyone making a single bad decision. just a thousand small choices that each seemed reasonable at the time.

if your money could be programmed to only be spent in certain ways — would you feel protected or restricted?