@SignOfficial #SignDigitalSovereignInfra $SIGN

I’ve started to think about something I call credibility latency—the invisible delay between when information appears and when the market actually trusts it enough to act. It’s not measured in milliseconds or block times. It’s measured in hesitation. In second guesses. In the subtle pause before clicking confirm.

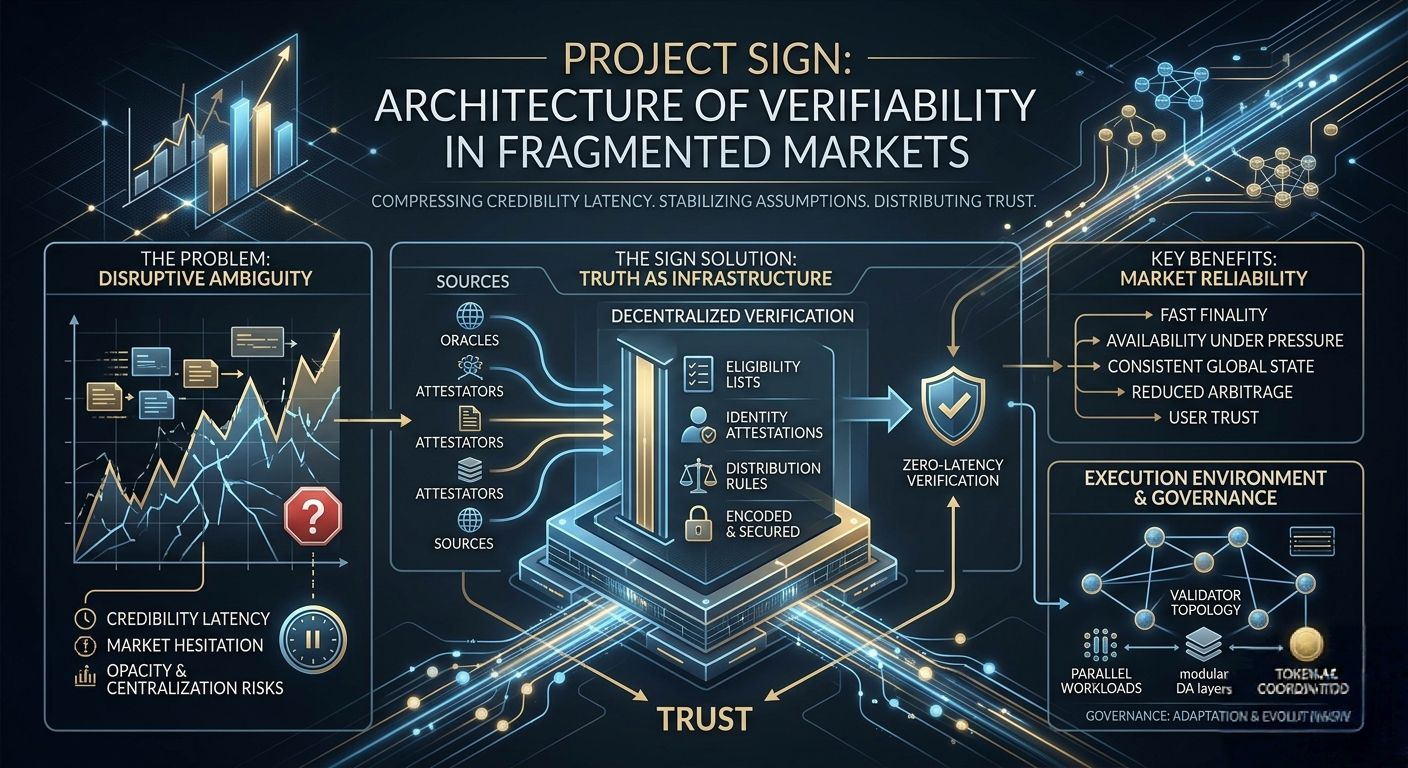

When I look at crypto markets through that lens, most systems don’t fail because they lack data. They fail because they cannot make that data settle into belief fast enough. And that is where something like SIGN begins to matter—not as a feature set, but as an attempt to compress that latency into something closer to zero.

What stands out to me about SIGN is not just that it handles credential verification and token distribution, but that it tries to reposition truth itself as infrastructure. Not truth in an abstract sense, but verifiable claims—who qualifies, who signed, who owns, who deserves access—encoded in a way that markets can rely on without constant reinterpretation.

Because in practice, decentralization quietly collapses the moment data ownership recentralizes. You can have distributed validators, parallel execution, and fast finality, but if the source of truth—eligibility lists, identity attestations, distribution rules—lives behind opaque APIs or trusted intermediaries, then the system reintroduces the same dependency it claims to remove.

I’ve seen this play out in small, almost forgettable moments. A trader waiting on an airdrop snapshot that hasn’t finalized. A distribution delayed because off-chain verification didn’t sync with on-chain logic. A liquidation event triggered not by price movement alone, but by delayed oracle updates that distorted perceived collateral value. These aren’t headline failures. They’re micro-frictions. But they accumulate.

SIGN, at least in design, is trying to eliminate that layer of ambiguity.

The infrastructure question becomes unavoidable here. Because credential verification at scale is not just about correctness—it’s about availability under pressure. If the system that confirms eligibility becomes slow, fragmented, or selectively accessible, then distribution becomes uneven, and markets begin to price in distrust.

So I find myself thinking less about the interface and more about the underlying execution environment. What kind of chain supports this? Is it optimized for parallel verification workloads, or does it serialize them into bottlenecks? How does validator topology influence access to credential data? Are we looking at geographically distributed nodes with independent data availability, or clusters that introduce subtle centralization risks?

These are not theoretical concerns. Under congestion, even small inconsistencies in block production or data propagation can create divergence in who thinks they are eligible versus who actually is. And that gap is where arbitrage—both financial and informational—emerges.

SIGN’s model implies a world where credential data is broken apart, distributed, and reconstructed when needed. Whether through erasure coding or modular data layers, the goal is clear: no single point should control access to truth. But distributing data introduces its own tension. Availability improves, but coordination becomes harder. Privacy strengthens, but latency can creep in.

You don’t eliminate trade-offs. You move them.
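To make the "broken apart, distributed, and reconstructed" idea concrete, here is a minimal sketch of the simplest form of erasure coding: split a record into shards and add one XOR parity shard, so that any single lost shard can be rebuilt from the rest. This is an illustration only, not SIGN's actual scheme. The function names (`encode`, `reconstruct`) and the sample record are hypothetical, and production systems typically use stronger codes such as Reed-Solomon that tolerate more than one loss.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """XOR two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def encode(data: bytes, k: int) -> list:
    """Split data into k equal-size shards plus one XOR parity shard."""
    shard_len = -(-len(data) // k)  # ceiling division
    padded = data.ljust(shard_len * k, b"\0")  # zero-pad to a multiple of k
    shards = [padded[i * shard_len:(i + 1) * shard_len] for i in range(k)]
    parity = reduce(xor_bytes, shards)
    return shards + [parity]


def reconstruct(shards: list, original_len: int) -> bytes:
    """Rebuild the data when at most one shard (marked None) is missing."""
    missing = [i for i, s in enumerate(shards) if s is None]
    if len(missing) > 1:
        raise ValueError("single-parity scheme tolerates only one lost shard")
    shards = list(shards)
    if missing:
        # XOR of all surviving shards equals the missing one.
        present = [s for s in shards if s is not None]
        shards[missing[0]] = reduce(xor_bytes, present)
    # Drop the parity shard and strip the padding.
    return b"".join(shards[:-1])[:original_len]


# Hypothetical credential record, spread over 4 data shards + 1 parity shard.
claim = b"alice:eligible:2024-snapshot"
pieces = encode(claim, 4)
pieces[2] = None  # simulate one storage node being unavailable
recovered = reconstruct(pieces, len(claim))
```

The trade-off shows up even in this toy version: no single holder has the full record, but every read now depends on gathering enough shards, which is exactly where latency and coordination cost come back in.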

There’s also a psychological layer here that I don’t think gets enough attention.

Execution in crypto is not purely mechanical. It’s behavioral. The way a wallet prompts a signature, the way gas fees are abstracted, the clarity of what a transaction actually does—all of these shape how users feel about interacting with a system.

If verifying a credential requires multiple signatures, unclear prompts, or unpredictable fees, users hesitate. And hesitation, in markets, is costly. It changes entry points. It alters liquidity flow. It shifts outcomes.

SIGN’s relevance depends heavily on how invisible it can make its own complexity. If credential verification becomes as seamless as checking a balance, then it integrates into behavior. If not, it becomes another layer users try to bypass.

The deeper question, though, is about trust surfaces.

Every system that claims decentralization still makes assumptions. Some rely on trusted sequencers. Others depend on oracle networks that are only as reliable as their incentive structures. SIGN is no different. It has to decide where trust lives—whether in validators, attestors, or the mechanisms that aggregate and verify claims.

Partial centralization isn’t a flaw. It’s often a necessity. The real issue is whether it’s transparent and bounded.

Compared to other high-performance systems, the distinction here is subtle but important. Many chains optimize for throughput and execution speed, assuming that data correctness will follow. SIGN flips that priority. It assumes that execution without verifiable truth is fragile. That assumption changes how the system behaves under stress.

And stress is where things become real.

Imagine a high-volume distribution event—tens of thousands of users claiming tokens simultaneously. Network congestion rises. Oracles lag. Some nodes see updated credential states faster than others. A subset of users receives tokens earlier, potentially moving markets before others even confirm eligibility.

Now layer in leverage. Those tokens are used as collateral. Prices shift. Liquidations begin.

At that point, the question is no longer whether the system works in ideal conditions. It’s whether it maintains consistency under asymmetry. Whether two participants, acting at the same time, see the same reality.
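One way to picture "seeing the same reality" is to fingerprint each node's view of the credential state and check whether the fingerprints agree. The sketch below is a rough illustration under my own assumptions, not a description of how SIGN detects divergence: the node names, addresses, and the helper functions `state_digest`, `group_by_view`, and `divergent_keys` are all hypothetical.

```python
import hashlib
import json
from collections import defaultdict


def state_digest(state: dict) -> str:
    """Hash a credential-state mapping in a canonical (key-sorted) form."""
    canonical = json.dumps(state, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).hexdigest()


def group_by_view(node_states: dict) -> dict:
    """Group node names by the digest of the state each one reports."""
    groups = defaultdict(list)
    for node, state in node_states.items():
        groups[state_digest(state)].append(node)
    return dict(groups)


def divergent_keys(a: dict, b: dict) -> set:
    """Return the addresses whose eligibility differs between two views."""
    return {k for k in a.keys() | b.keys() if a.get(k) != b.get(k)}


# Hypothetical snapshot: node-c has already seen an update the others have not.
views = {
    "node-a": {"0xabc": True, "0xdef": False},
    "node-b": {"0xdef": False, "0xabc": True},
    "node-c": {"0xabc": True, "0xdef": True},
}
groups = group_by_view(views)
consistent = len(groups) == 1
disputed = divergent_keys(views["node-a"], views["node-c"])
```

If `groups` holds more than one digest, participants querying different nodes are acting on different eligibility sets, and `disputed` names the addresses where that gap sits.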

SIGN’s long-term credibility will depend on how it handles exactly these moments. Not the clean demos. The messy edges.

The token, in this context, becomes less interesting as an asset and more as a coordination mechanism. It incentivizes validators to maintain data availability, rewards accurate attestations, and aligns participants toward preserving system integrity.

If designed well, it reduces the need for blind trust. Not by eliminating trust, but by distributing it across actors with something at stake.

Governance, then, is not about control. It’s about adaptation. The ability to adjust verification standards, respond to new attack vectors, and evolve data structures without breaking continuity. A static system in a dynamic environment doesn’t last.

Liquidity and oracles tie everything back to reality.

Credential verification doesn’t exist in isolation. It feeds into who can trade, who can borrow, who can access yield. If the verification layer is slow or inconsistent, it distorts these downstream systems.

I’ve seen trades fail not because of poor strategy, but because the underlying data didn’t resolve in time. An oracle update arrives late. A bridge delays settlement. A credential check stalls. The position exists in a kind of limbo, and by the time clarity arrives, the opportunity is gone—or worse, the loss is locked in.

This is why ideology alone doesn’t carry systems forward. You can believe in decentralization, privacy, and openness, but if the system cannot deliver predictable, reliable outcomes at scale, users adjust. They move toward whatever works.

What I keep coming back to is that SIGN is attempting something structurally quiet but foundational. It’s not trying to outpace the market. It’s trying to stabilize the assumptions the market relies on.

That’s harder.

Because it requires designing not just for success, but for failure. For delayed data. For inconsistent nodes. For adversarial conditions where incentives are tested.

Most systems reveal their true nature under stress. SIGN will be no different.

In the end, the real test is simple to describe but difficult to pass.

Can the system ensure that data ownership remains genuinely decentralized, that verification remains consistent under pressure, and that users experience it as something reliable rather than something they need to question?

If credibility latency approaches zero—if the market stops hesitating—then SIGN becomes more than infrastructure. It becomes part of the baseline assumption of how things work.

If not, it risks becoming another layer that users route around. And markets are very efficient at ignoring what they cannot trust.
