If you thought Sign Protocol S.I.G.N was all about airdrops and wallets, then you are living in the past. In March 2026, the frontier is AI Model Provenance.

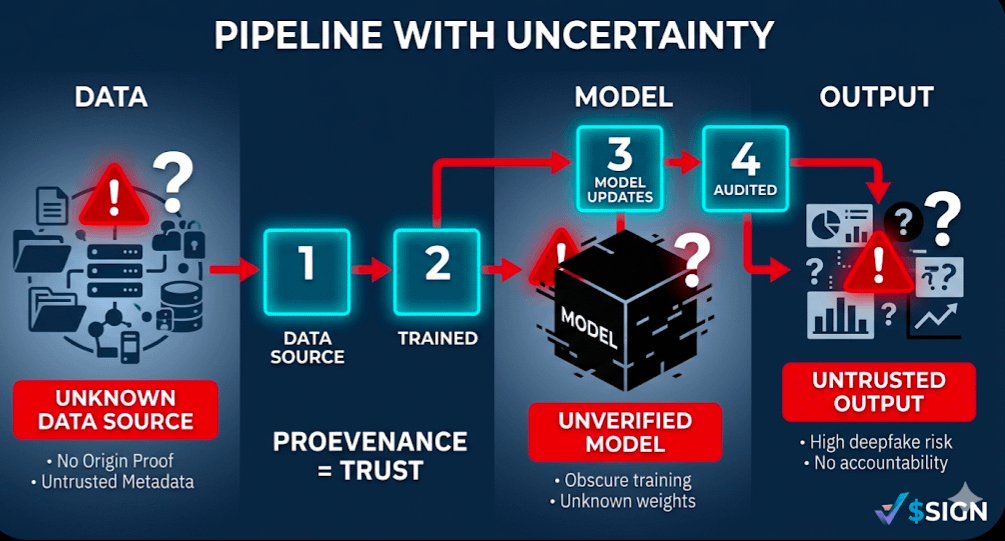

The Problem: The Untrusted AI

As AI gets more powerful, we have a massive "Trust Gap" problem: How do we know that an AI wasn’t tampered with? How do we know that the data used to train a medical AI was verified?

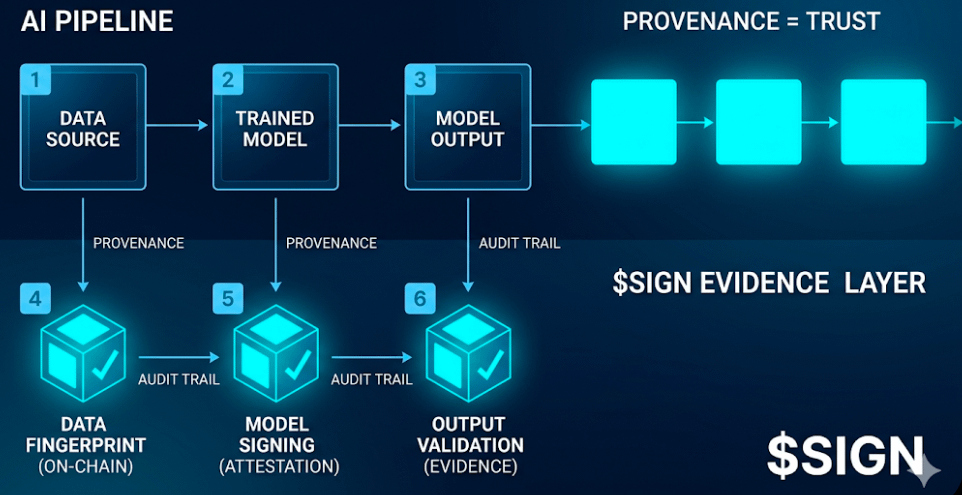

The Solution: Sign Protocol's Evidence Layer

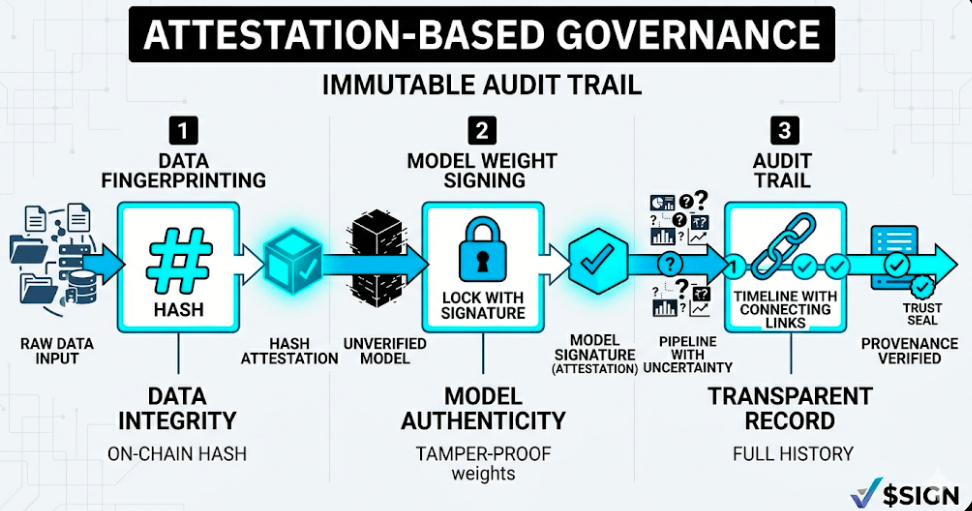

Rather than trusting an AI company to be honest with us, we are entering a world of Attestation-Based Governance.

1. Data Fingerprinting: Datasets are hashed, and the hashes are recorded as $SIGN attestations.

2. Model Weight Signing: When a model is "born," its weights are signed by the developers, creating a verifiable link between the developers and the exact weights they released.

3. Audit Trails: Any change to an AI model creates a new "thread" in the Evidence Layer, so undisclosed changes or newly introduced biases become detectable instead of hidden.
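The three steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Sign Protocol's actual API or SDK: the function names (`sha256_hex`, `sign`, `append_entry`, `verify_log`) and the record layout are hypothetical, and an HMAC with a shared demo key stands in for a real developer signature (a production system would use an asymmetric scheme such as Ed25519 and publish the records as attestations).

```python
import hashlib
import hmac
import json


def sha256_hex(data: bytes) -> str:
    """Step 1: fingerprint a dataset (or model weights) as a SHA-256 hex digest."""
    return hashlib.sha256(data).hexdigest()


def sign(body: dict, key: bytes) -> str:
    """Step 2: 'sign' a record. HMAC is an ASSUMED stand-in for a real digital signature."""
    message = json.dumps(body, sort_keys=True).encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def append_entry(log: list, event: str, payload: dict, key: bytes) -> dict:
    """Step 3: append a record that commits to the hash of the previous record."""
    prev_hash = log[-1]["entry_hash"] if log else "0" * 64
    body = {"event": event, "payload": payload, "prev_hash": prev_hash}
    entry = dict(body)
    entry["signature"] = sign(body, key)
    entry["entry_hash"] = sha256_hex(json.dumps(body, sort_keys=True).encode())
    log.append(entry)
    return entry


def verify_log(log: list, key: bytes) -> bool:
    """Recompute every hash and signature; any edited record breaks the chain."""
    prev_hash = "0" * 64
    for entry in log:
        body = {
            "event": entry["event"],
            "payload": entry["payload"],
            "prev_hash": entry["prev_hash"],
        }
        if entry["prev_hash"] != prev_hash:
            return False
        if sha256_hex(json.dumps(body, sort_keys=True).encode()) != entry["entry_hash"]:
            return False
        if not hmac.compare_digest(sign(body, key), entry["signature"]):
            return False
        prev_hash = entry["entry_hash"]
    return True


# Hypothetical demo data: real inputs would be dataset files and weight files.
key = b"demo-developer-key"
log = []
append_entry(log, "dataset_fingerprint", {"sha256": sha256_hex(b"training data bytes")}, key)
append_entry(log, "model_signed", {"sha256": sha256_hex(b"model weights v1")}, key)
append_entry(log, "model_updated", {"sha256": sha256_hex(b"model weights v2")}, key)
print(verify_log(log, key))  # True

# Quietly swapping the recorded weights hash is caught on verification.
log[1]["payload"]["sha256"] = sha256_hex(b"tampered weights")
print(verify_log(log, key))  # False
```

Because each record commits to the hash of the one before it, rewriting history means recomputing every later record, which is exactly what a public attestation layer is meant to make visible.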

Why "Irrelevant" is the Best Signal

Traders are busy watching today's 96M token release. Meanwhile, the real action is happening behind the scenes: $SIGN is becoming the "Security Guard" of AI. By providing a framework for structured verification of what happened and when, Sign is entering a multi-trillion-dollar industry that has nothing to do with "crypto vibes" and everything to do with the security of the entire tech industry.

Conclusion:

Trust in 2026 isn't a feeling; it's something you can verify cryptographically. Whether it's a Sierra Leone ID or an AI model training log, the infrastructure is the same.