I keep thinking about a very specific kind of failure that doesn’t look like failure at all… the kind where everything technically works, but the outcome still feels wrong.

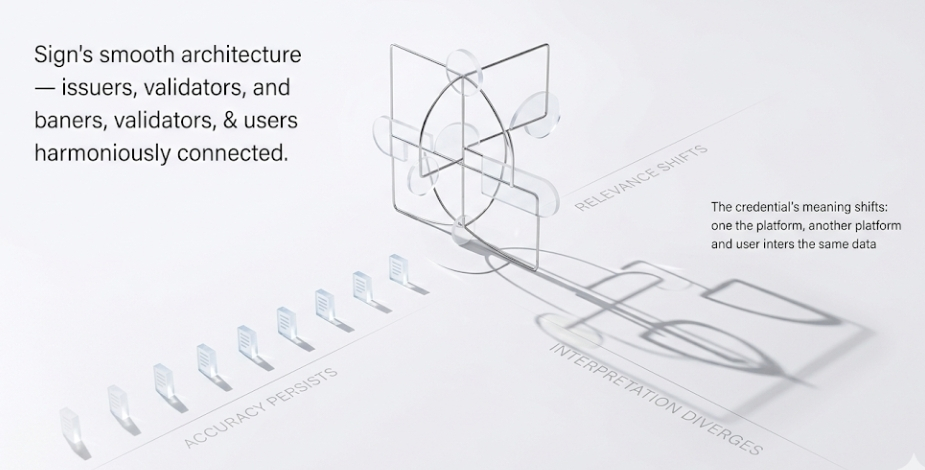

That’s the lens I end up using when I look at Sign. The system is structured cleanly—issuers define credentials, validators confirm them, users carry them forward—and nothing about that feels broken. In fact, it feels almost too smooth. And honestly, I get why that’s the goal. Reduce repetition, reduce friction, let trust move efficiently. But I can’t shake the thought that when systems become this smooth, they also become harder to interrogate.

Because Sign isn’t just verifying things—it’s allowing verified things to persist, to travel, to be reused in contexts that weren’t necessarily part of the original moment of issuance. That’s where I start to hesitate.

A credential is correct.

A validator confirms it.

A platform accepts it.

Everything lines up. And yet… the context has shifted slightly.

That shift is subtle, but it matters. And I keep circling back to how the system handles time, because time feels like the quiet variable here. Credentials are anchored to a moment, but their usage stretches far beyond it. A credential issued a year ago can still verify cleanly today, even if the thing it attested to has since changed. The system preserves accuracy, but not necessarily relevance.

And that’s probably where things get complicated.
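To make that gap a little more concrete for myself, here is a minimal sketch in Python. Everything in it is my own assumption for illustration: the `Credential` shape, the `signature_ok` flag standing in for a real cryptographic check, and the `is_valid` / `is_fresh` helpers are hypothetical and are not Sign’s actual schema or API. The only point is that "was this issued correctly?" and "is this still recent enough for what I’m about to do?" are two separate questions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Credential:
    # Hypothetical shape for illustration only, not Sign's real schema.
    subject: str
    claim: str
    issued_at: datetime
    signature_ok: bool  # stand-in for a real signature verification


def is_valid(cred: Credential) -> bool:
    """Accuracy: was this credential genuinely issued as stated?"""
    return cred.signature_ok


def is_fresh(cred: Credential, now: datetime, max_age: timedelta) -> bool:
    """Relevance: is it recent enough for this use? The verifier picks max_age."""
    return now - cred.issued_at <= max_age


issued = datetime(2023, 1, 1, tzinfo=timezone.utc)
cred = Credential("alice", "member", issued, signature_ok=True)
now = issued + timedelta(days=400)

print(is_valid(cred))                            # True
print(is_fresh(cred, now, timedelta(days=365)))  # False
```

The credential passes the first check and fails the second, and nothing in the first check would ever tell you so. Who chooses `max_age`, and whether anyone chooses it at all, is exactly the part that sits outside the verification step.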

I also find myself thinking about how different actors interpret the same piece of information. Sign standardizes verification, but it doesn’t standardize meaning. One platform might treat a credential as sufficient proof, another might see it as just one signal among many. And the user, somewhere in the middle, assumes consistency because the system looks consistent.

That assumption feels fragile.

And honestly, I get why Sign avoids enforcing interpretation. The moment it tries to define meaning, it becomes heavier, more rigid, harder to scale. But by staying neutral, it creates space for divergence. Not dramatic divergence, just small, accumulating differences.

A credential means one thing here.

Something slightly different there.

And over time, those differences don’t cancel out—they stack.
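A small sketch of what I mean, again in Python and again entirely hypothetical: the credential is a plain dictionary, and `platform_a` and `platform_b` are made-up acceptance policies, not anything Sign or any real platform defines. Both receive the same verified credential and the same surrounding evidence, and they still disagree.

```python
from typing import Callable, Dict

# Hypothetical credential; "verified" stands in for a successful validator check.
credential = {"claim": "member", "verified": True}


def platform_a(cred: dict, other_signals: int) -> bool:
    # Treats a verified credential as sufficient proof on its own.
    return cred["verified"]


def platform_b(cred: dict, other_signals: int) -> bool:
    # Treats the credential as one signal among many: needs two more.
    return cred["verified"] and other_signals >= 2


policies: Dict[str, Callable[[dict, int], bool]] = {
    "platform_a": platform_a,
    "platform_b": platform_b,
}

# Same credential, same single extra signal, different outcomes.
decisions = {name: policy(credential, 1) for name, policy in policies.items()}
print(decisions)  # {'platform_a': True, 'platform_b': False}
```

Verification returned the same answer in both cases. The divergence lives entirely in the policy functions, which is the layer the system deliberately leaves undefined.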

Then there’s the question of incentives, which never really stays in the background no matter how much a system tries to abstract it away. Validators are expected to act correctly, but “correctly” often narrows down to what is technically valid, not what is contextually appropriate. Issuers might gain influence simply by being widely recognized, which introduces a quiet hierarchy even in a system designed to be distributed.

It’s not centralization in the obvious sense.

It’s more like gravitational pull.

And I can’t ignore the user side of this either, because the system assumes a level of awareness that, realistically, most people won’t maintain consistently. Users reuse credentials because it’s easy. They trust that if something was accepted once, it will be accepted again. That behavior isn’t irrational; it’s efficient.

But efficiency has a way of flattening nuance.

A user reuses a credential without rethinking it.

A platform accepts it without recontextualizing it.

A validator confirms it without questioning its continued relevance.

And again, nothing breaks. But something feels slightly off.

I keep looping back to this idea that Sign doesn’t eliminate trust complexity—it compresses it into a more structured form. It organizes verification beautifully, but the meaning, the interpretation, the edge cases… those remain outside the system, interacting with it in unpredictable ways.

And maybe that’s inevitable. Maybe no system can fully contain something as fluid as trust.

But it leaves me watching a very specific boundary—the point where a credential stops being just a piece of verified data and starts shaping decisions in ways that weren’t originally intended.

Because that’s the moment when infrastructure quietly becomes influence.

And I’m not sure yet whether Sign stabilizes that influence… or simply makes it easier to carry forward.