The file looked settled before the argument had even started.

program_id. ruleset_version. amount. beneficiary_ref. eligibility_ref.

That last one kept pulling my eye back. Not the payout number. Not the vesting line. The reference. The little pointer off to somewhere earlier, somewhere cleaner-sounding, somewhere everybody in the room was already treating as done. Sign’s own TokenTable docs even show the shape of it that way: a distribution manifest with the program ID, ruleset version, beneficiary reference, amount, and an eligibility_ref pointing back to an attestation.
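To make that shape concrete, here is a minimal sketch of one manifest row. The five field names come from the list above; the class name, the types, and every value are my own placeholders, not Sign's actual schema.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class ManifestRow:
    """One row of a distribution manifest (illustrative only, not Sign's schema)."""
    program_id: str
    ruleset_version: str
    beneficiary_ref: str
    amount: Decimal
    eligibility_ref: str  # pointer to an attestation that lives somewhere else


# All values below are hypothetical.
row = ManifestRow(
    program_id="subsidy-2025",
    ruleset_version="v3",
    beneficiary_ref="ben-0042",
    amount=Decimal("150.00"),
    eligibility_ref="att-9f1c",
)

# Everything this row knows about eligibility is a single string.
print(row.eligibility_ref)
```

The row carries the amount in full but carries eligibility only as a pointer. Whatever was weighed, argued over, or approved to produce that attestation is not in the row at all.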

And that is the workflow that makes this theme feel real to me. Not an airdrop thread. Not “fairness in theory.” A boring release cycle. A welfare or subsidy distribution, or a grant program, where eligibility gets verified first, the evidence is anchored in Sign Protocol, the allocation table gets generated in TokenTable, funds move according to rules, and then the allocation plus execution evidence gets published. The docs present that sequence as a canonical flow. TokenTable sits there as the capital distribution layer between identity, money movement, and the evidence that says what counts.

So yes, the table is supposed to feel like the fix.

Sign is explicit about that too. TokenTable exists because the old way is spreadsheets, manual reconciliation, opaque beneficiary lists, one-off scripts, slow audits, duplicate payments, operational errors, weak accountability. The promise here is deterministic, auditable, programmatic distribution. Allocation tables define beneficiary identifiers, amounts, vesting, claim conditions, revocation rules. Once finalized, they are versioned and immutable. That surface is not accidental. It is meant to stop the human wobble.

But the part that starts feeling wrong is exactly where the table stops.

Because TokenTable also says, very clearly, that it is not responsible for identity issuance or cryptographic evidence. It delegates evidence, identity, and verification to Sign Protocol. Then one layer down, Sign Protocol defines the schemas, the field types, the validation rules, the versioning, and the attested statements themselves: this citizen is eligible, this entity passed compliance, this program followed rule version X, this payment was executed. That means the table is deterministic, yes. But deterministic over what? Over inputs that were already shaped as institutional judgments before the table ever saw them.

That is the seam I care about.

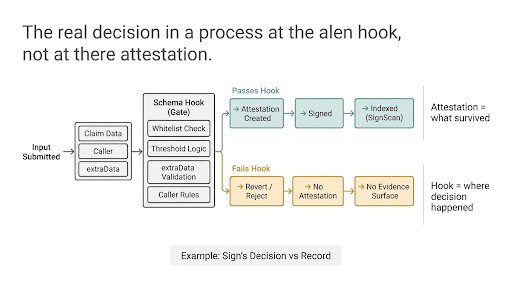

Because when somebody on ops asks why one beneficiary is out and another is in, the wrong answer is “the table decided.” The table did not decide anything interesting. It replayed. It enforced. It executed. The ugly part happened upstream, when somebody decided what “eligible” meant in the schema, what evidence source counted, what issuer had authority, what validation rule was strict enough, what review state was final enough to become attestable. By the time it lands in TokenTable, all that judgment has already been pressed flat into a reference field that looks as neutral as arithmetic. Sign’s own framing almost dares you to notice this: TokenTable ensures capital moves according to rules, not discretion, while Sign Protocol is the layer that makes facts inspectable, attributable, and reusable across systems.

And that is where the workflow gets mean.

Because the table can now be perfectly auditable and still carry a dispute nobody can solve at the table level.

You can replay the allocation logic deterministically. You can prove the finalized table matched the referenced eligibility proofs. You can show that governance actions were logged, that ruleset versioning existed, that the payout followed the published sequence. TokenTable is built to let auditors do exactly that. But that replay only tells you the distribution obeyed the evidence it consumed. It does not settle whether the evidence deserved to be treated as settled in the first place.
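A toy version of that replay shows the limit. This is a sketch under my own assumptions: the attestation store, the flat per-beneficiary amount, and the function names `table_digest`, `replay`, and `audit` are all hypothetical and are not TokenTable's API.

```python
import hashlib
import json

# Hypothetical attestation store: attestation id -> attested statement.
ATTESTATIONS = {
    "att-1": {"subject": "ben-1", "eligible": True, "schema": "eligibility/v2"},
    "att-2": {"subject": "ben-2", "eligible": True, "schema": "eligibility/v2"},
}

# Hypothetical finalized table that references those attestations.
TABLE = [
    {"beneficiary_ref": "ben-1", "amount": 100, "eligibility_ref": "att-1"},
    {"beneficiary_ref": "ben-2", "amount": 100, "eligibility_ref": "att-2"},
]


def table_digest(rows):
    """Hash a canonical JSON encoding of the table so two tables can be compared."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def replay(attestations, amount):
    """Rebuild the table from the attestations alone, in a fixed order."""
    return [
        {"beneficiary_ref": a["subject"], "amount": amount, "eligibility_ref": ref}
        for ref, a in sorted(attestations.items())
        if a["eligible"]
    ]


def audit(table, attestations, amount):
    """True when the table is exactly what the evidence implies."""
    return table_digest(replay(attestations, amount)) == table_digest(table)


# The finalized table matches the evidence it consumed.
assert audit(TABLE, ATTESTATIONS, 100)

# Flip one upstream judgment and regenerate: the new table audits cleanly too.
flipped = {**ATTESTATIONS, "att-2": {**ATTESTATIONS["att-2"], "eligible": False}}
assert audit(replay(flipped, 100), flipped, 100)

# The audit only catches a table that disagrees with its own evidence.
assert not audit(TABLE, flipped, 100)
```

Both versions of the world pass. The audit can tell whether a table is faithful to its attestations, and it has no opinion about which set of attestations should have existed.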

That creates at least three different consequences, and none of them is pretty.

First, the operational one. The team thinks they are debugging a distribution issue, but the distribution engine is not where the live uncertainty is anymore. The real fight has moved earlier, into attestation design, issuer trust, schema versioning, and approval workflow. So people keep reopening the table when the table is the one part behaving exactly as designed.

Second, the accountability one. A claimant or recipient sees an objective output and gets told the system was deterministic. Which is true in the narrowest possible way. But the narrow truth hides the wider one: the system became deterministic only after somebody upstream converted discretion into evidence. The challenge path gets harder because the controversial part no longer looks controversial. It looks referenced.

Third, the governance one. Sign keeps coming back to the idea that evidence establishes who approved what, under which authority, and according to which rules. That is powerful. It is also where the trust boundary quietly relocates. Not to the payout rail. Not to the table. To the earlier moment when a judgment becomes attestation-shaped enough for the rest of the system to stop arguing with it.

So I do think deterministic distribution is a fix.

I just do not think it fixes the part people are relieved to stop looking at.

What it really fixes is the downstream mess. The spreadsheet drift. The payout inconsistency. The reconciliation chaos. The visible human sloppiness. What it does not remove is judgment. It just forces judgment to happen earlier, inside Sign Protocol’s evidence layer, where it can arrive at the table wearing the mask of an input.

And once you see that, the clean table stops feeling like the whole story.

It starts feeling like the point where everybody else relaxes a little too early.