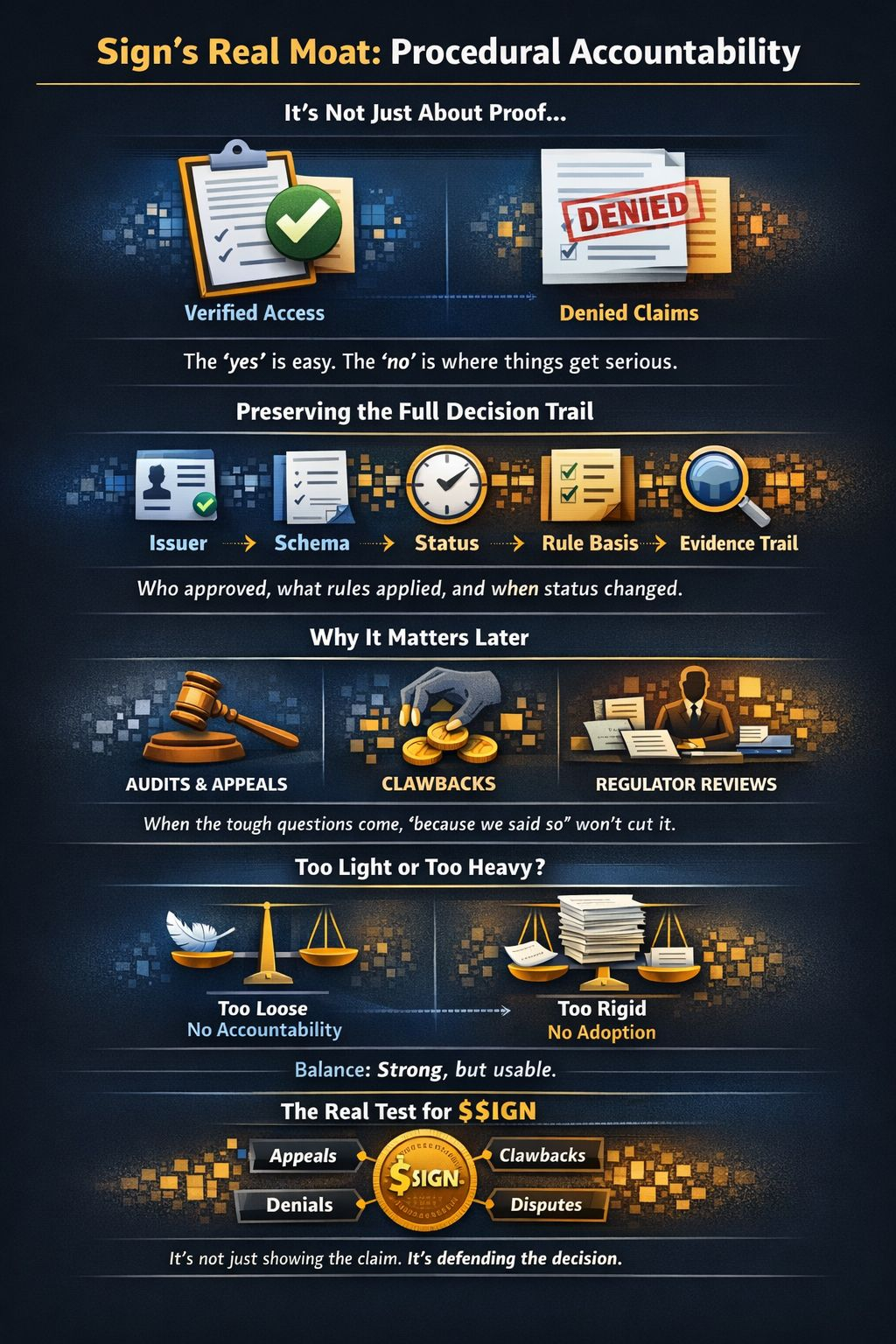

The more I looked at Sign, the less I thought the hard part was verification. Verification is the easy story. A claim gets issued. A credential gets checked. A wallet qualifies. Tokens move. That is the surface layer, and it sounds neat. But the harder question always shows up later. Why did this wallet qualify? Why was that one denied? Which issuer mattered? Which rule was applied? Was the attestation still active when the decision was made? Was the payout based on the right version of the criteria?

That is where Sign starts to look different.

I do not think the deeper product is just proof. I think it is procedure that can still be explained later.

That is a much stronger thing to build.

A lot of systems can show an output. Far fewer can defend the path that produced it. They can say yes, but they cannot cleanly explain why the yes happened. Or they can deny someone, but the logic behind the denial is scattered across dashboards, spreadsheets, side notes, and human memory. When the next conversation starts (an audit, an appeal, a clawback, a regulator review, a partner review), the result is there, but the process behind it is blurry.

The result exists.

The procedure is foggy.

That is expensive.

This is why Sign feels more serious to me than the usual “trust layer” description. The stack is not just useful because it helps verify claims. It is useful because it can preserve the parts of a decision that people usually lose. The issuer behind the claim. The schema that defined the claim. The status of the attestation at the moment it mattered. The rule basis that turned the claim into a yes or a no. The evidence trail that lets someone revisit the whole thing later.

That is procedural accountability.

And that phrase sounds dry until real money or real access is involved. Then it stops sounding dry very fast.

A schema is not just formatting. It fixes what kind of claim is being made, so later systems are not guessing at the meaning. An attestation is not just a signed fact. It ties the claim to an issuer, a time, and a structure. Status logic matters because a claim that was valid once may later be revoked, expired, or replaced. Queryability matters because evidence is useless if nobody can reconstruct the right trail when the pressure shows up. TokenTable matters because this whole stack only becomes important when the decision turns into action. Allocation, vesting, distribution, denial, release, clawback.
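To make the status point concrete, here is a minimal sketch in Python. It is not Sign's actual API or data model. Every name in it (`Schema`, `Attestation`, `status_at`, the field names, the status strings) is a hypothetical I made up for illustration. The only idea it tries to show is that "was this claim valid?" is really "was this claim valid at the moment the decision was made?"

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Schema:
    """Hypothetical schema: fixes what kind of claim is being made."""
    schema_id: str
    fields: tuple[str, ...]


@dataclass(frozen=True)
class Attestation:
    """Hypothetical attestation: ties a claim to an issuer, a time, and a schema."""
    schema_id: str
    issuer: str
    subject: str
    issued_at: datetime
    expires_at: Optional[datetime] = None
    revoked_at: Optional[datetime] = None


def status_at(att: Attestation, when: datetime) -> str:
    """Return the attestation's status as of `when`, not as of today."""
    if when < att.issued_at:
        return "not_yet_issued"
    if att.revoked_at is not None and when >= att.revoked_at:
        return "revoked"
    if att.expires_at is not None and when >= att.expires_at:
        return "expired"
    return "active"


UTC = timezone.utc
kyc_schema = Schema(schema_id="kyc-v1", fields=("wallet", "region"))
kyc = Attestation(
    schema_id=kyc_schema.schema_id,
    issuer="issuer-A",          # assumed issuer label
    subject="0xabc",            # assumed wallet identifier
    issued_at=datetime(2024, 1, 1, tzinfo=UTC),
    revoked_at=datetime(2024, 6, 1, tzinfo=UTC),
)

# The same attestation gives different answers depending on when you ask.
print(status_at(kyc, datetime(2024, 3, 1, tzinfo=UTC)))
print(status_at(kyc, datetime(2024, 7, 1, tzinfo=UTC)))
```

The design choice worth noticing is that the status function takes a timestamp. A system that only stores the current status cannot answer the later question of what the status was when the payout ran.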

That is the moment where procedure becomes real.

I think a lot of crypto writing misses this because it focuses on the first moment. Can the proof be made? Can the user be verified? Can the distribution run? Fine. But in serious systems, the first moment is not the hardest one.

The first decision says what happened.

The second asks whether it should have happened.

That second question is where weak systems get exposed.

A simple example makes it obvious. Imagine a token program using Sign-backed claims for KYC, region, contribution history, and eligibility checks. The first distribution runs smoothly. Then later a user appeals exclusion. Another wallet is flagged for clawback. A partner asks whether the rules were applied consistently. A regulator asks why one segment was included and another was blocked.

At that point, “the proof looked valid” is not enough.

The program needs the whole path. Which issuer signed the claim. Which schema shaped it. Which rule set was active. Whether status changed before execution. Whether the denial condition was clear. Whether the payout logic relied on the right inputs. If that trail is clean, the system looks strong. If that trail is broken, then even a correct decision starts looking weak because nobody can defend it properly.
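Here is a rough sketch of what "the whole path" could look like as a record. Again, this is my own illustration in Python, not how Sign or TokenTable stores anything. The names `Claim`, `DecisionRecord`, `decide`, the reason codes, and the rule set version string are all assumptions. The point is that the yes or no is saved together with the issuer, the schema, the rule version, and the claim status at decision time.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Claim:
    """Hypothetical claim as seen by the eligibility check."""
    issuer: str
    schema_id: str
    revoked_at: Optional[datetime] = None


@dataclass(frozen=True)
class DecisionRecord:
    """Everything needed to explain the outcome later."""
    wallet: str
    outcome: str              # "approved" or "denied"
    reason: str               # explicit reason code, also for denials
    issuer: str
    schema_id: str
    rule_set_version: str
    status_at_decision: str
    decided_at: datetime


def decide(
    wallet: str,
    claim: Claim,
    trusted_issuers: frozenset[str],
    rule_set_version: str,
    decided_at: datetime,
) -> DecisionRecord:
    """Apply a toy rule set and keep the path, not just the result."""
    revoked = claim.revoked_at is not None and claim.revoked_at <= decided_at
    status = "revoked" if revoked else "active"

    if claim.issuer not in trusted_issuers:
        outcome, reason = "denied", "issuer_not_trusted"
    elif status != "active":
        outcome, reason = "denied", f"claim_{status}"
    else:
        outcome, reason = "approved", "all_checks_passed"

    return DecisionRecord(
        wallet=wallet,
        outcome=outcome,
        reason=reason,
        issuer=claim.issuer,
        schema_id=claim.schema_id,
        rule_set_version=rule_set_version,
        status_at_decision=status,
        decided_at=decided_at,
    )


UTC = timezone.utc
trusted = frozenset({"issuer-A"})
run_time = datetime(2024, 7, 1, tzinfo=UTC)

ok = decide("0xabc", Claim("issuer-A", "kyc-v1"), trusted, "rules-v2", run_time)
late_revoke = Claim("issuer-A", "kyc-v1", revoked_at=datetime(2024, 6, 1, tzinfo=UTC))
denied = decide("0xdef", late_revoke, trusted, "rules-v2", run_time)

print(ok.outcome, ok.reason)
print(denied.outcome, denied.reason, denied.rule_set_version)
```

A denial in this sketch carries the same structure as an approval. That is the property that matters when an appeal or a clawback review shows up months later.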

That is why I think Sign’s moat may sit in preserving procedure, not just proving facts.

And this matters beyond approvals. It matters for rejections too. Honestly, maybe even more. Approvals are easy to celebrate. Rejections are where systems get tested. If a program blocks a claim, rejects a user, or denies a distribution, it needs more than a vague sense that “something failed.” It needs a defensible reason. Sign becomes more interesting if it can make those no decisions clearer, more structured, and harder to dispute later.

That is not just verification. That is accountable denial.

This is also where the design trade-off gets real. The more seriously you preserve procedure, the heavier the workflow can become. More structure. More status checks. More rule mapping. More evidence. More chances for builders to say, “this is too much, I will just do it manually or keep the logic off to the side.”

That is the risk.

If Sign stays too light, then the accountability story weakens. The outputs may still exist, but the decision trail gets thin. If Sign becomes too heavy, then builder adoption gets harder because the workflow starts feeling bureaucratic. So the product has to sit in a narrow space where procedure is preserved well enough to survive challenge, but not so heavily that teams avoid the rail altogether.

That is not a small balancing act.

It also shapes incentives in a very specific way. Builders want reusable decision logic because rebuilding trust checks every time is painful. Programs want fewer disputes and cleaner audits because repeated distributions get messy fast. Issuers want their claims interpreted correctly, not stretched across workflows with sloppy reasoning. Users want fewer arbitrary outcomes, even if they do not describe it that way. Institutions want something harder than convenience. They want results they can still defend later.

That is a stickier demand than hype.

And it changes how I think about TokenTable too. The shallow read is that it helps distribute tokens more cleanly. Sure. The deeper read is that TokenTable is where accountable procedure meets capital. If the underlying logic is preserved well, then payouts, denials, reviews, and clawbacks become easier to defend. If that logic is weak, then the distribution layer becomes a fast way to turn fragile reasoning into irreversible consequences.

That is a dangerous place to be.

So the value of TokenTable is not just smoother execution. It is whether execution can survive argument afterward.

That point matters for $SIGN as well. The token does not become stronger just because Sign touches verification or token distribution. That is too loose. The stronger case is that programs may keep returning to the same rail because they need repeated access to preserved decision logic, status-aware claims, reconstructable evidence, and defensible execution. If Sign becomes the place teams cannot skip when the next audit, appeal, denial review, or payout dispute shows up, then it starts to feel operationally necessary. If the real accountability layer still lives outside the stack, then the product may be useful while the token remains easier to bypass.

Useful is not enough.

The real test is whether the workflow keeps coming back to Sign when things get messy.

That is what I would watch. Not just launches. Not just new claims. I would watch repeated programs. Appeals. Clawbacks. Denials that need to be justified. Distributions that need to be defended. I would watch whether teams use status changes as real operating signals instead of passive metadata. I would watch whether Sign looks more valuable under scrutiny than it does in the clean first run.

That is where this thesis either gets stronger or breaks.

Because I think the market still reads Sign a little too politely. It is not just helping systems verify. It is asking systems to preserve enough procedure that later scrutiny does not tear confidence apart.

That is harder than proof.

Anyone can show that a claim existed.

The stronger system is the one that can still explain the yes, the no, and the payout when the argument starts later.

@SignOfficial #signdigitalsovereigninfra $SIGN