I used to believe that capital inefficiency was mostly a distribution problem.

It felt logical. If funds weren’t reaching the right people, the issue had to be routing, and the fix had to be better targeting, better tooling, better coordination. In crypto, this belief translated into chasing new primitives that promised fairer distribution: airdrops, grants, incentive programs. Each cycle introduced a more refined mechanism.

But over time, something started to feel off.

Despite better tools, the outcomes didn’t improve proportionally. The same patterns repeated: duplication, leakage, short-term participation. Capital moved, but it didn’t always settle where it was intended. And more importantly, it didn’t create lasting behavior.

That’s when I began to question whether the problem was ever distribution to begin with.

Looking closer, the issue felt more structural than operational.

Many systems that claimed to distribute capital efficiently still relied on weak identity assumptions. Eligibility was often inferred, not proven. Participation could be replicated. Compliance existed, but mostly as an external process rather than an embedded one.

There was also a subtle form of centralization. Not in custody, but in verification. Decisions about who qualified and why were often opaque, platform-dependent, and difficult to audit across contexts.

And perhaps most telling, usage didn’t persist.

Ideas sounded important, even necessary. But they didn’t translate into repeated behavior. Users engaged when incentives were high, then disappeared. Systems weren’t retaining participation because they weren’t enforcing structure.

It wasn’t just a capital problem. It was a trust problem.

This is where my evaluation framework began to shift.

I stopped focusing on how capital was distributed and started paying attention to how systems verified participation. The question changed from “Where does the money go?” to “What proves that it should go there?”

That shift led me toward a different lens:

Systems should work quietly in the background, enforcing rules without requiring constant user awareness.

The strongest systems don’t ask users to prove themselves repeatedly. They embed verification into the process itself.

Payments do this well. When a transaction clears, no one questions the underlying validation steps. It’s assumed, because it’s built into the system.

Capital systems, I realized, rarely operate that way.

That’s where the idea of a “new capital stack” began to make sense to me.

Not as a new distribution mechanism, but as a restructuring of how capital, identity, and trust interact.

This is the context in which I started examining @SignOfficial and the broader $SIGN Token ecosystem.

At first, it didn’t feel radically different. Concepts like attestations, schemas, and verifiable records exist across Web3. But what stood out wasn’t the individual components; it was how they were positioned.

Not as features, but as infrastructure.

The core question that emerged was simple:

Can capital systems function reliably without a shared layer of verifiable identity?

Because without identity, distribution becomes guesswork. And without verifiable evidence, trust becomes contextual, dependent on the platform, the moment, or the narrative.

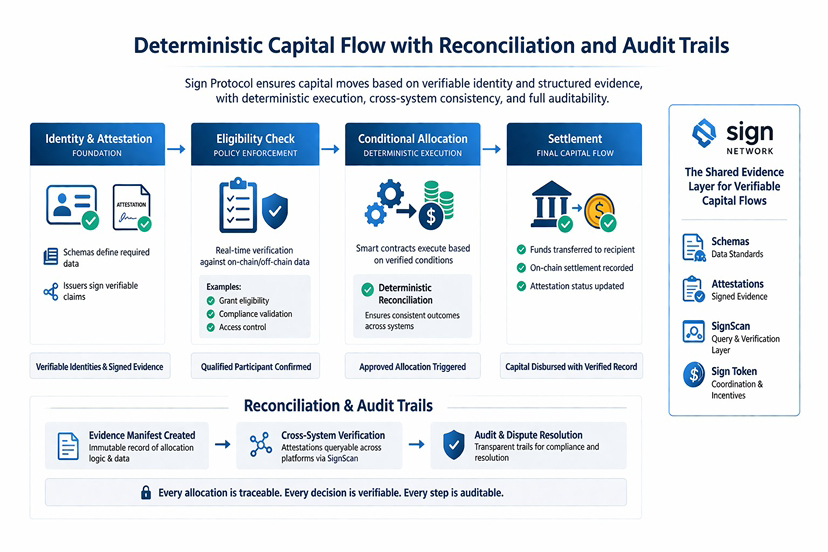

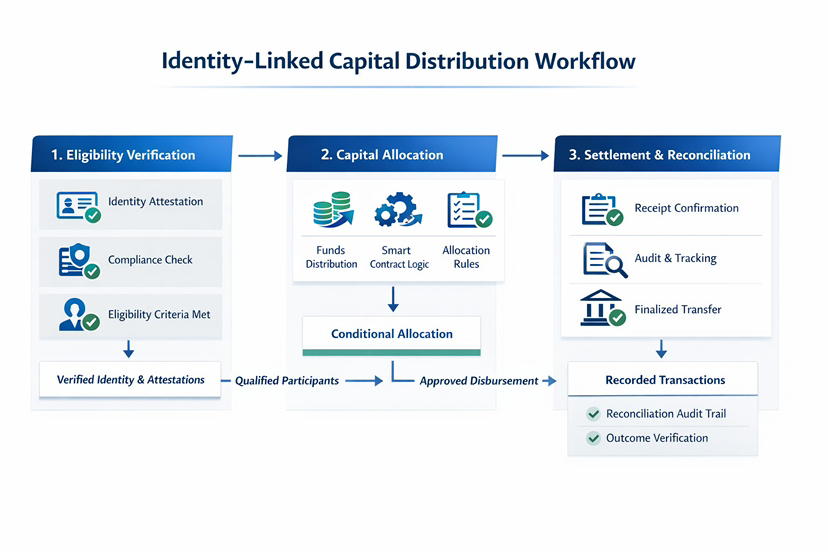

#SignDigitalSovereignInfra approaches this differently by structuring identity as an evidence layer.

Schemas define how data is standardized, acting as shared formats that allow different systems to interpret information consistently. Attestations act as signed records that encode actions, approvals, and eligibility, where the credibility of issuers and the reliance of verifiers shape trust across systems. Together, they create a system where capital flows are not just executed, but justified, and where the same verified data can be reused across applications without duplication.
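To make the schema-and-attestation relationship concrete, here is a minimal Python sketch. It is not Sign Protocol’s API: the `Schema` and `Attestation` classes, the `issue` and `verify` helpers, and the field names are all hypothetical, and an HMAC with a shared key stands in for the public-key signatures a real system would use.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Schema:
    """Hypothetical shared format: an id plus the field names a record must carry."""
    schema_id: str
    fields: tuple


@dataclass(frozen=True)
class Attestation:
    """Hypothetical signed record: who says what about whom, under which schema."""
    schema_id: str
    issuer: str
    subject: str
    data: dict
    signature: str


def _payload(schema_id: str, issuer: str, subject: str, data: dict) -> bytes:
    # Canonical serialization so issuer and verifier hash identical bytes.
    body = {"schema": schema_id, "issuer": issuer, "subject": subject, "data": data}
    return json.dumps(body, sort_keys=True).encode()


def issue(schema: Schema, issuer: str, key: bytes, subject: str, data: dict) -> Attestation:
    missing = set(schema.fields) - set(data)
    if missing:
        raise ValueError(f"data is missing schema fields: {sorted(missing)}")
    # Assumption: HMAC stands in for a real public-key signature.
    sig = hmac.new(key, _payload(schema.schema_id, issuer, subject, data), hashlib.sha256).hexdigest()
    return Attestation(schema.schema_id, issuer, subject, data, sig)


def verify(att: Attestation, schema: Schema, key: bytes) -> bool:
    # The record must match the schema and carry a signature over its exact contents.
    if att.schema_id != schema.schema_id or set(schema.fields) - set(att.data):
        return False
    expected = hmac.new(key, _payload(att.schema_id, att.issuer, att.subject, att.data), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, att.signature)
```

The point of the sketch is the division of labor: the schema fixes what a record must contain, and the signature ties that content to an issuer, so any verifier holding the same schema can check the record without re-collecting the underlying data.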

This distinction matters.

It shifts capital from being distributed based on assumptions to being allocated based on verifiable conditions.

What makes this more practical is how the system handles data.

Not everything is forced on-chain. Some attestations exist fully on-chain for transparency. Others are stored off-chain with verifiable anchors, allowing for scalability and privacy. Hybrid models combine both, depending on the use case.
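One common way to build a “verifiable anchor” is to keep the full record off-chain and publish only its hash. The Python sketch below illustrates that general pattern under stated assumptions: the in-memory `off_chain_store` and `on_chain_anchors` are stand-ins for real storage and a real ledger, every name is hypothetical, and I am not claiming this is how Sign Protocol implements it.

```python
import hashlib
import json

# Stand-ins for real infrastructure (assumptions for illustration only).
off_chain_store = {}      # record_id -> full record, kept private
on_chain_anchors = set()  # public set of record digests


def digest(record: dict) -> str:
    # Canonical JSON so the same record always produces the same hash.
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


def store_with_anchor(record_id: str, record: dict) -> str:
    """Keep the record off-chain and publish only its digest."""
    off_chain_store[record_id] = record
    anchor = digest(record)
    on_chain_anchors.add(anchor)
    return anchor


def verify_against_anchor(record_id: str, anchor: str) -> bool:
    """The off-chain record is trusted only if it still hashes to a published anchor."""
    record = off_chain_store.get(record_id)
    if record is None or anchor not in on_chain_anchors:
        return False
    return digest(record) == anchor
```

The public side learns nothing about the record beyond its digest, yet any later change to the off-chain copy is detectable, which is the visibility-versus-privacy trade the hybrid model is aiming for.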

This flexibility reflects a more realistic understanding of how systems operate.

In traditional finance, not every piece of data is public. But every decision is traceable. That balance between visibility and privacy is difficult to achieve, but necessary.

Sign Protocol seems to be designing for that balance from the start.

There’s also an important shift in how verification is accessed.

Through query layers like SignScan, attestations are not just stored; they are retrievable across systems. This allows applications to integrate verification directly into their logic, enabling real-time decision-making based on structured evidence.

Eligibility checks, compliance validation, access control: these are no longer external processes. They are enforced within the system itself, with deterministic reconciliation ensuring outcomes remain consistent across environments, and verifiable evidence supporting audits and dispute resolution.
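Here is a rough Python sketch of what “enforced within the system itself” could look like for a distribution. Everything in it is an assumption made for illustration: the `Attestation` shape, the `revoked` flag, the `TRUSTED_ISSUERS` set, and the in-memory list that stands in for a query layer. It does not use SignScan’s actual interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    """Hypothetical record shape; the `revoked` flag is an assumption."""
    schema_id: str
    issuer: str
    subject: str
    revoked: bool = False


# Assumption: the application decides which issuers it relies on.
TRUSTED_ISSUERS = {"grants-committee"}


def find_attestations(index, subject, schema_id):
    # Stand-in for a query layer: filter an in-memory list of attestations.
    return [a for a in index if a.subject == subject and a.schema_id == schema_id]


def is_eligible(index, subject, schema_id):
    # Eligible only with a live attestation from an issuer the application trusts.
    return any(
        a.issuer in TRUSTED_ISSUERS and not a.revoked
        for a in find_attestations(index, subject, schema_id)
    )


def distribute(index, recipients, schema_id, amount_each):
    # The payout itself depends on the evidence: no attestation, no allocation.
    return {r: amount_each for r in recipients if is_eligible(index, r, schema_id)}
```

Because the result is a pure function of the attestation set and the recipient list, rerunning it over the same evidence produces the same allocation, which is roughly what deterministic reconciliation asks for.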

At that point, identity is no longer something users manage. It becomes something systems reference.

This also reframes the role of the Sign Token.

Rather than acting as a speculative layer, it functions as a coordination mechanism. It aligns incentives across participants (issuers, verifiers, and developers), supporting the integrity and reliability of the evidence layer.

In a system where trust depends on consistent verification, aligned incentives are not optional. They are structural.

Looking at this more broadly, the relevance extends beyond crypto.

We’re entering a period where trust is increasingly fragmented. Online systems either expose too much or verify too little. Users are asked to provide data repeatedly, yet still face uncertainty about outcomes.

At the same time, digital infrastructure is expanding in regions where formal trust systems are still evolving. In these environments, verifiable identity and traceable capital flows are not just useful; they’re foundational.

This is where the idea of a programmable capital layer starts to feel less abstract.

It becomes a way to structure coordination at scale.

But even if something makes sense structurally, adoption isn’t guaranteed.

Markets often blur that distinction.

Attention tends to follow narratives: new primitives, new tokens, new systems. But usage follows necessity. And necessity only emerges when systems become embedded into workflows.

Right now, most capital systems, even in crypto, are still optional.

They can be used, but they’re not required.

This is where the real challenge lies.

For a system like Sign Protocol to succeed, it has to cross a usage threshold.

Developers need to integrate attestations into core application logic. Identity must become a prerequisite for participation, not an add-on. Users need to interact with the system repeatedly, not because they’re incentivized temporarily, but because the system depends on it.

Without that, even well-designed systems struggle to sustain themselves.

There’s also a deeper tension at play.

Technology can structure trust, but it doesn’t create it automatically.

People respond to systems based on how they feel to use. If identity systems feel intrusive, they’re avoided. If they feel unnecessary, they’re ignored. If they feel natural (embedded, unobtrusive), they’re adopted without resistance.

That balance is difficult.

Too much visibility creates friction. Too little reduces meaning.

The systems that succeed will likely be the ones users don’t notice, but rely on consistently.

So what would build real conviction for me?

Not announcements or isolated integrations.

I’d look for applications where removing the identity layer breaks functionality. Systems where attestations are required for access, for participation, for settlement. Patterns of repeated use across users, across time.

I’d also watch validator and participant behavior. Are attestations being issued and verified consistently? Are systems depending on them, or just displaying them?

Because that’s the difference between signal and noise.

At first, the idea of a new capital stack felt like an extension of existing systems: more efficient, more programmable, more transparent.

But upon reflection, it feels more fundamental than that.

It’s not just about moving capital better. It’s about proving why capital moves at all.

And in that sense, the real shift isn’t technical; it’s structural.

Because the difference between an idea that sounds necessary and infrastructure that becomes necessary is repetition.