There’s a specific kind of frustration that doesn’t explode—it lingers. You feel it when you’ve already proven who you are, again and again, and still get asked for “just one more verification.” Another upload. Another delay. Another quiet implication that trust is provisional, temporary, and somehow always incomplete.

I’ve watched this happen in small, almost forgettable moments. A developer friend waiting three days for payment because a platform needed to “review” work that was already delivered and approved in spirit, just not in system logic. A hiring manager scrolling through polished profiles, knowing full well that half of what matters can’t actually be verified without back-and-forth emails. Even something as basic as proving you attended a university turns into a slow dance between databases that don’t talk to each other.

It’s strange, if you step back. We built systems that can move money across continents in seconds, but we still hesitate when it comes to confirming whether someone did the thing they said they did. Trust, in digital spaces, feels oddly fragile—like it resets every time you switch platforms.

SIGN enters here, not loudly, not as some grand reinvention, but more like an attempt to fix a leak that’s been ignored for too long. And I’ll admit, I didn’t take it seriously at first. It sounded like one of those infrastructural ideas that promise elegance but deliver complexity. Another layer, another abstraction. But the more I sat with it, the more it started to feel less like an addition and more like a correction.

Because what SIGN is really trying to do is embarrassingly simple in concept: if something can be proven, why should it ever need to be re-proven? And if that proof is valid, why should value—payment, access, recognition—be delayed?

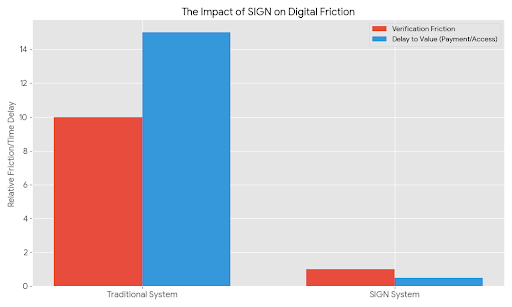

The gap between those two questions is where most of our digital friction lives.

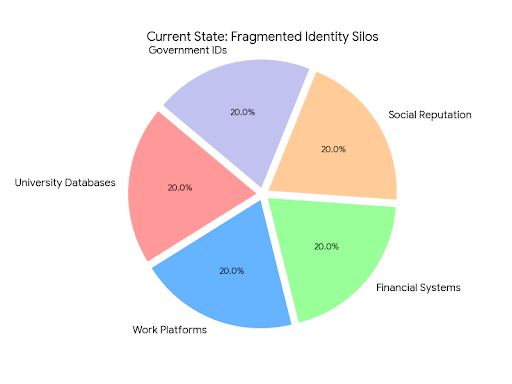

Right now, identity is scattered in pieces. Your degree lives somewhere formal and sealed off. Your work history floats on platforms that benefit from keeping it there. Your reputation is built in fragments, each one context-specific, each one slightly incompatible with the next. It’s like carrying around a dozen passports, none of which are fully recognized everywhere.

And so every system rebuilds trust from scratch. Slowly. Redundantly.

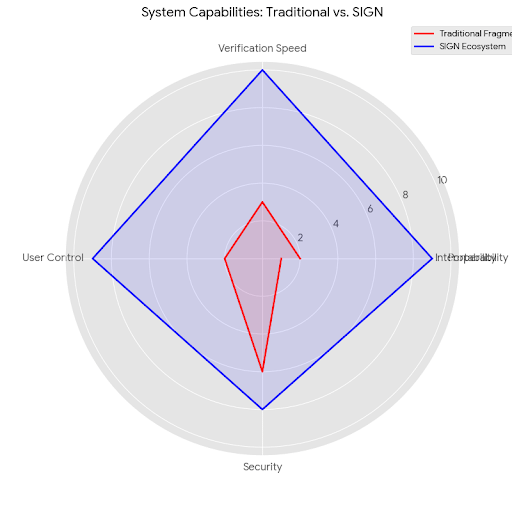

SIGN leans into a different assumption: that trust, once established properly, should be portable. Not in a vague, aspirational sense, but in a way that is technically verifiable and difficult to fake. That’s where the idea of verifiable credentials comes in, though even that phrase feels colder than the reality of it.

Imagine finishing a project and instead of just receiving a payment (eventually), you receive a kind of proof—something that confirms, in a way that can’t be quietly altered or exaggerated, that you did this work, for this client, under these conditions. Not a screenshot, not a testimonial buried in an email thread, but a piece of data that holds its integrity no matter where it goes next.

Now imagine that this proof doesn’t just sit there. It triggers something. Payment, yes—but also reputation, access, future opportunities. It becomes active.

That’s the part that’s easy to overlook. SIGN isn’t just about verifying things faster. It’s about collapsing the distance between verification and consequence. You prove something, and the system responds immediately. No human bottleneck, no waiting for someone to click “approve,” no ambiguity about whether you’ll actually receive what you’ve earned.
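To make "verification triggers consequence" a little more concrete, here is a minimal Python sketch of the loop described above. A client issues a tamper-evident record of completed work, and a payment holder releases funds the instant a valid record arrives. This is not SIGN's actual API or data format. The function names, the `Escrow` class, and the shared key are all hypothetical, and HMAC stands in for the public-key signatures (such as Ed25519 or ECDSA) a real attestation system would use so that anyone could verify a record without holding a secret.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, field

# HYPOTHETICAL shared issuer key. Real systems use public-key signatures;
# HMAC is used here only because it ships with Python's standard library.
ISSUER_KEY = b"client-signing-key"


def canonical(claim: dict) -> bytes:
    """Serialize a claim deterministically so identical data always hashes identically."""
    return json.dumps(claim, sort_keys=True, separators=(",", ":")).encode()


def issue(claim: dict, key: bytes = ISSUER_KEY) -> dict:
    """The issuer (here, the client) tags the claim; any later edit invalidates the tag."""
    tag = hmac.new(key, canonical(claim), hashlib.sha256).hexdigest()
    return {"claim": claim, "tag": tag}


def verify(credential: dict, key: bytes = ISSUER_KEY) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(key, canonical(credential["claim"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, credential["tag"])


@dataclass
class Escrow:
    """HYPOTHETICAL escrow that pays out as soon as a valid proof of work arrives."""
    balances: dict = field(default_factory=dict)
    held: dict = field(default_factory=dict)  # project id -> amount awaiting release

    def on_credential(self, credential: dict) -> bool:
        claim = credential["claim"]
        if not verify(credential) or claim["project"] not in self.held:
            return False  # invalid, unknown, or already paid: nothing is released
        amount = self.held.pop(claim["project"])  # pop prevents paying twice
        worker = claim["worker"]
        self.balances[worker] = self.balances.get(worker, 0) + amount
        return True


# Usage: a forged record is rejected, the genuine one releases payment at once.
escrow = Escrow(held={"site-redesign": 1200})
cred = issue({"worker": "alice", "client": "acme", "project": "site-redesign", "status": "approved"})
forged = {"claim": {**cred["claim"], "worker": "mallory"}, "tag": cred["tag"]}
print(escrow.on_credential(forged))  # False
print(escrow.on_credential(cred))    # True
print(escrow.balances)               # {'alice': 1200}
```

The point of the sketch is the absence of a review step: once `verify` succeeds, the release happens in the same call. Whether a real deployment should work that way for every kind of payment is exactly the judgment call the rest of this piece wrestles with.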

There’s a quiet radicalism in that.

I keep thinking about how much of modern work is built on delayed trust. You do the work first, then hope the system catches up. Sometimes it does. Sometimes it doesn’t. Entire industries—freelancing, remote contracting, even parts of corporate hiring—operate on this lag. It’s inefficient, but more than that, it’s psychologically draining. You’re always slightly unsure.

SIGN seems to be asking: what if that uncertainty isn’t necessary?

Of course, there’s a temptation to oversimplify this into a neat story about efficiency. Faster verification, instant payments, smoother systems. And yes, all of that matters. But there’s something deeper here that’s harder to articulate without sounding a bit abstract.

It’s about how trust is experienced.

Right now, trust online is conditional. It depends on the platform you’re on, the policies they enforce, the intermediaries they rely on. You don’t really own it. You borrow it, temporarily, from systems that can revoke or ignore it at any time.

With SIGN, the idea—at least in theory—is that trust becomes something closer to a personal asset. Not in a financialized, speculative sense, but in a structural one. Your credentials, your proofs, your verified actions—they move with you. They don’t belong to a single platform’s database. They exist in a way that other systems can recognize without needing to reinterpret them.

That sounds clean. Maybe too clean.

Because the moment you start thinking about real-world adoption, the edges get messy. Institutions are slow to change, and not without reason. A university doesn’t just decide one day to issue credentials in a new format because it feels more efficient. There are standards, reputations, bureaucracies. The same goes for companies, platforms, governments. Trust, ironically, is something they guard very carefully.

And then there’s the question of who gets to issue these credentials in the first place. If everything becomes verifiable, the authority behind that verification matters even more. A credential is only as strong as its issuer. So you end up with a new layer of trust—trust in the entities that define trust. It’s not a contradiction, exactly, but it’s not trivial either.

Privacy sits uncomfortably in the middle of all this. The idea of having a set of verifiable credentials that represent your identity, your work, your achievements—it’s powerful, but also slightly unsettling. There’s a fine line between transparency and exposure. People don’t want to reveal everything, all the time, to everyone.

So the system has to allow for nuance. Selective disclosure. The ability to prove something without revealing more than necessary. It sounds elegant in theory, but in practice, it requires careful design. Otherwise, you risk creating a world where verification becomes invasive rather than liberating.
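One common way to get "prove something without revealing more than necessary" is salted hash commitments, the general approach behind formats such as SD-JWT. The issuer commits to a hash of every attribute, and the holder later reveals only the attributes they choose. The minimal Python sketch below illustrates that technique under stated assumptions. It is not how SIGN itself implements disclosure. All names are hypothetical, and HMAC again stands in for a real public-key signature.

```python
import hashlib
import hmac
import json
import secrets
from typing import Optional

# HYPOTHETICAL issuer key; HMAC stands in for a real public-key signature.
ISSUER_KEY = b"university-signing-key"


def digest(salt: str, name: str, value) -> str:
    """Hash one attribute with a random salt so hidden values can't be brute-forced."""
    return hashlib.sha256(json.dumps([salt, name, value]).encode()).hexdigest()


def issue(attributes: dict, key: bytes = ISSUER_KEY):
    """Commit to every attribute. The holder keeps the (salt, value) pairs privately."""
    disclosures = {name: (secrets.token_hex(16), value) for name, value in attributes.items()}
    digests = sorted(digest(salt, name, value) for name, (salt, value) in disclosures.items())
    # Each digest is 64 hex chars, so plain concatenation is unambiguous.
    tag = hmac.new(key, "".join(digests).encode(), hashlib.sha256).hexdigest()
    return {"digests": digests, "tag": tag}, disclosures


def present(credential: dict, disclosures: dict, reveal: list) -> dict:
    """The holder picks which attributes to reveal; the rest stay opaque hashes."""
    return {"credential": credential, "revealed": {n: disclosures[n] for n in reveal}}


def verify(presentation: dict, key: bytes = ISSUER_KEY) -> Optional[dict]:
    """Return the revealed attributes if everything checks out, else None."""
    cred = presentation["credential"]
    expected = hmac.new(key, "".join(cred["digests"]).encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, cred["tag"]):
        return None  # the committed digests were tampered with
    result = {}
    for name, (salt, value) in presentation["revealed"].items():
        if digest(salt, name, value) not in cred["digests"]:
            return None  # revealed value doesn't match what the issuer committed to
        result[name] = value
    return result


# Usage: prove the degree without exposing name or GPA.
credential, disclosures = issue({"name": "Alice", "degree": "BSc Computer Science", "gpa": 3.4})
print(verify(present(credential, disclosures, reveal=["degree"])))  # {'degree': 'BSc Computer Science'}

lie = present(credential, disclosures, reveal=["degree"])
lie["revealed"]["degree"] = (disclosures["degree"][0], "PhD Physics")
print(verify(lie))  # None
```

The salts matter: without them, a verifier could simply hash guesses like every plausible GPA and match them against the digests. Even so, the verifier still learns how many attributes exist, which is the kind of leak that "careful design" has to account for.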

And yet, despite these complications, there’s something compelling about the direction this points to. Not because it promises perfection, but because it addresses a problem that most of us have quietly accepted as inevitable.

The more I think about it, the more it feels like SIGN is less about technology and more about removing a kind of background noise. The constant low-level friction of proving, re-proving, waiting, doubting. If that noise disappears, even partially, the effect could be disproportionate.

You wouldn’t notice it in a dramatic way. There wouldn’t be a moment where everything suddenly feels different. It would show up in smaller shifts. Payments arriving when they should. Credentials being accepted without hesitation. Opportunities opening up a little faster because the system doesn’t need to pause and verify what’s already been proven.

And maybe that’s the point. Infrastructure rarely announces itself. It works quietly, or it doesn’t work at all.

There’s also a part of me that remains slightly skeptical, not of the idea itself, but of how neatly it can be implemented in a world that resists neatness. Human systems are messy. People make exceptions, bend rules, rely on intuition. Not everything can—or should—be reduced to verifiable data points.

But then again, not everything needs to be.

Maybe the role of something like SIGN isn’t to replace human judgment, but to handle the parts that don’t require it. The repetitive, mechanical aspects of trust. The things we verify not because they’re meaningful, but because the system demands it.

If those pieces can be automated, reliably, it frees up space for the more complex, human side of trust—the kind that involves interpretation, context, and, occasionally, risk.

I keep coming back to that freelancer waiting for payment. Not because it’s a dramatic story, but because it’s so ordinary. Multiply that moment across every freelance gig, every remote contract, every hiring process that runs on delayed trust.

And then you start to see why something like SIGN, despite its technical framing, feels less like an innovation and more like a long-overdue adjustment.

Trust shouldn’t be this hard to move around.