@SignOfficial #SignDigitalSovereignInfra

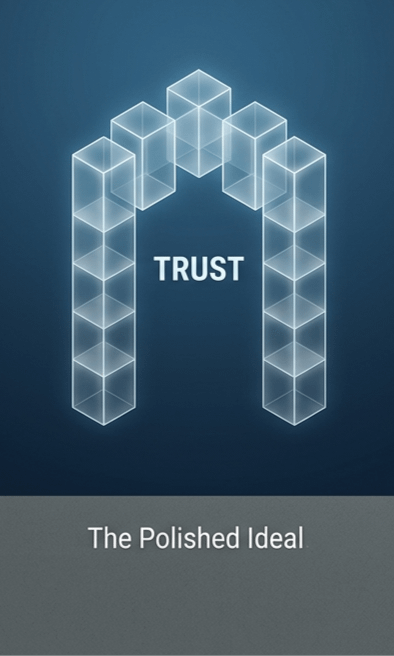

I’ve spent enough time watching systems rise and fall to stop being impressed by how they sound in the beginning. Most ideas arrive polished, almost inevitable in their logic, as if the world has simply been waiting for them to appear. They promise clarity where there was confusion, fairness where there was imbalance, trust where there was doubt. And for a moment, it’s easy to believe them.

But ideas don’t live where people do. Systems do.

And systems don’t survive because they sound right; they survive because they keep working when things stop being ideal.

I’ve noticed that trust is often spoken about as if it were a mood, something you can generate with the right message or the right design. But in practice, it behaves more like a record than a feeling. It accumulates slowly, unevenly, sometimes invisibly. It has to be maintained, checked, and sometimes repaired. It doesn’t care how elegant the underlying idea is. It only reflects what actually happened, over time, under pressure.

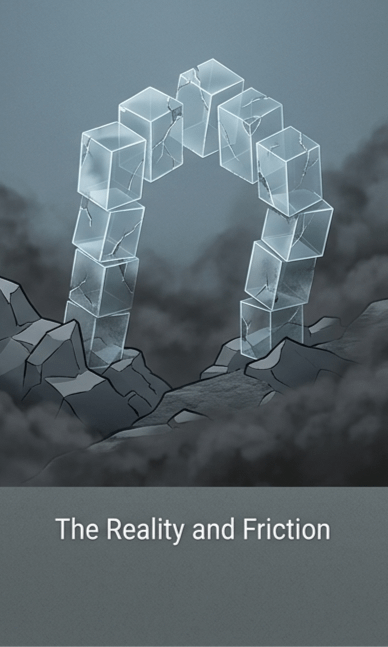

That’s where most things begin to strain.

Identity, for example, always seems straightforward at a distance. It feels like something that can be defined cleanly, assigned, and verified without much resistance. But when it meets reality, it becomes one of the most persistent sources of friction. Not because people are unwilling, but because identity is rarely singular or stable. It shifts across contexts, carries history, and resists being reduced without losing something important. Systems that ignore this tend to either overcompensate or collapse under their own assumptions.

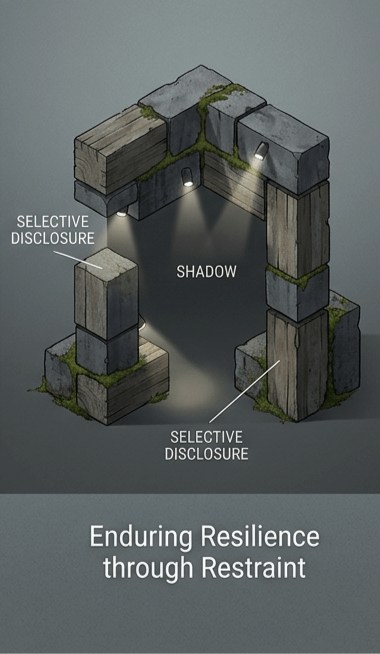

I’ve seen the same tension play out between visibility and privacy. There’s a recurring belief that more transparency will resolve uncertainty, that if everything is visible, trust will naturally follow. But complete visibility often creates its own distortions: people perform, data overwhelms, and meaning gets diluted. On the other end, total concealment erodes confidence just as quickly. Without any visibility, there’s nothing to evaluate, nothing to verify.

Somewhere in between, there’s a quieter approach that rarely gets attention. Not full exposure, not full concealment, but something more deliberate. Selective disclosure. Intentional sharing. Systems that allow information to be revealed when necessary, and withheld when it isn’t, without breaking the underlying trust. It sounds simple when stated like that, but in practice, it demands a level of discipline that most designs don’t anticipate.

Because design is the easy part.

Implementation is where everything becomes uneven.

What looks clean in theory often becomes tangled when it encounters real constraints: human behavior, institutional limits, operational realities. People don’t always act predictably. Organizations don’t always move coherently. Processes don’t always scale the way they’re supposed to. And slowly, the system starts adapting, sometimes in ways that drift far from its original intent.

I’ve come to think that this is where the real work happens—not in defining what a system should be, but in observing what it becomes when it’s used, misused, stretched, and relied upon.

That’s why the systems that endure tend to feel unremarkable at first. They don’t announce themselves loudly. They don’t rely on constant attention to justify their existence. They just keep functioning, quietly resolving problems that don’t make headlines. Over time, they become part of the background, trusted not because they claim to be, but because they’ve been tested enough times without failing.

SIGN, at least in how I think about it, sits somewhere in this space of tension. Not as an idea that tries to redefine everything at once, but as something that has to contend with the same realities as any other system: trust that must be earned, identity that resists simplification, and verification that has to hold up under scrutiny, not just intention.

And maybe that’s the part that matters more than anything else.

Not whether something feels new, or even whether it feels right at first glance, but whether it can continue to function when the noise fades, when expectations settle, when the system is left to stand on its own.

I’m still not sure what separates the things that last from the ones that don’t. It doesn’t seem to be ambition, or clarity, or even usefulness in the short term. If anything, those can be misleading.

What I do notice, though, is that the systems that endure rarely try to resolve everything at once. They leave space—for adjustment, for uncertainty, for the parts of reality that refuse to be neatly defined.

And I find myself wondering if that restraint, that willingness to remain incomplete, is not a weakness but the only way something can stay true over time.

Sometimes I think trust doesn’t collapse all at once—it erodes in places no one thought to look.