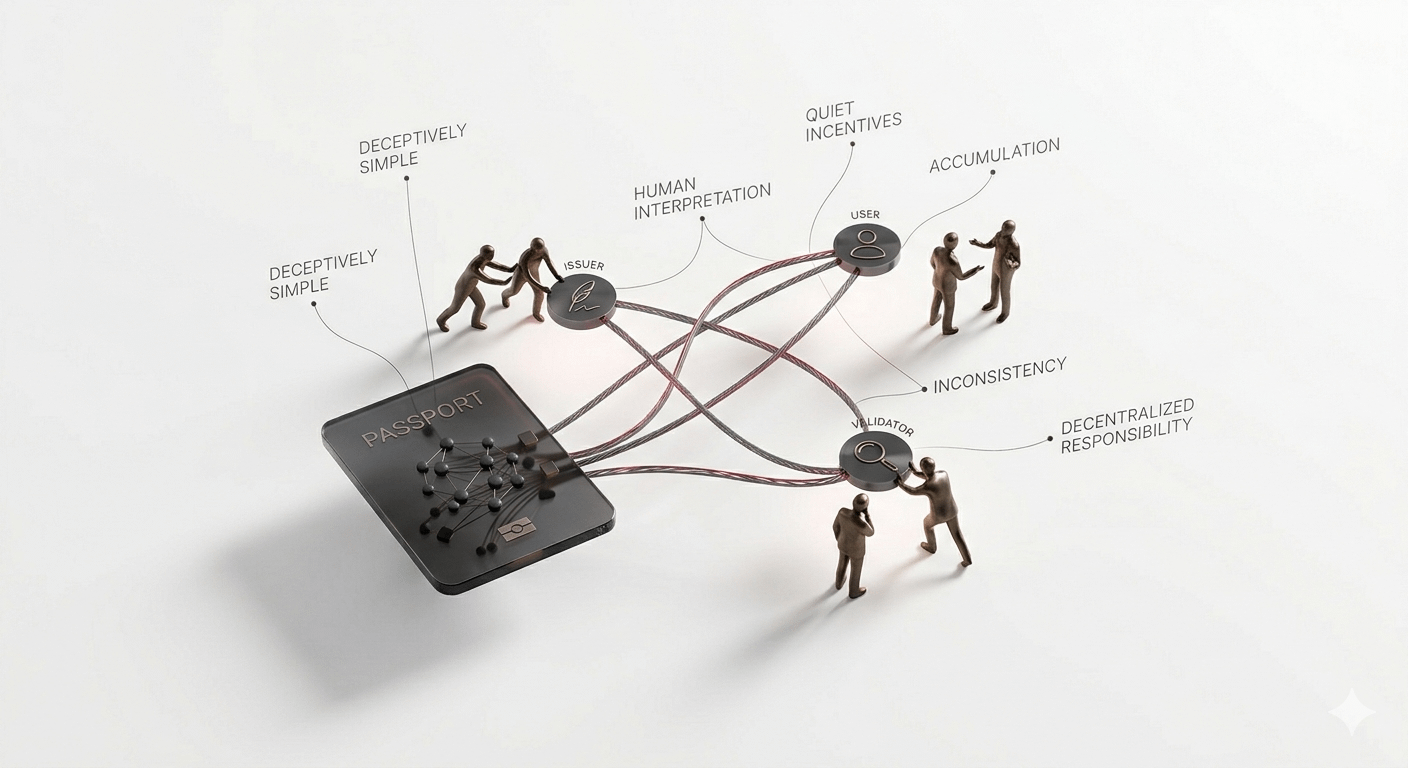

I keep thinking about Sign and how deceptively simple it looks on the surface. It’s almost meditative in its structure: issuers create credentials, validators check them, users carry them around like digital passports. And that’s appealing at first glance—clarity, minimal friction, something that just works. But then I start circling the edges, noticing where assumptions quietly stack up. The system assumes people will act predictably, that validators will behave as expected, that issuers won’t drift from their standards. That’s a lot of faith to place on layers of human behavior that are inherently messy.

And honestly, I get why Sign stays so restrained. By keeping the mechanics minimal, it avoids becoming an overbearing authority, and that part makes sense to me. But minimalism also means neutrality, and neutrality shifts responsibility outward. Users have to interpret what it means to carry a credential, platforms have to decide how to treat it, and suddenly a system designed for efficiency depends on countless small, unpredictable human decisions. That’s the tension: portability feels clean, but interpretation is inherently messy. A credential accepted one way in one context can behave differently somewhere else. Over time, small misalignments could compound into something substantial.
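To make that concrete for myself, here is a minimal sketch of one credential being read by two platforms with different rules. Everything in it is hypothetical: the `Credential` fields, the `PlatformPolicy` class, and the issuer and platform names are my own illustration, not Sign's schema or API.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Credential:
    # Hypothetical fields for illustration; not Sign's actual schema.
    subject: str
    claim: str
    issuer: str
    issued_on: date


@dataclass(frozen=True)
class PlatformPolicy:
    # Each platform decides for itself which issuers it trusts
    # and how old a credential may be before it is ignored.
    name: str
    trusted_issuers: frozenset
    max_age_days: int

    def accepts(self, cred: Credential, today: date) -> bool:
        age_days = (today - cred.issued_on).days
        return cred.issuer in self.trusted_issuers and age_days <= self.max_age_days


# One credential, carried unchanged between contexts.
cred = Credential("alice", "kyc-passed", "issuer-a", date(2024, 1, 1))
today = date(2024, 6, 1)

# Two hypothetical platforms with different interpretations.
strict = PlatformPolicy("strict-exchange", frozenset({"issuer-a"}), max_age_days=90)
lenient = PlatformPolicy("lenient-forum", frozenset({"issuer-a", "issuer-b"}), max_age_days=365)

decisions = {p.name: p.accepts(cred, today) for p in (strict, lenient)}
print(decisions)
```

The credential is 152 days old on the check date, so the strict platform rejects it and the lenient one accepts it. Nothing about the credential changed; only the interpretation did.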

Then there’s the incentives layer, subtle but unavoidable. Validators are rewarded for correctness, issuers gain influence through repeated confirmations, users gain convenience. It all looks balanced, but balance isn’t the same as alignment. Incentives nudge behavior quietly, sometimes in ways the designers didn’t intend. A validator might prioritize speed over scrutiny. An issuer might gain de facto authority. Users might overshare because the cost of friction feels higher than the cost of misunderstanding. The system doesn’t break when that happens. It just drifts, slowly. That’s both fascinating and worrying.

I keep circling back to adoption, because theory and reality diverge here in a very human way. Sign’s design works well in controlled conditions, when everyone follows the intended roles and the context is clear. But real-world networks are messy. People misunderstand, platforms misinterpret, and integration layers introduce subtle inconsistencies. A credential might carry different weight depending on where it’s checked. That inconsistency isn’t catastrophic immediately, but it accumulates. And accumulation is what most systems fail to anticipate. It’s quiet, invisible, but meaningful.

Privacy versus transparency also sits in the back of my mind. Sign allows portability without centralizing verification, which feels good—users retain agency. But decentralized verification means oversight is diffuse. There’s less guidance for borderline cases, and the system won’t automatically correct misunderstandings. So while it preserves freedom, it also leaves a lot of room for misalignment or misuse. That trade-off is never clean. Freedom comes with ambiguity.

And I keep returning to that point: Sign redistributes complexity rather than eliminating it. By compressing repeated verification into portable credentials, it moves the friction outward—to users, platforms, and human interpretation. That makes the design elegant, but it also makes adoption tricky. The same strength—flexibility and portability—could also become a subtle weakness in large-scale, messy environments. Will the system handle thousands of platforms, millions of users, and countless edge cases without silently degrading? I don’t know. That’s the tension I find most compelling.

So, in the end, Sign fascinates me not because it solves every problem, but because it exposes where the hard parts of digital trust really live: not in the code, but in the friction, the interpretation, the drift. Watching it operate feels like observing a quiet experiment in trust, one that’s elegant, restrained, and quietly vulnerable at the same time. And I can’t help but circle back to that edge where design meets real-world chaos, because that’s where the story actually unfolds.