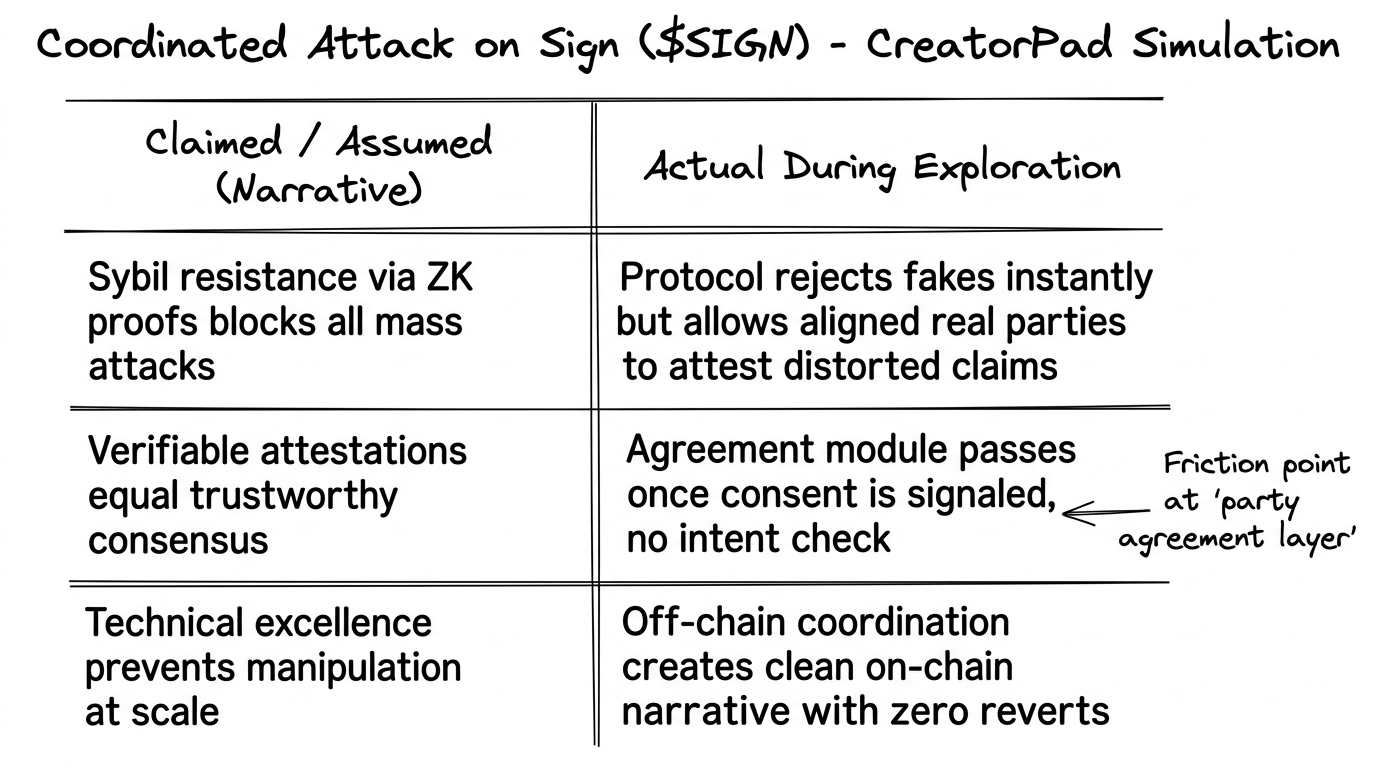

While scanning the chain last night, I found the SIGN token contract at 0x868FCEd65edBF0056c4163515dD840e9f287A4c3 on Etherscan showing nothing out of the ordinary: no unusual transfers, no clustered calls, just the quiet hum of 211 total transfers across its life and 639 holders, with barely a ripple in the last 14 days. That stillness hit different after the CreatorPad task I’d wrapped up earlier, where the prompt forced me to map out what a coordinated attack on Sign ($SIGN) #SignDigitalSovereignInfra @SignOfficial would actually look like in practice. I expected fireworks, some clever exploit flooding the attestation layer with fakes. Instead, the simulation kept returning the same understated result: the protocol’s Sybil resistance held firm, with ZK proofs and minimal collateral doing exactly what the docs promised. The real vector emerged somewhere quieter, in the space between parties agreeing on what gets attested.

the contrast that stuck with me

The task pulled me into a multi-party scenario, the kind that mirrors real-world credential flows such as supply-chain proofs, compliance records, and reputation scores. I set up three simulated actors, each with a clean wallet and a distinct history, then had them coordinate off-chain via a shared script to attest the same slightly distorted claim: a “verified” asset transfer that technically passed every hook but carried a subtle inconsistency in the data payload. The on-chain result? Clean attestations issued in seconds, synced cross-chain without friction, with no reverts and no flags. The design choice that lingered was that the protocol prioritizes verifiable agreement once the parties signal consent, rather than second-guessing the human intent behind it. Technically flawless. Socially, it opened a door I hadn’t fully appreciated before.
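A toy model makes that gap concrete. This is a minimal Python sketch under loud assumptions: `Attestation`, `schema_hook`, `issue`, and `VERIFIED_ATTESTERS` are hypothetical names, not Sign Protocol’s actual API, the Sybil check is reduced to a set lookup standing in for the real ZK proofs, and the amounts are made up. What it shows is the shape of the problem: every check inspects form and consent, and none of them can see the off-chain truth.

```python
from dataclasses import dataclass

# Toy model only: names and checks are hypothetical, not Sign Protocol's API.

@dataclass
class Attestation:
    attester: str
    schema_id: str
    payload: dict

# Attesters that already cleared Sybil checks (assumed: distinct, clean wallets).
VERIFIED_ATTESTERS = {"actor_a", "actor_b", "actor_c"}

REQUIRED_FIELDS = {"asset_id", "amount", "verified"}

def schema_hook(payload: dict) -> bool:
    """Validates shape and types only; it never sees off-chain reality."""
    return (REQUIRED_FIELDS <= payload.keys()
            and isinstance(payload["amount"], int)
            and payload["verified"] is True)

def issue(attester: str, schema_id: str, payload: dict) -> Attestation:
    # Consent layer: a registered attester signing a well-formed claim is enough.
    if attester not in VERIFIED_ATTESTERS:
        raise PermissionError(f"{attester} failed Sybil checks")
    if not schema_hook(payload):
        raise ValueError("schema hook rejected payload")
    return Attestation(attester, schema_id, dict(payload))

# Off-chain truth the protocol never observes (hypothetical number).
ACTUAL_AMOUNT = 900

# The shared script: all three actors attest the same distorted claim.
shared_claim = {"asset_id": "lot-42", "amount": 1000, "verified": True}
issued = [issue(a, "asset-transfer-v1", shared_claim)
          for a in sorted(VERIFIED_ATTESTERS)]

print(len(issued), "attestations issued")  # prints: 3 attestations issued
print("matches reality:", all(a.payload["amount"] == ACTUAL_AMOUNT for a in issued))
# prints: matches reality: False
```

Three valid attestations, none of them true, and nothing in the issuance path had a reason to object.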

I caught myself pausing over my own notes from the session, remembering a small anecdote from last year when I helped a friend verify an on-chain employment credential for a cross-border gig. The attestation looked perfect—timestamped, signed, immutable. Yet the employer later disputed the scope in private, and the credential became a point of friction instead of trust. That same gap showed up in the CreatorPad sim: the attack doesn’t need to break the chain; it just needs enough seemingly independent parties to align on a narrative that serves their shared (hidden) incentive. Sign ($SIGN) handles the evidence layer with elegant omni-chain precision, but the bottleneck it surfaces is older than any smart contract—coordinated intent.

hmm... this mechanic in practice

Two timely market examples made the point sharper. Back on March 7, 2026, $SIGN surged over 100% amid news of its role in sovereign digital infrastructure, volume spiking as institutions and projects began testing attestations at scale. On-chain flows didn’t show malice, just heightened activity around new schemas—exactly the moment when a coordinated group could quietly seed “legitimate” attestations that shape reputation or compliance narratives without tripping Sybil filters. Then there’s the ongoing CreatorPad campaign itself, rewarding structured engagement around #SignDigitalSovereignInfra; it drives real users to experiment, but it also creates a petri dish for testing how easily aligned actors could amplify selective attestations. In both cases the protocol behaved as designed: accessible, verifiable, low-friction. The attack surface wasn’t the tech failing; it was the social layer assuming good faith once consent is recorded.

Actually—here’s where the honest skepticism crept in. I used to lean hard on the crypto default that better cryptography equals better security. Sign’s ZK identity proofs and schema hooks make mass Sybil attacks expensive and detectable, a genuine step forward from earlier attestation experiments. But after running the coordinated scenario three times with different variables, I reevaluated that comfort. The framework that crystallized for me was a three-layer loop: technical verification (strong), party agreement (fragile), and off-chain coordination (invisible). A real attack wouldn’t announce itself with spam; it would look like a handful of high-reputation entities quietly aligning on attestations that tilt incentives—perhaps inflating a project’s compliance score or creating a false chain of custody for assets. The simulation made it feel almost too easy once the off-chain script was in place.
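The third layer of that loop is invisible by design, but a clumsy version of it can leave residue on-chain. As a rough illustration, here is a hypothetical heuristic (not something Sign ships, as far as I know) that groups attestations by a hash of their canonicalized payload and flags any payload that at least `min_attesters` distinct attesters signed within `window_s` seconds of each other. `Record`, `flag_lockstep`, and both thresholds are assumptions chosen for the example.

```python
import hashlib
import json
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical record shape; real attestation data would look different.
@dataclass
class Record:
    attester: str
    payload: dict
    timestamp: int  # seconds

def payload_key(payload: dict) -> str:
    # Canonical JSON so key order does not change the hash.
    blob = json.dumps(payload, sort_keys=True).encode()
    return hashlib.sha256(blob).hexdigest()

def flag_lockstep(records, min_attesters=3, window_s=60):
    """Return payload hashes attested by >= min_attesters distinct
    attesters within window_s seconds of each other (a weak signal)."""
    groups = defaultdict(list)
    for r in records:
        groups[payload_key(r.payload)].append(r)
    flagged = []
    for key, rs in groups.items():
        rs.sort(key=lambda r: r.timestamp)
        # Slide a time window over this payload's time-ordered records.
        start = 0
        for end in range(len(rs)):
            while rs[end].timestamp - rs[start].timestamp > window_s:
                start += 1
            if len({r.attester for r in rs[start:end + 1]}) >= min_attesters:
                flagged.append(key)
                break
    return flagged

claim = {"asset_id": "lot-42", "amount": 1000, "verified": True}
# Scripted run: attestations land seconds apart.
scripted = [Record("actor_a", claim, 0), Record("actor_b", claim, 4),
            Record("actor_c", claim, 9)]
# Patient run: identical content, spread over hours.
patient = [Record("actor_a", claim, 0), Record("actor_b", claim, 3600),
           Record("actor_c", claim, 7200)]

print(len(flag_lockstep(scripted)))  # prints: 1
print(len(flag_lockstep(patient)))   # prints: 0
```

It catches the scripted run and misses the patient one. A group that also varies a single field would slip past the hash grouping entirely, which is why this reads as a weak signal at best, not a defense.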

still pondering the ripple

I kept turning this over while the screen dimmed, the kind of late-night musing that refuses to settle. What stayed with me wasn’t fear of some dramatic exploit, but a quieter unease about how protocols like Sign ($SIGN) inherit the coordination problems we’ve always had in human systems. We celebrate trust-minimized infrastructure, yet the moment parties must agree on shared truth, the minimization hits its limit. The CreatorPad task didn’t expose a bug; it exposed an assumption: that verifiable data alone prevents manipulation, even when the manipulators play by the rules.

There’s a personal reflection in that. I’ve spent years watching on-chain projects optimize for scale and privacy, believing the social layer would catch up through incentives or community norms. Sign forces a more uncomfortable admission: the attack that matters most might already be happening in plain sight, not as malice but as ordinary strategic alignment among sophisticated actors who understand the attestation rails better than most users ever will. It doesn’t break the ledger; it simply bends the narrative the ledger is asked to certify.

The longer I sat with it, the more the question felt unresolved in a way that matters for anyone building or relying on these systems. If a coordinated attack on Sign looks less like a hack and more like a carefully orchestrated consensus among the very parties the protocol is designed to serve, then perhaps the next layer of defense isn’t another proof or hook, but something we haven’t quite named yet—some way to surface the invisible coordination before it hardens into accepted fact.

What if the real test for protocols like this isn’t whether they can stop bad actors from lying, but whether they can make it harder for good actors to quietly agree on the wrong truth?