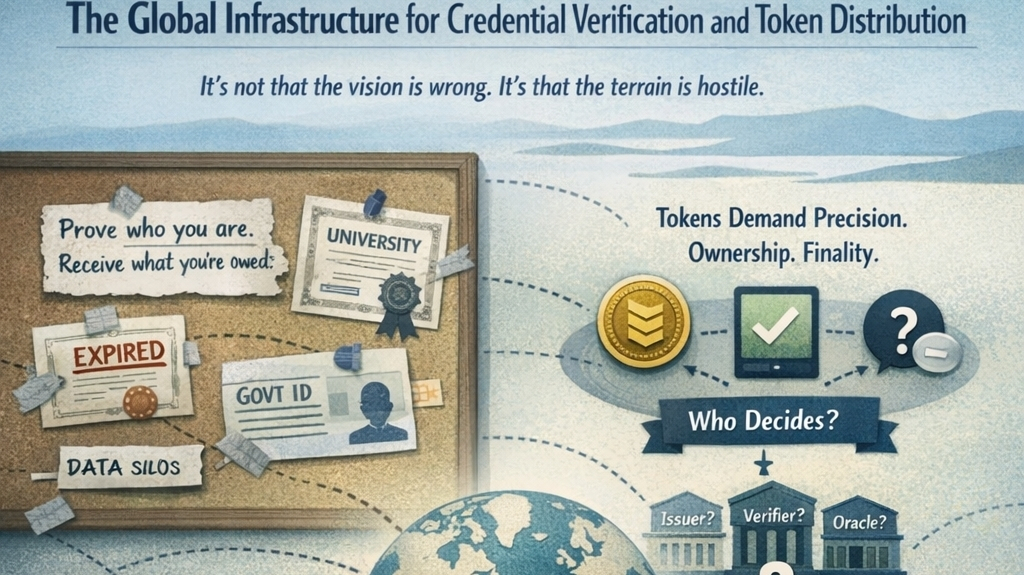

I tend to look at credential verification and token distribution systems the same way I look at settlement layers in markets: not as narratives, but as machinery. What matters is not what they promise, but how they behave when usage becomes uneven, adversarial, or simply large. A global infrastructure for credential verification sounds clean in theory, but in practice it sits at the intersection of identity, incentives, and capital flow—three areas that rarely align without friction.

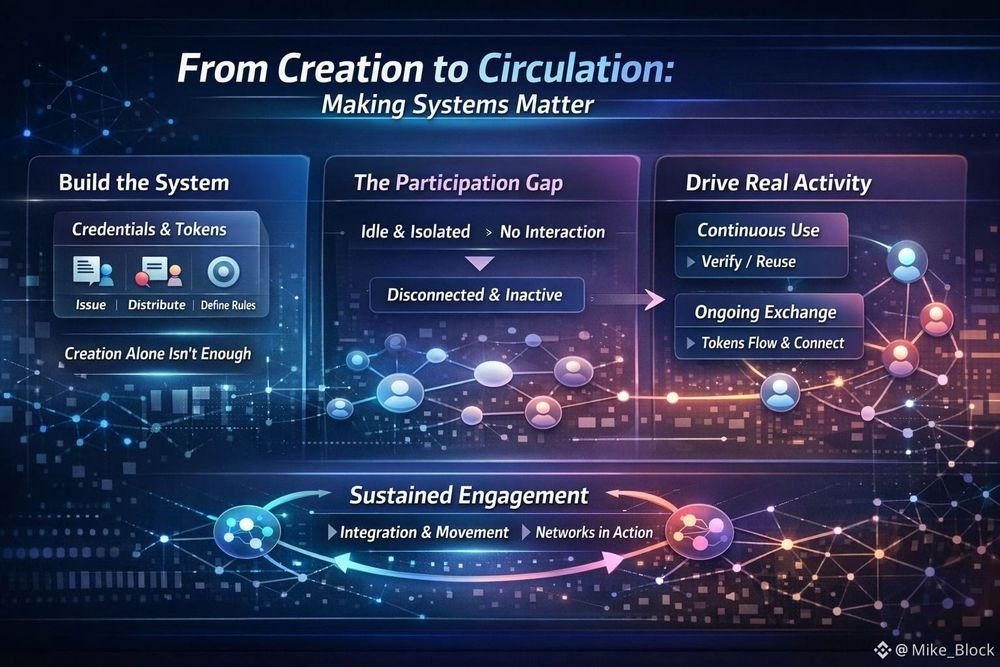

What I find most revealing is how credentials are actually issued and consumed over time. It’s easy to imagine a steady flow of attestations—users proving something about themselves, protocols recognizing it, tokens moving accordingly. But real usage doesn’t look like that. It clusters. There are bursts of verification around incentives, airdrops, access gates, and campaigns. Outside of those windows, activity often drops off sharply. That alone tells you something: credentials are not inherently valuable; they become valuable when tied to distribution events or gating mechanisms that create urgency.

That creates a subtle but important dependency. The infrastructure doesn’t just verify truth—it amplifies moments of economic attention. If token distribution is the primary driver of credential demand, then the system inherits the cyclical and opportunistic nature of those distributions. You can see this on-chain when issuance spikes align with reward programs, and then flatten out when incentives dry up. The infrastructure becomes reactive, not foundational.
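
One way to check this from raw data is to compare issuance during reward windows with issuance outside them. Below is a minimal Python sketch. It assumes you already have daily issuance counts and a list of days when a reward program was live. The function name `issuance_lift` and both inputs are hypothetical and are not part of any particular protocol's API.

```python
from datetime import date, timedelta


def issuance_lift(daily_issuance: dict[date, int], reward_days: set[date]) -> float:
    """Mean daily issuance inside reward windows divided by the mean outside them.

    daily_issuance: hypothetical mapping of day -> credentials issued that day.
    reward_days: hypothetical set of days on which a reward program was live.
    """
    inside = [n for d, n in daily_issuance.items() if d in reward_days]
    outside = [n for d, n in daily_issuance.items() if d not in reward_days]
    if not inside or not outside:
        raise ValueError("need days both inside and outside reward windows")
    mean_in = sum(inside) / len(inside)
    mean_out = sum(outside) / len(outside)
    # Zero baseline issuance means all observed activity is incentive-driven.
    return mean_in / mean_out if mean_out else float("inf")


# Toy data: ten days, with a three-day reward campaign in the middle.
start = date(2024, 1, 1)
days = [start + timedelta(days=i) for i in range(10)]
reward = set(days[3:6])
issuance = {d: (500 if d in reward else 50) for d in days}
print(issuance_lift(issuance, reward))  # 10.0
```

A lift near 1.0 would point to steady demand that does not depend on incentives. A large lift is the reactive pattern described above.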

The more interesting layer sits underneath: who is doing the verifying, and what are they optimizing for? Validators or attesters in such systems are rarely neutral actors. They are economic participants. If issuing a credential carries any form of reward—direct or indirect—then the quality of verification becomes a function of incentive design. Tight incentives lead to conservative issuance, slower growth, and higher trust density. Loose incentives lead to rapid expansion, but also dilution. You don’t need to read documentation to see which path a system has taken; you can infer it from the distribution of credentials per user, the reuse patterns, and how often those credentials are actually referenced in downstream interactions.
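
The first two signals, credentials per holder and cross-application reuse, come straight out of issuance records. Here is a rough sketch. It assumes a hypothetical export of `(holder, credential_id)` issuance pairs and `(credential_id, app)` verification events. The name `issuance_profile` and the field layout are illustrative and do not reflect any protocol's actual schema.

```python
from collections import Counter, defaultdict
from statistics import median


def issuance_profile(issued, references):
    """Summarize how credentials spread across holders and how often they are reused.

    issued: list of (holder, credential_id) pairs; ids assumed unique (hypothetical schema).
    references: iterable of (credential_id, app) pairs, one per downstream verification.
    """
    if not issued:
        raise ValueError("no issued credentials")
    per_holder = Counter(holder for holder, _ in issued)
    apps_per_cred = defaultdict(set)
    for cred, app in references:
        apps_per_cred[cred].add(app)
    # A credential counts as "reused" if more than one distinct app has checked it.
    reused = sum(1 for _, cred in issued if len(apps_per_cred.get(cred, ())) > 1)
    return {
        "median_per_holder": median(per_holder.values()),
        "max_per_holder": max(per_holder.values()),
        "share_reused": reused / len(issued),
    }


issued = [("alice", "c1"), ("bob", "c2"), ("sybil", "c3"), ("sybil", "c4"), ("sybil", "c5")]
references = [("c1", "dex"), ("c1", "lending"), ("c2", "dex"), ("c3", "airdrop")]
print(issuance_profile(issued, references))
# {'median_per_holder': 1, 'max_per_holder': 3, 'share_reused': 0.2}
```

A maximum far above the median hints that a few addresses are accumulating credentials. Low cross-app reuse suggests attestations that rarely travel beyond their origin.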

There’s also a quiet tension between portability and specificity. A credential system that aims to be global needs to produce attestations that are reusable across contexts. But the more reusable a credential is, the more abstract it becomes, and abstraction tends to weaken enforcement. On the other hand, highly specific credentials—tied to a single application or condition—carry stronger guarantees but fragment the system. In practice, most infrastructures oscillate between these two extremes without fully resolving the trade-off. You end up with a layered mess: some credentials are broadly recognized but weakly enforced, while others are highly trusted but rarely used outside their origin.

Token distribution adds another layer of complexity. Distribution mechanisms are often framed as neutral processes—tokens allocated based on verified attributes. But the reality is that distribution logic shapes behavior upstream. If users know that certain credentials unlock future rewards, they will optimize for acquiring those credentials, regardless of whether the underlying signal remains meaningful. This creates a feedback loop where the act of verification becomes gamified, and over time, the signal degrades.

You can observe this degradation indirectly. Look at how often newly issued credentials are followed by immediate token claims or transfers. Look at how long tokens stay in the hands of recipients versus how quickly they are sold or bridged. If the majority of distributed tokens exit the system quickly, it suggests that the credentials enabling that distribution are not anchoring long-term participation. They are functioning as temporary access keys to liquidity.
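
Both measurements reduce to a few timestamps per recipient. Here is a minimal sketch. It assumes each recipient record carries three things: the credential issuance time, the claim time, and the time tokens were first sold or bridged out (or `None` if they are still held). The `Recipient` type, the `exit_profile` function, and the seven-day default window are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Recipient:
    credential_issued: datetime
    claimed: datetime
    exited: Optional[datetime]  # first sale or bridge-out; None if still held


def exit_profile(recipients, window=timedelta(days=7)):
    """Return (median issuance-to-claim delay, share of recipients exiting within `window` of claiming)."""
    if not recipients:
        raise ValueError("no recipients")
    delays = [r.claimed - r.credential_issued for r in recipients]
    fast_exits = sum(
        1 for r in recipients
        if r.exited is not None and r.exited - r.claimed <= window
    )
    return median(delays), fast_exits / len(recipients)


t0 = datetime(2024, 3, 1)
recipients = [
    Recipient(t0, t0 + timedelta(hours=1), t0 + timedelta(hours=3)),  # claimed and sold same day
    Recipient(t0, t0 + timedelta(hours=2), t0 + timedelta(days=2)),   # bridged out about two days later
    Recipient(t0, t0 + timedelta(days=1), None),                      # still holding
]
delay, fast_share = exit_profile(recipients)
print(delay, round(fast_share, 2))  # 2:00:00 0.67
```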

There’s also a structural issue around storage and permanence. Credentials are often treated as durable records, but their relevance decays. A verification that was meaningful at one point—say, participation in a network, ownership of an asset, or completion of a task—may lose significance as conditions change. Yet the infrastructure typically preserves these credentials indefinitely. This creates a kind of historical bloat where the system accumulates attestations that no longer reflect current reality, but still influence distribution logic or access decisions.

From a design perspective, the question isn’t just how to verify credentials, but how to retire them. Very few systems handle this well. Revocation mechanisms exist, but they are rarely used at scale because they introduce friction and potential conflict. It’s easier to keep adding new credentials than to invalidate old ones. Over time, this leads to a layered state where the signal-to-noise ratio declines, and participants rely more on recent activity than on the full credential set.
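
Retirement does not have to mean active revocation. The sketch below shows two mechanisms. The first is a hard validity check: a credential counts only if it is unrevoked and within an optional time-to-live. The second is a soft exponential-decay weight, which lets old attestations fade out of distribution logic instead of counting forever. The credential record, the revocation set, and the 90-day half-life are all hypothetical placeholders.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class Credential:
    cred_id: str
    issued_at: datetime
    ttl: Optional[timedelta]  # None means it never expires: the indefinite retention described above


def is_live(cred: Credential, now: datetime, revoked: set) -> bool:
    """Hard check: the credential counts only if unrevoked and still inside its TTL."""
    if cred.cred_id in revoked:
        return False
    return cred.ttl is None or now < cred.issued_at + cred.ttl


def relevance(cred: Credential, now: datetime, half_life=timedelta(days=90)) -> float:
    """Soft check: for now >= issued_at, a weight in (0, 1] that halves every `half_life`."""
    age_in_half_lives = (now - cred.issued_at) / half_life
    return 0.5 ** age_in_half_lives


now = datetime(2024, 6, 1)
revoked = {"flagged-1"}
old = Credential("task-2023", datetime(2023, 6, 1), None)
recent = Credential("stake-q2", datetime(2024, 5, 1), timedelta(days=60))
flagged = Credential("flagged-1", datetime(2024, 5, 20), None)

print([is_live(c, now, revoked) for c in (old, recent, flagged)])  # [True, True, False]
print(relevance(recent, now, half_life=timedelta(days=31)))        # 0.5
```

Note that `old` still passes the hard check a year later, which is exactly the historical bloat described above. Only the decay weight, about 0.06 at a 90-day half-life, reflects that it has gone stale.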

Another angle I pay attention to is settlement speed and composability. If credential verification is slow or expensive, it won’t be used in real-time interactions. It becomes a pre-processing step—something you do once before accessing a system. But if verification is fast and cheap, it can be embedded directly into transactions, shaping behavior at the moment of action. This difference matters. Real-time verification allows for dynamic incentives, conditional transfers, and adaptive access control. Static verification limits the system to coarse gating.
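
The difference between the two comes down to when the check runs. Here is a minimal sketch built on a toy in-memory ledger. A hypothetical `has_credential` callback stands in for whatever verification the infrastructure actually exposes. The static gate snapshots eligibility once. The conditional transfer re-reads it at the moment of action, so only the latter catches a credential revoked in between.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Ledger:
    balances: Dict[str, int] = field(default_factory=dict)

    def conditional_transfer(self, sender: str, recipient: str, amount: int,
                             has_credential: Callable[[str], bool]) -> None:
        """Move `amount` only if `recipient` passes the credential check at transfer time."""
        if not has_credential(recipient):
            raise PermissionError(f"{recipient} lacks the required credential")
        if self.balances.get(sender, 0) < amount:
            raise ValueError("insufficient balance")
        self.balances[sender] -= amount
        self.balances[recipient] = self.balances.get(recipient, 0) + amount


live_holders = {"alice", "bob"}
snapshot = frozenset(live_holders)   # static gating: eligibility captured once, up front
ledger = Ledger({"treasury": 100})

live_holders.discard("bob")          # bob's credential is revoked after the snapshot
print("bob" in snapshot)             # True: the static gate would still admit bob

ledger.conditional_transfer("treasury", "alice", 10, lambda a: a in live_holders)
try:
    ledger.conditional_transfer("treasury", "bob", 10, lambda a: a in live_holders)
except PermissionError as err:
    print(err)                       # bob lacks the required credential
print(ledger.balances)               # {'treasury': 90, 'alice': 10}
```

Passing the check as a callback keeps the sketch agnostic about how verification actually happens. In a real system, that call is the part that has to be fast and cheap enough to sit inside every transaction.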

You can often infer this from how frequently credentials are referenced in transactions versus how often they are simply stored and forgotten. High reference frequency suggests that the infrastructure is actually integrated into the flow of economic activity. Low frequency suggests that it’s more of a registry than an active layer.
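
Here is a rough way to put a number on it. It assumes you can list the credentials stored in a registry and the credential ids cited by transactions over the same period. Both inputs are hypothetical, and `reference_intensity` is an illustrative name.

```python
from collections import Counter


def reference_intensity(stored_ids, referencing_txs):
    """Return (references per stored credential, share of stored credentials never referenced).

    stored_ids: ids of credentials held in the registry (hypothetical input).
    referencing_txs: credential ids cited by transactions in the same period, one entry per citation.
    """
    stored = set(stored_ids)
    if not stored:
        raise ValueError("no stored credentials")
    # Ignore citations of ids the registry does not hold.
    hits = Counter(c for c in referencing_txs if c in stored)
    per_credential = sum(hits.values()) / len(stored)
    never_used = sum(1 for c in stored if hits[c] == 0) / len(stored)
    return per_credential, never_used


stored = ["c1", "c2", "c3", "c4"]
cited = ["c1", "c1", "c1", "c2", "c9"]  # "c9" is not in the registry and is ignored
print(reference_intensity(stored, cited))  # (1.0, 0.5)
```

The averaged ratio can hide concentration. Here, one credential accounts for three of the four citations, which is why I would read the never-referenced share alongside it and compare both across periods rather than against a fixed threshold.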

There’s also an overlooked psychological component. Traders and users don’t think in terms of credentials; they think in terms of outcomes. If the path from credential to outcome is unclear or delayed, engagement drops. This is why tightly coupled systems—where verification leads quickly to a tangible result—tend to see higher usage, even if the underlying verification is weaker. The market consistently favors immediacy over purity.

Over time, these small design choices compound. Incentives shape issuance, issuance shapes distribution, distribution shapes liquidity, and liquidity feeds back into participation. None of this is visible at a glance, but it shows up in the data if you look closely enough. Wallet clustering, transaction timing, credential reuse patterns—they all tell the same story if you’re paying attention.
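
Transaction timing is the easiest of these to sketch. The heuristic below is a simple one-dimensional single-linkage grouping. A claim that lands within `max_gap` of the previous claim joins the same burst, and bursts with many distinct wallets are flagged. The input format, the 30-second gap, and the three-wallet minimum are assumptions for illustration. Timing alone flags candidates for closer inspection; it does not prove common control.

```python
from datetime import datetime, timedelta


def timing_bursts(claims, max_gap=timedelta(seconds=30), min_wallets=3):
    """Group claims into bursts and return the wallet sets of the dense ones.

    claims: iterable of (wallet, timestamp) pairs (hypothetical input).
    A claim joins the current burst if it lands within `max_gap` of the previous claim
    (1-D single-linkage clustering on time). Bursts with at least `min_wallets`
    distinct wallets are returned as candidates for closer inspection.
    """
    bursts, current, last_ts = [], set(), None
    for wallet, ts in sorted(claims, key=lambda c: c[1]):
        if last_ts is not None and ts - last_ts > max_gap:
            bursts.append(current)
            current = set()
        current.add(wallet)
        last_ts = ts
    if current:
        bursts.append(current)
    return [b for b in bursts if len(b) >= min_wallets]


t = datetime(2024, 4, 1, 12, 0, 0)
claims = [
    ("w1", t), ("w2", t + timedelta(seconds=5)), ("w3", t + timedelta(seconds=12)),
    ("w4", t + timedelta(minutes=20)), ("w5", t + timedelta(hours=3)),
]
print([sorted(b) for b in timing_bursts(claims)])  # [['w1', 'w2', 'w3']]
```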

What I’ve come to accept is that a global infrastructure for credential verification and token distribution doesn’t succeed by being perfectly accurate or universally trusted. It succeeds by being just reliable enough to coordinate behavior at scale, without introducing so much friction that users route around it. That balance is fragile. Push too far in either direction—toward strictness or flexibility—and the system starts to lose relevance.

And when I look at these systems in the wild, I don’t see stability. I see constant adjustment. Parameters shift, incentives are recalibrated, new credential types are introduced, old ones fade away. It’s less like a fixed infrastructure and more like a living market, responding to pressure, adapting to misuse, and quietly redefining what counts as a valid signal.

@SignOfficial #SignDigitalSovereignInfra $SIGN