What we casually refer to as “privacy settings” carries an implicit promise: control. A toggle suggests authority, a checkbox implies consent, and a settings panel feels like ownership. But this interface-level comfort hides a deeper structural question—are these settings actual guarantees of digital rights, or are they simply preferences offered within a system whose boundaries are defined elsewhere? In other words, are we shaping our privacy, or are we selecting from pre-approved versions of it?

Decentralized identity systems enter this conversation as both a technological breakthrough and a philosophical pivot. They claim to invert the traditional model where institutions store, control, and verify identity. Instead, the individual becomes the holder of verifiable credentials, capable of presenting proofs without surrendering raw data. This is where concepts like Selective Disclosure become central. The idea is simple but powerful: share only the “Minimum Viable Data.” Prove you are over 18 without revealing your exact birthdate. Confirm eligibility without exposing your entire identity profile. From a cryptographic standpoint, this is a profound leap forward.

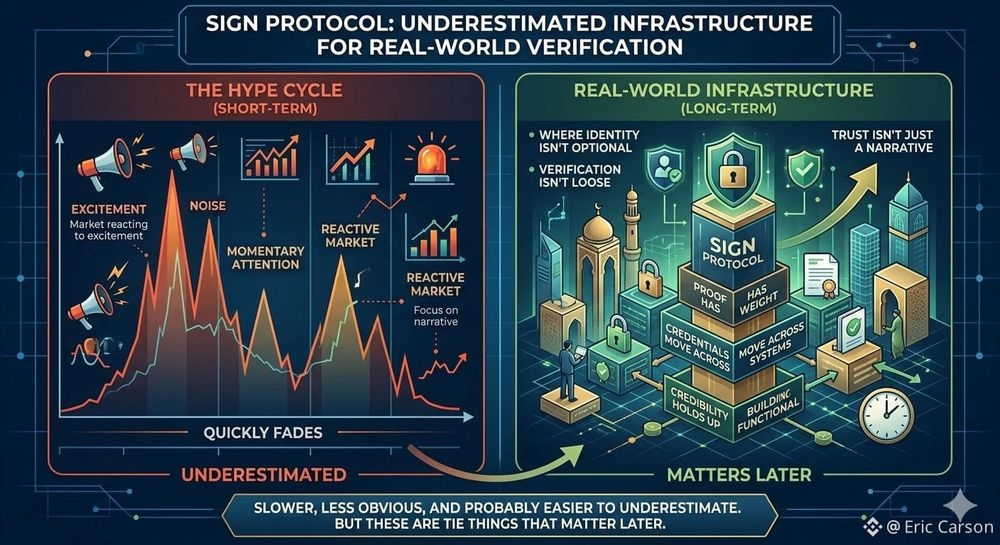

Under the hood, these systems rely on mathematical proofs rather than institutional trust. Zero-knowledge constructions, signed attestations, and verifiable credentials allow data to remain on the holder's device while still being verifiable by any party that trusts the issuer. The elegance lies in the separation between truth and exposure: you can prove that a statement is true without revealing the underlying data that makes it true. Protocols such as the Protocol operationalize this idea, enabling credentials to move across platforms, ecosystems, and jurisdictions without losing their integrity. Identity, in this model, becomes portable, composable, and user-centric.
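To make the mechanics a little more concrete, here is a minimal sketch of selective disclosure built on salted hash commitments. It is an illustration under stated assumptions, not how any particular protocol implements it: the function names (`issue`, `present`, `verify`) are hypothetical, the HMAC with a shared `ISSUER_KEY` is only a standard-library stand-in for a real asymmetric issuer signature, and the "over 18" fact is modeled as a separate claim the issuer attests to rather than a zero-knowledge proof computed from the birthdate.

```python
import hashlib
import hmac
import json
import secrets

# Assumption: a shared secret stands in for the issuer's signing key.
# Real systems use asymmetric signatures so verifiers never hold this key.
ISSUER_KEY = secrets.token_bytes(32)


def _digest(name, value, salt):
    """Commit to one claim by hashing its salt, name, and value together."""
    payload = json.dumps([salt, name, value]).encode()
    return hashlib.sha256(payload).hexdigest()


def _sign(digests):
    """Stand-in signature over the list of claim digests."""
    message = json.dumps(digests).encode()
    return hmac.new(ISSUER_KEY, message, hashlib.sha256).hexdigest()


def issue(claims):
    """Issuer: salt and hash every claim, then sign only the digests."""
    salts = {name: secrets.token_hex(16) for name in claims}
    digests = sorted(_digest(n, v, salts[n]) for n, v in claims.items())
    credential = {"digests": digests, "signature": _sign(digests)}
    return credential, salts


def present(credential, claims, salts, reveal):
    """Holder: disclose only the chosen claims, each with its salt."""
    disclosed = [(n, claims[n], salts[n]) for n in reveal]
    return {"credential": credential, "disclosed": disclosed}


def verify(presentation):
    """Verifier: check the signature, then match disclosed claims to digests."""
    cred = presentation["credential"]
    if not hmac.compare_digest(_sign(cred["digests"]), cred["signature"]):
        return None
    verified = {}
    for name, value, salt in presentation["disclosed"]:
        if _digest(name, value, salt) not in cred["digests"]:
            return None  # disclosed value does not match what was signed
        verified[name] = value
    return verified


claims = {"name": "Alice", "birthdate": "1990-04-12", "age_over_18": True}
credential, salts = issue(claims)
presentation = present(credential, claims, salts, reveal=["age_over_18"])
print(verify(presentation))  # {'age_over_18': True}
```

The verifier learns that the issuer attested to `age_over_18` and nothing else: the name and birthdate appear only as salted digests, which cannot be reversed or guessed without the salts the holder kept private.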

From a purely technical perspective, the system works. It reduces unnecessary data sharing, limits centralized honeypots of sensitive information, and introduces a framework where verification does not require surrendering the underlying data. It is efficient, scalable, and aligned with long-standing privacy ideals. If the story ended here, decentralized identity would represent a clean victory for user sovereignty.

But the story does not end at the protocol layer.

Every technical system operates within a broader framework of rules: legal, economic, and institutional. And this is where the narrative becomes more complex. The cryptography defines what is possible, but policy defines what is acceptable. The system may allow you to disclose only fragments of your identity, but the entity requesting verification determines which fragments are sufficient.

This introduces the concept of Policy-Controlled Boundaries. These are the limits not imposed by code, but by governance. A protocol may support minimal disclosure, but a regulator might require expanded data fields for compliance. A platform might demand additional credentials for risk mitigation. Over time, these requirements shape the practical reality of the system, regardless of its theoretical capabilities.

Within this structure emerges a more subtle dynamic—Conditional Choice. On the surface, users retain the ability to choose what data to share. In practice, that choice is often constrained by consequences. Refuse to disclose certain information, and access may be denied. Decline to meet verification thresholds, and participation becomes impossible. The choice exists, but it is bounded by the cost of non-compliance.
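The relationship between a policy-controlled boundary and a conditional choice can be sketched in a few lines. This is an illustrative model only: the `Policy` structure, the `evaluate` function, and the claim names are hypothetical, and real verifiers express their requirements in their own formats. The point is that the holder's disclosure stays the same while the outcome depends entirely on what the verifier's policy currently requires.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """A verifier's disclosure requirements (hypothetical structure)."""
    name: str
    required_claims: frozenset


def evaluate(policy, disclosed):
    """Grant access only if every claim the policy requires was disclosed."""
    missing = policy.required_claims - set(disclosed)
    return {"access": not missing, "missing": sorted(missing)}


# Two versions of the same verifier policy; the second widens the boundary.
policy_v1 = Policy("age-gate v1", frozenset({"age_over_18"}))
policy_v2 = Policy("age-gate v2", frozenset({"age_over_18", "country", "full_name"}))

# The holder's preferred minimal disclosure does not change.
user_shares = {"age_over_18": True}

print(evaluate(policy_v1, user_shares))  # {'access': True, 'missing': []}
print(evaluate(policy_v2, user_shares))  # {'access': False, 'missing': ['country', 'full_name']}
```

Nothing in the cryptography changed between the two calls. The holder can still choose to share only one claim, but under the second policy that choice now costs them access.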

This is not an overt loss of control. It is something quieter, more gradual.

A Quiet Erosion of privacy.

Not through sweeping changes or visible overreach, but through incremental adjustments that accumulate over time. A new compliance standard here. An expanded definition of “required data” there. Each step appears reasonable in isolation, often justified by security, fraud prevention, or regulatory alignment. But collectively, they reshape the user’s private space, narrowing what can realistically remain undisclosed.

What makes this particularly significant is that the underlying technology remains intact throughout this process. The cryptographic guarantees do not weaken. The protocols continue to function as designed. The erosion happens not because the system fails, but because the environment around it evolves.

In this context, the Protocol should be understood not as a solution that resolves power dynamics, but as an infrastructure that makes them more explicit. It enables a world where credentials can be verified without central authorities, but it does not eliminate the influence of those who define verification standards. It provides the tools for privacy-preserving interaction, but it does not dictate how those tools are used.

This creates a persistent tension between Technical Possibility and Regulatory Reality. On one side, we have systems capable of near-perfect data minimization. On the other, we have frameworks that often prioritize transparency, auditability, and control. The result is not a binary outcome, but a negotiated equilibrium.

Participation in digital systems becomes an ongoing negotiation. Users present credentials, systems evaluate them, and policies determine whether the exchange is sufficient. Identity is no longer a static asset owned outright, but a dynamic interface between individual agency and institutional requirements.

This reframes the concept of ownership itself.

We are not simply holding our data in isolation. We are engaging in structured exchanges where access, utility, and participation are contingent on how that data is presented. The value of identity lies not just in possession, but in its acceptability within different contexts.

And acceptability is rarely neutral.

It is shaped by economic incentives, regulatory pressures, and platform-specific goals. A financial institution may demand stricter verification than a social platform. A government framework may impose broader disclosure requirements than a decentralized application. Each context introduces its own version of what is “enough,” gradually standardizing expectations across the ecosystem.

Over time, this leads to a normalization of expanded disclosure—not because users prefer it, but because systems converge around similar requirements. The negotiation space narrows, and the distinction between voluntary sharing and necessary compliance becomes increasingly blurred.

This does not mean that privacy is disappearing.

It means that privacy is being redefined as a form of negotiated participation.

A space where individuals leverage tools like Selective Disclosure to minimize exposure, while systems leverage Policy-Controlled Boundaries to ensure compliance. A dynamic where Conditional Choice shapes behavior, and Quiet Erosion subtly adjusts expectations over time.

The promise of decentralized identity remains real. It introduces capabilities that were previously unattainable, redistributes certain forms of control, and reduces reliance on centralized intermediaries. But it does not exist outside of power structures. It interacts with them, adapts to them, and is ultimately shaped by them.

So the critical question is no longer whether the technology works.

It clearly does.

The more important question is who defines the rules that govern its use—and how those rules evolve over time. Because in a system where identity is both a credential and a gateway, the power to define verification is, in many ways, the power to define participation itself.

And in that sense, we are not simply moving toward a future of data ownership.

We are entering a future where ownership is continuously negotiated, access is conditionally granted, and privacy exists not as a fixed right, but as a variable boundary—one that shifts quietly, persistently, and often without us fully noticing.