I remember the first time I stopped thinking about a bridge as a tool… and started thinking about it as a risk surface.

Before that, it felt simple.

You move assets from one chain to another. Maybe there’s a validator set, maybe a relayer, maybe some smart contracts in between.

But the mental model stays the same:

lock → mint

burn → release

And as long as it “works,” nobody questions it.

I didn’t either.

I wasn’t thinking about safety at all. I was just assuming it existed.

Until something didn’t line up.

A transfer looked successful on one side, but not fully reflected on the other. There was no clear failure, just… inconsistency.

That’s when the question changed for me.

Not “how fast is this bridge?” Not “how cheap is this bridge?”

But:

what are the rules that guarantee this system behaves correctly?

That’s where most of the conversation around bridges starts to fall apart.

Because we talk about interoperability like it’s a connectivity problem.

Connect chain A to chain B. Move value between them.

Done.

But that’s not what interoperability really is.

Interoperability is agreement between systems.

And agreement only works if there are rules both sides can rely on.

Not assumptions.

Not best-case execution.

Actual, enforceable rules.

A bridge doesn’t fail when it breaks. It fails when nobody knows if it broke.

That’s why the angle here matters:

atomicity, limits, and emergency pause are not features.

They are the rules that determine whether a bridge survives under pressure or breaks under it.

Without verifiable claims, atomicity, limits, and pause are just assumptions.

And when you look at SIGN through that lens, it stops looking like just another interoperability layer.

It starts looking like an attempt to fix what bridges were never properly designed to handle:

verifiable meaning under constraints.

Most bridges were built to move assets. Not to enforce rules.

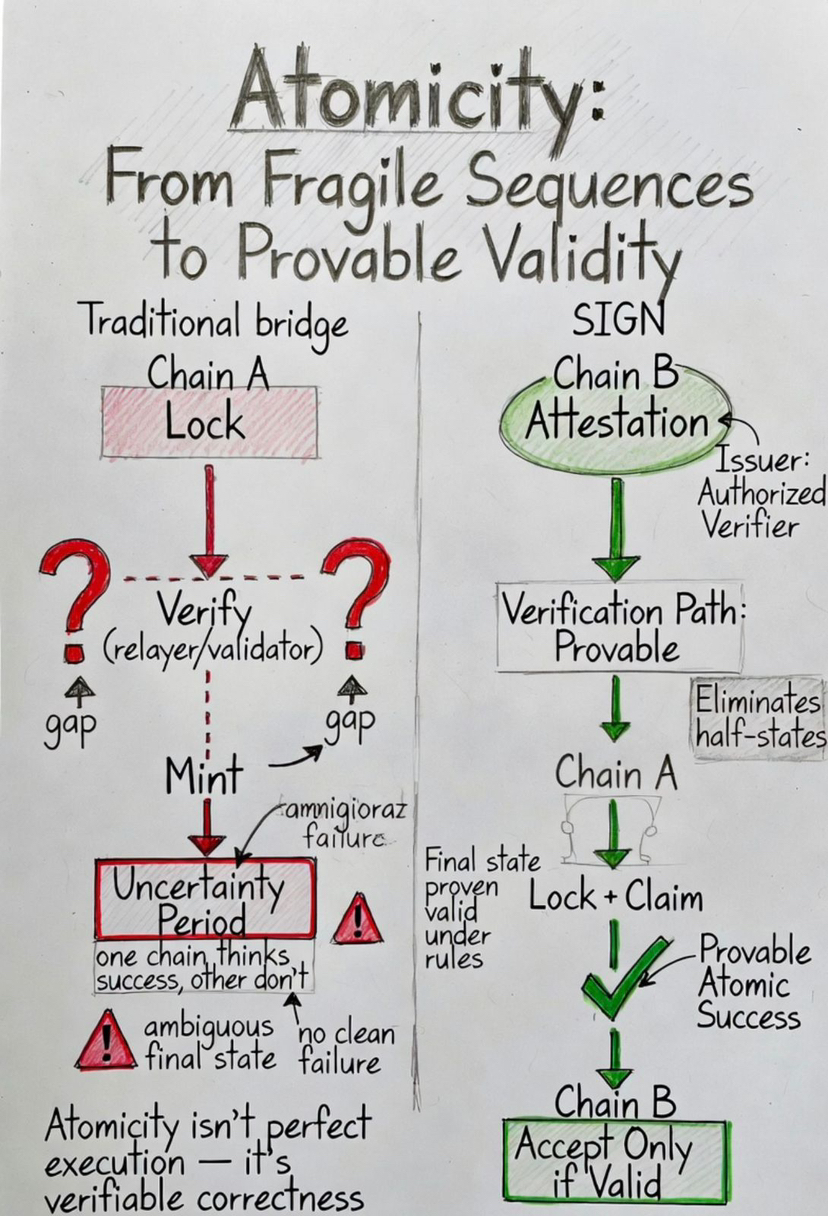

Let’s start with atomicity.

On paper, atomicity is simple.

A transfer should either fully succeed or fully fail.

No in-between.

No half-completed state.

No scenario where one chain thinks the transaction happened and the other doesn’t.

But in practice, most bridges don’t actually guarantee atomicity.

They simulate it.

They rely on sequences of steps:

lock assets on chain A

verify the event

mint a representation on chain B

Each of those steps introduces a gap.

A moment where something can go wrong.

A dependency on off-chain actors, relayers, or validators behaving correctly.

And if something breaks in between, you don’t get clean failure.

You get ambiguity.

That’s the real danger.

Not failure.

uncertain state.
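Here is a minimal sketch of that gap, in Python. It is a toy model, not any real bridge's code: the `TransferState` enum, the `run_bridge_transfer` function, and the `relayer_delivers` flag are all hypothetical names I made up to show how a three-step sequence can end in a state that is neither success nor clean failure.

```python
from enum import Enum


class TransferState(Enum):
    NOT_STARTED = "nothing locked, nothing minted"
    LOCKED_ONLY = "locked on chain A, nothing minted on chain B"
    COMPLETED = "locked on chain A, minted on chain B"


def run_bridge_transfer(relayer_delivers: bool) -> TransferState:
    """Toy lock -> verify event -> mint sequence (hypothetical model)."""
    locked_on_a = True               # step 1: the lock on chain A goes through
    event_seen = relayer_delivers    # step 2: depends on an off-chain relayer
    minted_on_b = event_seen         # step 3: mint only happens if the event arrives

    if locked_on_a and minted_on_b:
        return TransferState.COMPLETED
    if locked_on_a:
        # Funds are locked, but chain B never heard about it.
        return TransferState.LOCKED_ONLY
    return TransferState.NOT_STARTED


print(run_bridge_transfer(relayer_delivers=False).value)
```

The interesting case is `LOCKED_ONLY`. Nothing threw an error, and nothing rolled back. The two chains simply disagree.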

SIGN approaches this from a different direction.

It doesn’t try to guarantee atomicity purely through execution.

It shifts the focus to verification of outcomes.

Instead of asking:

“did every step execute correctly?”

It asks:

“can the final state be proven valid under defined rules?”

This is where attestations come in.

Every meaningful action can be expressed as a claim.

Not just:

“this transfer happened”

But:

“this transfer satisfies these conditions under this schema, verified by this issuer”

And that claim is not trusted blindly.

It is checked.

Because it is tied to:

a schema (what the claim represents)

an issuer (who is authorized to assert it)

a verification path (how it is validated)

So instead of relying entirely on sequential execution…

the receiving system relies on verifiable correctness.

These aren’t add-ons. Without this structure, the system cannot safely agree across chains.

That doesn’t remove the need for execution to work.

But it changes what matters.

It reduces dependence on fragile sequences and increases reliance on provable outcomes.

That’s a more robust way to approach atomicity.

Not as a promise of perfect execution.

But as a requirement of provable validity.
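To make the schema, issuer, and verification path idea concrete, here is a rough Python sketch. It is not SIGN's actual API or data format. The registries (`SCHEMA_FIELDS`, `SCHEMA_ISSUERS`, `ISSUER_KEYS`), the `Attestation` class, and the `issue` and `verify` functions are all assumptions of mine, and the HMAC is only a standard-library stand-in for whatever signature scheme a real system would use.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass

# Hypothetical registries, invented for this sketch.
SCHEMA_FIELDS = {"transfer.v1": {"from_chain", "to_chain", "amount"}}
SCHEMA_ISSUERS = {"transfer.v1": {"issuer-1"}}
ISSUER_KEYS = {"issuer-1": b"demo-secret-key"}  # stand-in for a real signing key


@dataclass(frozen=True)
class Attestation:
    schema: str      # what the claim represents
    issuer: str      # who asserts it
    payload: dict    # the claim itself
    signature: str   # what the verification path checks


def _digest(schema: str, issuer: str, payload: dict, key: bytes) -> str:
    # Canonical JSON so the same claim always produces the same bytes.
    message = json.dumps(
        {"schema": schema, "issuer": issuer, "payload": payload}, sort_keys=True
    ).encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def issue(schema: str, issuer: str, payload: dict) -> Attestation:
    return Attestation(schema, issuer, payload,
                       _digest(schema, issuer, payload, ISSUER_KEYS[issuer]))


def verify(att: Attestation) -> bool:
    # 1. Schema: the claim must have exactly the fields the schema defines.
    fields = SCHEMA_FIELDS.get(att.schema)
    if fields is None or set(att.payload) != fields:
        return False
    # 2. Issuer: must be authorized for this schema.
    if att.issuer not in SCHEMA_ISSUERS.get(att.schema, set()):
        return False
    # 3. Verification path: recompute the digest and compare in constant time.
    expected = _digest(att.schema, att.issuer, att.payload, ISSUER_KEYS[att.issuer])
    return hmac.compare_digest(expected, att.signature)


claim = issue("transfer.v1", "issuer-1",
              {"from_chain": "A", "to_chain": "B", "amount": 10})
print(verify(claim))
```

The receiving side never asks whether each step ran. It only asks whether the claim in front of it passes all three checks.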

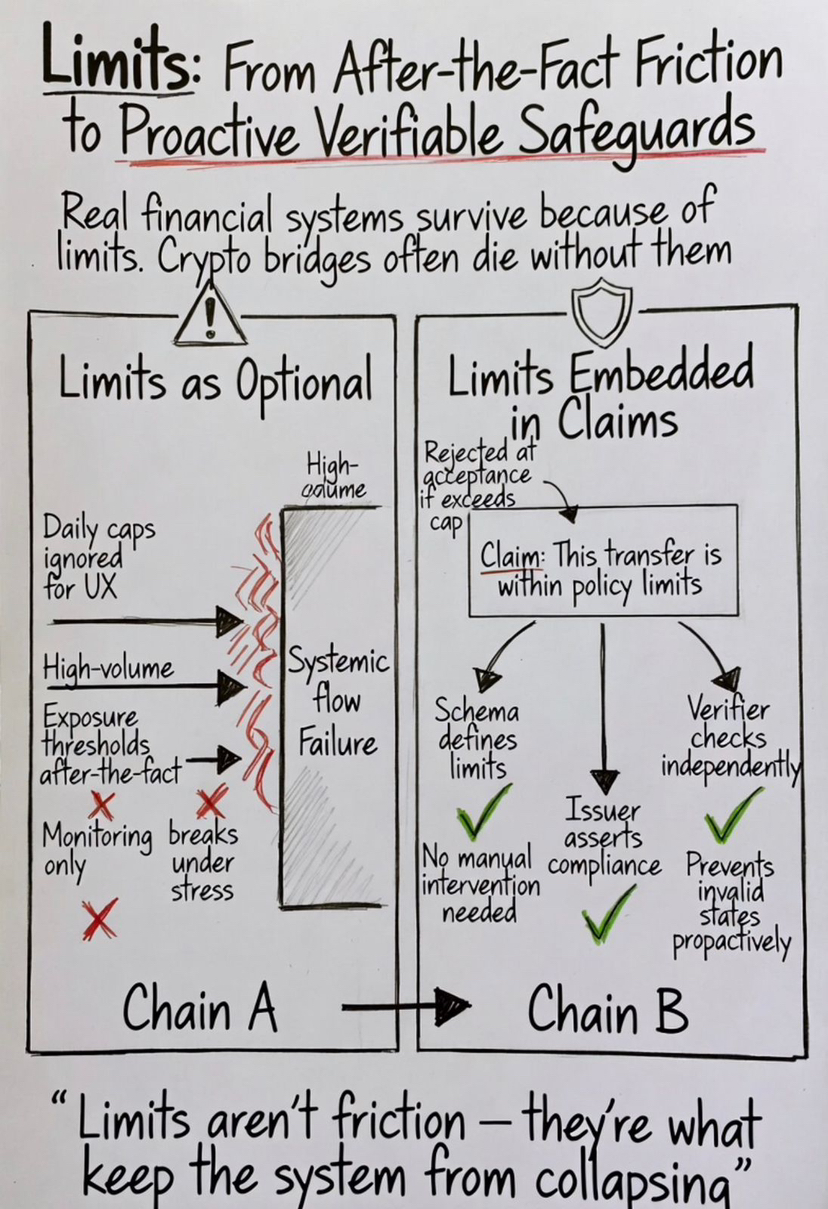

Then there are limits.

This is where most bridge designs feel disconnected from reality.

Because real financial systems are built around limits.

Not as constraints to slow things down.

But as safeguards to prevent systemic failure.

Daily caps. Exposure thresholds. Rate controls.

These aren’t optional.

They are what keep systems from collapsing under stress.

But in crypto, limits are often treated as friction.

Something to minimize.

Remove limits → increase flow → improve UX.

That works… until something breaks.

And then the lack of limits becomes the reason everything breaks at once.

SIGN treats limits differently.

It doesn’t push them outside the system.

It embeds them into the logic of what must be proven.

A transaction doesn’t just say:

“move this amount”

It can carry a claim like:

“this transfer is within allowed limits under this policy”

And again, that’s not a statement you trust.

It’s a statement you verify.

Because the schema defines what “limits” mean. The issuer defines who is authorized to assert compliance. The verification path ensures it can be checked independently.

That changes how limits operate.

They are no longer enforced after the fact.

They are part of the acceptance condition.

If the claim doesn’t satisfy the limit condition…

the system doesn’t accept it.

No manual intervention required.

No off-chain monitoring needed.

That’s a very different level of safety.

Because it prevents invalid states from ever being accepted…

instead of trying to fix them later.
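A small Python sketch of limits as an acceptance condition might look like this. The `LimitPolicy` and `LimitedAcceptor` names, the two caps, and the numbers are all hypothetical. They are my own illustration of the idea, not how SIGN defines or checks a policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LimitPolicy:
    per_transfer_cap: int   # largest single transfer allowed
    daily_cap: int          # total allowed per day


class LimitedAcceptor:
    """Accepts a transfer only if it fits the policy (hypothetical model)."""

    def __init__(self, policy: LimitPolicy) -> None:
        self.policy = policy
        self.accepted_today = 0

    def accept(self, amount: int) -> bool:
        if amount <= 0 or amount > self.policy.per_transfer_cap:
            return False
        if self.accepted_today + amount > self.policy.daily_cap:
            return False
        # Only now does the transfer become part of accepted state.
        self.accepted_today += amount
        return True


acceptor = LimitedAcceptor(LimitPolicy(per_transfer_cap=100, daily_cap=250))
for amount in (100, 100, 100, 50):
    print(amount, acceptor.accept(amount))
```

The third transfer is rejected because it would push the daily total past the cap. It never enters accepted state, so there is nothing to unwind.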

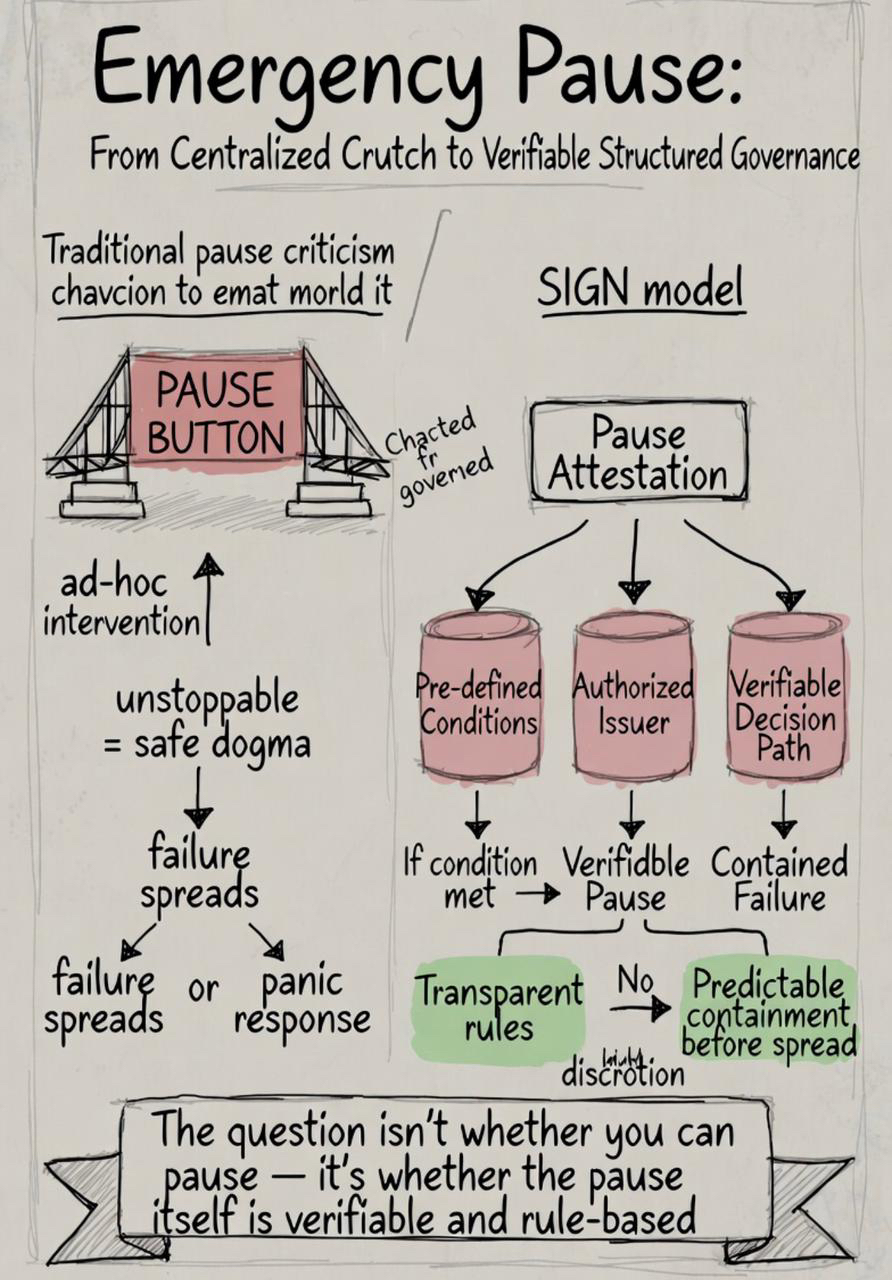

Now let’s talk about the part most people avoid:

emergency pause.

In crypto culture, this is often seen as a flaw.

“If you can pause it, it’s centralized.”

“If you can stop it, it’s not trustless.”

But that perspective only holds if you assume systems never fail.

In reality, every complex system needs a way to contain failure.

Because failure is not theoretical.

It’s inevitable.

The real question isn’t:

“can the system be paused?”

It’s:

under what conditions, by whom, and how is that decision verified?

SIGN doesn’t ignore this.

It doesn’t pretend that unstoppable systems are always safer.

Instead, it makes governance itself part of the system’s verifiable logic.

Pause conditions can be defined.

Not as arbitrary admin actions.

But as structured rules:

if this condition is met

and this authority is verified

then this action is allowed

Again, expressed through attestations.

Again, tied to schema, issuer, and verification.

That means intervention is not hidden.

It’s not discretionary in the moment.

It is pre-defined, transparent, and verifiable.

That’s a very different model of control.

Not centralized.

Not chaotic.

But structured governance embedded into the system.

And that matters when things go wrong.

Because when systems fail, speed doesn’t matter.

Decentralization slogans don’t matter.

What matters is:

can you contain the failure before it spreads?

Most bridges don’t have a strong answer to that.

They either:

continue operating and amplify the issue

or rely on ad-hoc intervention

SIGN gives a third path.

Define intervention rules in advance.

Make them verifiable.

And enforce them when conditions are met.

That’s how real systems survive stress.
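Here is one way a pre-defined pause rule could be sketched in Python. The `PauseRule` and `PauseRequest` classes, the outflow threshold, and the guardian names are assumptions I invented for illustration. A real system would also verify the request cryptographically, which this sketch reduces to a simple membership check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PauseRule:
    max_outflow_per_hour: int          # the condition, fixed in advance
    authorized_guardians: frozenset    # the authorities allowed to invoke it


@dataclass(frozen=True)
class PauseRequest:
    guardian: str
    observed_outflow: int


def pause_allowed(rule: PauseRule, request: PauseRequest) -> bool:
    # if this condition is met
    condition_met = request.observed_outflow > rule.max_outflow_per_hour
    # and this authority is verified (simplified to a membership check here)
    authority_verified = request.guardian in rule.authorized_guardians
    # then this action is allowed
    return condition_met and authority_verified


rule = PauseRule(max_outflow_per_hour=1_000,
                 authorized_guardians=frozenset({"guardian-a"}))
print(pause_allowed(rule, PauseRequest("guardian-a", observed_outflow=5_000)))
print(pause_allowed(rule, PauseRequest("guardian-a", observed_outflow=200)))
```

An authorized guardian alone is not enough, and neither is the condition alone. Both have to hold, and both are written down before anything goes wrong.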

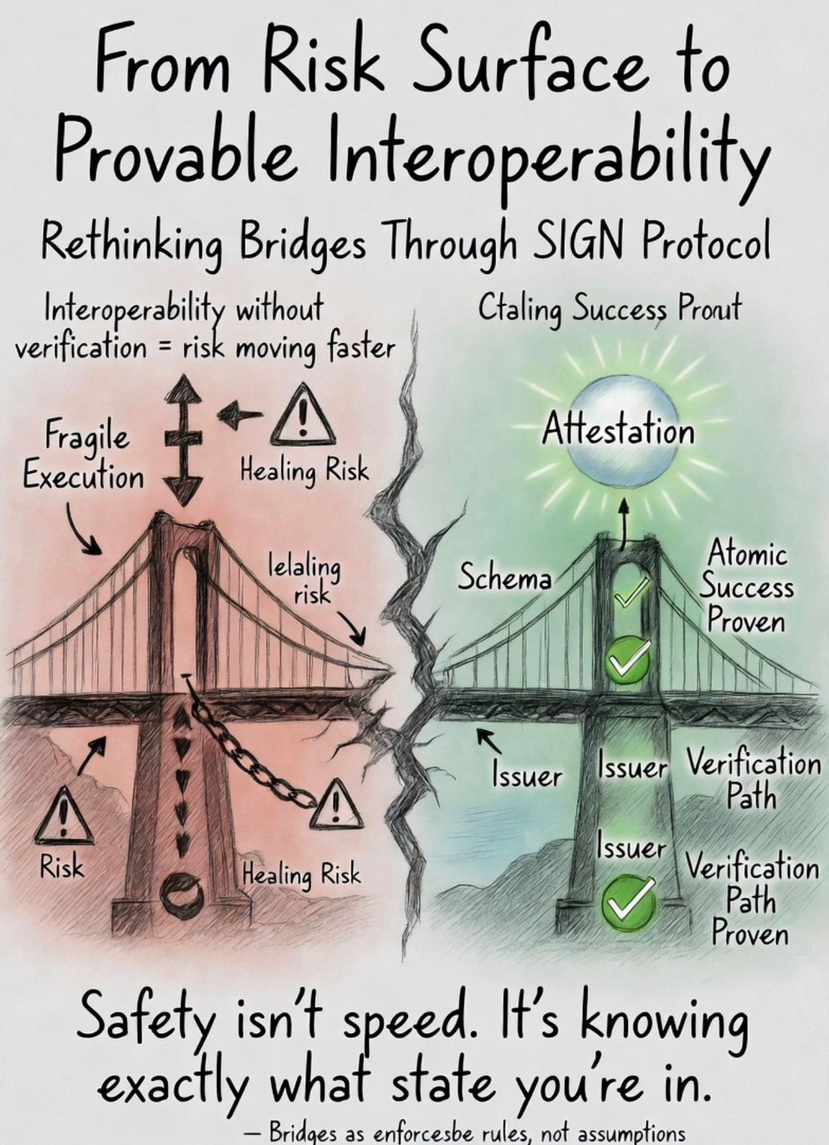

When you step back, a pattern starts to emerge.

Atomicity, limits, and pause are not separate concerns.

They are different expressions of the same idea:

a system must define what is acceptable, enforce it, and handle failure predictably.

Safety isn’t speed. It’s knowing exactly what state you’re in.

And that’s exactly where most bridges are weakest.

They focus on movement.

Not on rules.

But interoperability without rules is fragile.

Because it assumes systems will behave correctly under all conditions.

And that assumption doesn’t hold in the real world.

SIGN shifts the focus.

From movement → to verifiable agreement.

From execution → to provable conditions.

From trust → to structured verification.

That’s why it feels different.

Not because it makes bridges faster.

But because it makes them more accountable to rules that can be checked.

There’s a line that stayed with me while thinking about this:

Interoperability without verification is just risk moving faster.

And once you see that, you can’t unsee it.

Every bridge becomes a question of:

what is being trusted here?

what is being proven?

SIGN answers that differently.

It doesn’t ask you to trust the bridge.

It asks you to verify the claim.

That’s a subtle shift.

But it changes everything.

Because once systems stop inheriting trust…

and start verifying meaning…

interoperability becomes something you can actually rely on.

Not because nothing will ever go wrong.

But because when it does…

the system knows exactly what rules apply.

And that’s what separates a system that works…

from one that survives.