I keep catching myself stuck on a small, almost annoying question: when a system claims to give users control over their identity, does it actually simplify their responsibility… or quietly multiply it?

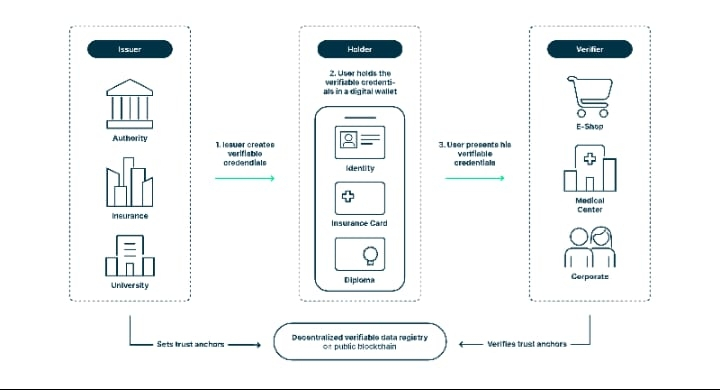

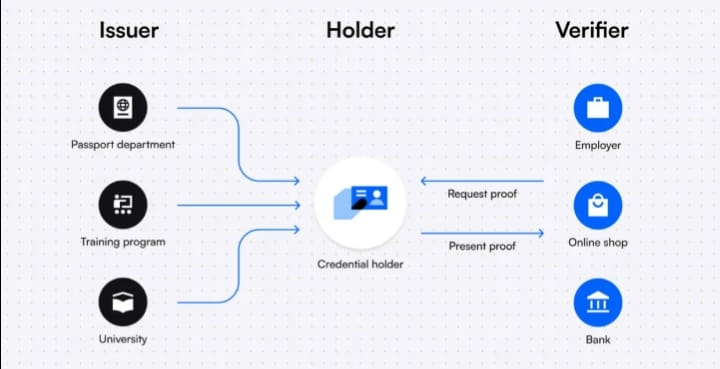

With Sign, the promise feels clean: credentials are issued, verified, and then carried by the user across different platforms, with no constant re-checking and no repeated friction. And honestly, I get why that's appealing. Nobody enjoys proving the same thing ten times. But then I start thinking about what "carrying your own credentials" really means in practice. Control sounds empowering until something goes wrong.

Because the architecture assumes a certain kind of behavior. Users manage access carefully. Validators behave consistently. Issuers maintain credibility over time. That part makes sense to me, at least structurally. But systems like this don’t live in clean environments—they live in messy ones, where people forget, rush, misunderstand, or simply don’t care enough to manage things perfectly.

And that’s where I start circling again.

A user exposes more than intended.

A credential gets reused in a context it wasn’t designed for.

A platform trusts a proof without questioning its relevance.

Nothing breaks instantly. But the edges start to blur.

I also keep thinking about the balance between privacy and usability. Sign leans into selective disclosure, which sounds ideal: share only what's necessary and keep everything else private. But selective disclosure isn't just a feature; it's a responsibility. And I'm not entirely convinced most users will treat it that way. Sometimes convenience wins over caution.

Then there’s the institutional side, which feels like a different tension entirely. Systems like Sign aim to reduce reliance on centralized authorities, but institutions don’t disappear—they adapt. They might accept the infrastructure, but still layer their own rules, their own interpretations, their own thresholds of trust on top. So instead of one system, you get overlapping ones.

And honestly, I get why that happens. Institutions are slow to trust external frameworks. But it creates this quiet inconsistency where the same credential doesn’t carry the same weight everywhere.

I keep looping back to incentives too. Validators are expected to maintain correctness, the network aligns their behavior through $SIGN, and the system tries to remain neutral. But neutrality is fragile. Over time, influence shifts, subtly and gradually. Not enough to break the system, but enough to shape it.

A validator prioritizes efficiency over precision.

A governance decision tilts standards slightly.

A dominant participant influences interpretation without saying so directly.

These aren’t failures. They’re adjustments. But adjustments accumulate.

So I’m left in this space where the design feels thoughtful, even careful, but the real question isn’t about how it works in theory. It’s about how it behaves when stretched—across different platforms, different users, different expectations.

Because Sign doesn’t just verify identity. It reshapes how identity moves, how it’s trusted, how it’s reused. And that shift, as subtle as it seems, carries weight.

I’m not dismissing it. But I’m not fully convinced either. I keep watching that gap—between control and responsibility, between structure and behavior—because that’s usually where systems like this reveal what they really are.