@SignOfficial #SignDigitalSovereignInfra $SIGN

Sign doesn’t feel like a system of shared knowledge—it feels more like a system of coordinated ignorance.

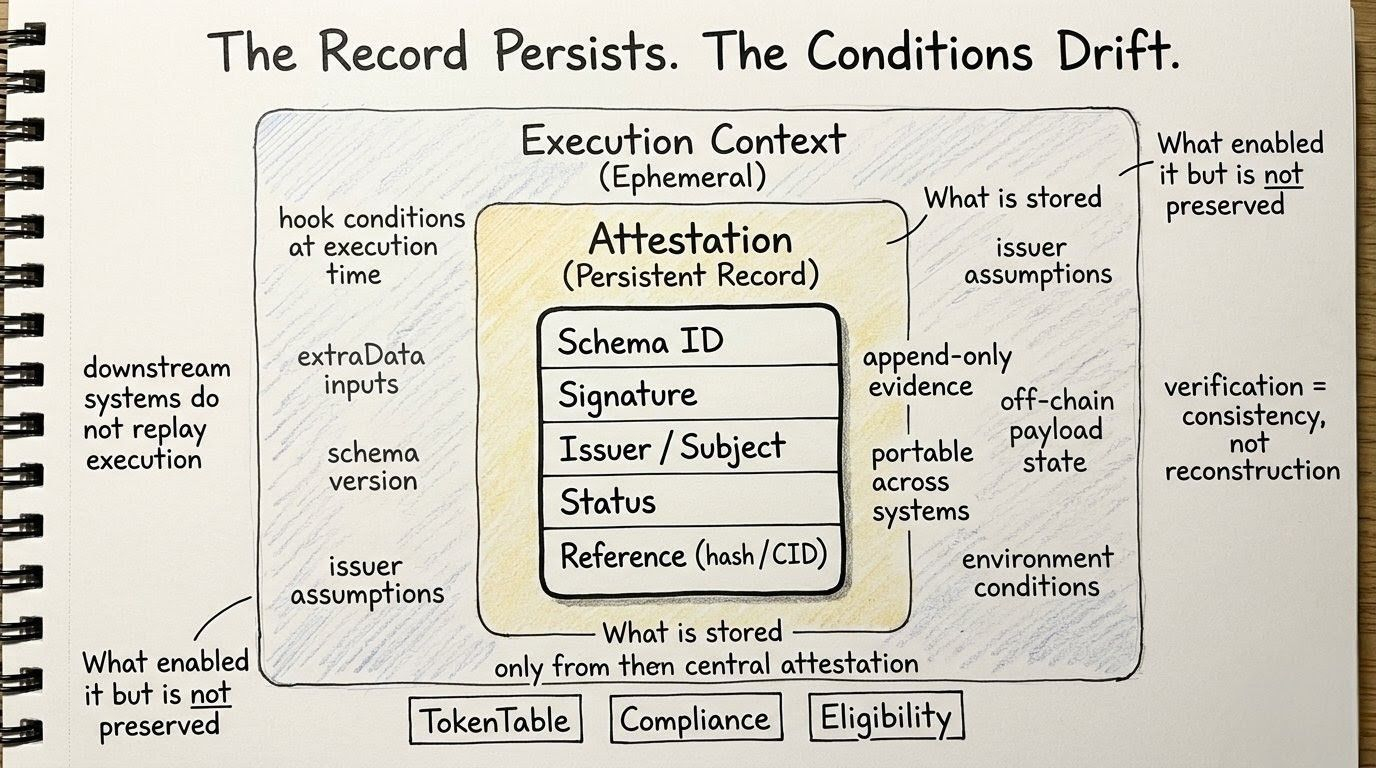

What’s unsettling is that it appears most reliable at the exact moment it understands the least. When I look at a clean attestation—structured, signed, timestamped, neatly tied to a schema—it gives off an illusion of completeness. But the more polished it looks, the more I wonder what had to be stripped away to make it that clean.

Because the full story never enters the system.

Before Sign is even involved, decisions have already been made somewhere else—inside institutions, compliance processes, or human judgment calls. That earlier layer is messy: documents, edge cases, exceptions, politics, hesitation. By the time a claim reaches Sign, it’s no longer raw reality. It’s already been compressed into something simpler.

And then it gets reduced again.

Sign doesn’t first ask whether something is true—it asks whether it can be expressed. If a claim doesn’t fit the schema—its structure, types, or format—it doesn’t get debated or rejected. It simply never becomes legible to the system. It vanishes before it can even exist inside the protocol.
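To make that concrete for myself, here is a minimal sketch of what "legibility" might look like. Everything in it is an assumption for illustration: `KYC_SCHEMA`, `to_legible`, and the field names are hypothetical and are not Sign’s actual schema format or API. The point is only that a claim which doesn’t fit produces nothing at all, not an error record.

```python
from typing import Any, Optional

# Hypothetical schema: field name -> required Python type.
# Illustrative only; this is not Sign's real schema format.
KYC_SCHEMA = {"subject": str, "passed": bool, "checked_at": int}


def to_legible(claim: dict[str, Any], schema: dict[str, type]) -> Optional[dict[str, Any]]:
    """Return a copy of the claim if it fits the schema exactly, otherwise None."""
    # The claim must carry exactly the fields the schema names.
    if set(claim) != set(schema):
        return None
    for field_name, expected_type in schema.items():
        # type() rather than isinstance(), so a bool is not accepted where an int is required.
        if type(claim[field_name]) is not expected_type:
            return None
    return dict(claim)


clean = {"subject": "0xabc", "passed": True, "checked_at": 1700000000}
messy = {"subject": "0xabc", "passed": "mostly, pending one document", "checked_at": 1700000000}

legible_clean = to_legible(clean, KYC_SCHEMA)  # survives unchanged
legible_messy = to_legible(messy, KYC_SCHEMA)  # None: the nuance has no place to live
```

The "mostly, pending one document" answer is arguably the more honest one, yet it is the one that never becomes expressible.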

That’s a deeper kind of blindness: not denial, just absence.

Even once something becomes legible, it still isn’t guaranteed to survive. Schema hooks, permission checks, thresholds, and runtime conditions determine whether it actually becomes an attestation. If it fails at this stage, no attestation is created, and from the protocol’s point of view the claim is forgotten entirely. There’s no visible record of failure; only the things that passed remain.
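A toy model of that second filter, under stated assumptions: `Registry`, `min_score_hook`, and the threshold of 70 are all hypothetical names and values I made up for this sketch, not how Sign implements hooks. What it shows is a store that only ever writes on success.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Registry:
    """Toy attestation store: it only records claims that pass the hook."""
    hook: Callable[[dict], bool]
    attestations: list = field(default_factory=list)

    def attest(self, claim: dict) -> bool:
        if not self.hook(claim):
            # Nothing is written on failure: no rejection entry, no log line.
            return False
        self.attestations.append(claim)
        return True


def min_score_hook(claim: dict) -> bool:
    # Hypothetical threshold condition, chosen only for illustration.
    return claim.get("score", 0) >= 70


registry = Registry(hook=min_score_hook)
alice_ok = registry.attest({"subject": "alice", "score": 91})
bob_ok = registry.attest({"subject": "bob", "score": 42})
```

In this toy version, anyone reading `registry.attestations` afterwards sees Alice and has no way to learn that Bob ever applied.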

So what we end up with is not a full record of reality, but a filtered residue.

Schema defines what can be said. Hooks decide what can remain. Attestations are what survive both filters. And downstream systems—explorers, apps, APIs—only ever interact with these survivors.

This creates an illusion of certainty.

Users tend to trust the system most at the endpoint, when the attestation looks final and complete. But that “certainty” is really just compression. It’s the result of everything unnecessary—or incompatible—being removed along the way.

And sometimes even that remaining data isn’t fully present. Parts may live off-chain, referenced through hashes or CIDs. So the attestation isn’t the full evidence—it’s a pointer, a fragment, or an anchor to something larger.
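Here is a small sketch of what "anchor to something larger" can mean in practice. It assumes a plain SHA-256 digest over canonical JSON as a stand-in for a real CID, and the names `anchor`, `matches`, and `evidence_hash` are hypothetical. The attestation holds only the digest; the evidence itself lives elsewhere.

```python
import hashlib
import json


def anchor(document: dict) -> str:
    """Return the SHA-256 hex digest of a canonical JSON encoding of the document."""
    canonical = json.dumps(document, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()


def matches(document: dict, digest: str) -> bool:
    """Check whether an off-chain document is the one the digest points to."""
    return anchor(document) == digest


# The full evidence stays off-chain (illustrative fields only).
evidence = {"subject": "0xabc", "documents": ["passport", "utility bill"], "notes": "one exception granted"}

# The attestation carries a pointer to the evidence, not the evidence itself.
attestation = {"schema": "kyc-v1", "evidence_hash": anchor(evidence)}

# Any change to the evidence, however small, no longer matches the anchor.
tampered = dict(evidence, notes="no exceptions")
```

The digest can prove that a given document is the one that was anchored. It cannot tell you anything about the document if nobody hands it to you.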

Which raises the question: what are we actually trusting?

The full story?

The usable fragment?

Or just the fact that something verifiable exists somewhere?

This becomes even more apparent when looking at retrieval layers like explorers. What they show isn’t everything that happened—it’s only what survived and was indexed. The system’s memory is narrower than its actual execution. Success is visible. Failure leaves no trace.

And that silence matters.

Because downstream applications don’t care about the full history. They just need a valid attestation to proceed—whether for access, distribution, or eligibility. Efficiency depends on ignoring everything that didn’t make it through.

But for anything that failed to become legible or acceptable, there’s nothing to reference. No artifact. No record. Nothing to challenge.

Looking across the stack, each layer knows less than the one before it—and that’s by design. The human layer holds context. The schema layer understands structure. The hook layer enforces conditions. The attestation reflects only what survived. Retrieval layers show only what can be indexed. Applications consume only what they need to execute.

No layer holds the full picture.

This isn’t shared knowledge—it’s selective awareness.

And that selectivity is what makes the system work. If every layer demanded full context, it would collapse under complexity. Instead, each layer is optimized to know just enough: enough to parse, validate, verify, store, and act.

“Enough” becomes the operating principle.

This becomes even clearer across chains. When attestations move between environments, they don’t carry the full original decision. They carry a verification result—something that other systems agree is sufficient. The receiving side doesn’t know what happened. It accepts that the right checks were performed.
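A rough sketch of that handoff, with heavy assumptions: I use an HMAC with a shared demo key as a stand-in for whatever signature or proof scheme a real cross-chain verifier would use, and `sign_result`, `accept`, and the result fields are hypothetical names. The receiving side checks that the result is authentic and never sees why the original decision was made.

```python
import hashlib
import hmac
import json

# Hypothetical shared key for the demo; a real system would use a proper signature scheme.
VERIFIER_KEY = b"demo-key-not-a-real-secret"


def sign_result(result: dict, key: bytes) -> str:
    """Source side: produce an authentication tag over the verification result."""
    payload = json.dumps(result, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def accept(result: dict, tag: str, key: bytes) -> bool:
    """Receiving side: check the tag. The original decision is never consulted."""
    return hmac.compare_digest(sign_result(result, key), tag)


# Only the outcome crosses over, not the documents or reasoning behind it.
result = {"attestation_id": "0x01", "source_chain": "chain-a", "valid": True}
tag = sign_result(result, VERIFIER_KEY)

# A modified result presented with the old tag is rejected.
forged = dict(result, valid=False)
```

Notice what `accept` takes as input: a result and a tag. There is no parameter through which the context could arrive even if someone wanted to send it.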

So cross-chain isn’t about transferring complete truth. It’s about transferring acceptable fragments of it.

And maybe that’s the real strength of Sign.

Not that it preserves everything—but that it carefully decides what to keep, what to discard, and what future systems are willing to accept without asking further questions.

It’s efficient. It’s scalable. It works.

But it’s also a little unsettling.

Because if no single layer ever holds the full story, then what we call an “attestation” isn’t a complete truth. It’s a chain of filtered fragments—each one shaped by what the system was capable of seeing, and willing to keep.

And somehow, that ends up being enough.