I keep coming back to this slightly uncomfortable loop in my head: automation makes systems efficient, but it also makes them quieter… and quiet systems are harder to question.

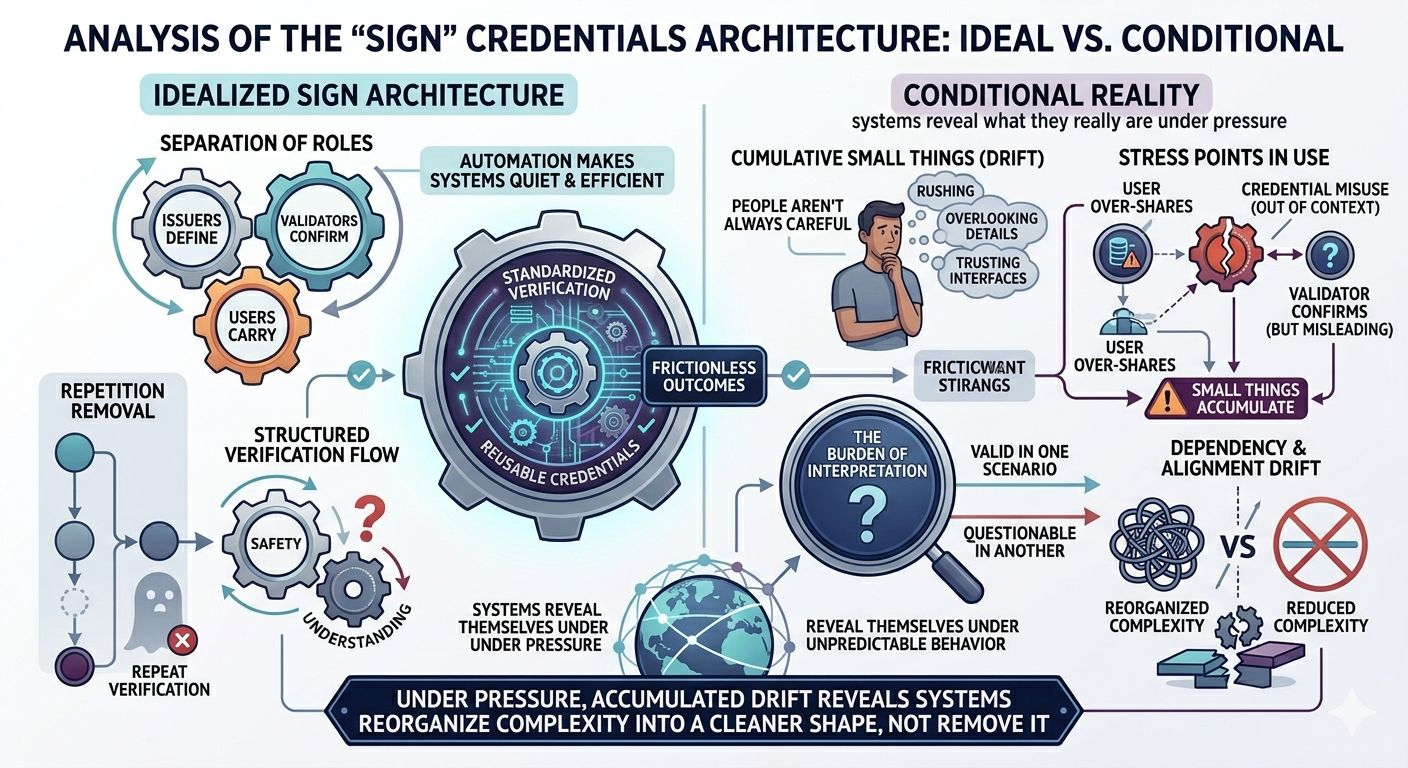

With Sign, the idea is almost deceptively simple—credentials get issued, validated, and then reused across different environments without repeating the same verification steps. It’s structured, predictable, almost frictionless. And honestly, I get why that’s appealing. Repetition is wasteful. But I can’t shake the feeling that repetition, as inefficient as it is, sometimes acts like a second layer of doubt. Remove it, and you remove that hesitation too.

The architecture leans heavily on separation of roles—issuers define, validators confirm, users carry. That part makes sense to me. It distributes responsibility instead of concentrating it. But distribution doesn’t automatically mean balance. It just means responsibility is spread out, sometimes thin enough that no one fully owns the outcome when something goes wrong.
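To make the three roles concrete for myself, I sketched them in a few lines of Python. This is only a toy under loud assumptions: every name here (`Credential`, `issue`, `validate`, the demo key) is hypothetical and not Sign's actual API, and an HMAC with a shared key stands in for whatever real signature scheme the system uses.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass

# Hypothetical names throughout; this is not Sign's actual API.
# HMAC with a shared key is a stand-in for a real digital signature.


@dataclass(frozen=True)
class Credential:
    subject: str
    claim: str
    issuer: str
    tag: str  # issuer's authentication tag over the three fields above


def _payload(subject: str, claim: str, issuer: str) -> bytes:
    # Serialize the fields in a fixed order so issuer and validator agree.
    return json.dumps([subject, claim, issuer]).encode()


def issue(issuer: str, key: bytes, subject: str, claim: str) -> Credential:
    """Issuer role: define the claim and authenticate it once."""
    tag = hmac.new(key, _payload(subject, claim, issuer), hashlib.sha256).hexdigest()
    return Credential(subject, claim, issuer, tag)


def validate(cred: Credential, key: bytes) -> bool:
    """Validator role: confirm the tag matches the fields, and nothing more."""
    expected = hmac.new(
        key, _payload(cred.subject, cred.claim, cred.issuer), hashlib.sha256
    ).hexdigest()
    return hmac.compare_digest(expected, cred.tag)


key = b"demo-issuer-key"
cred = issue("university", key, "alice", "degree:bsc")

# User role: carry the same credential into two unrelated environments.
results = {env: validate(cred, key) for env in ("job-portal", "loan-app")}
```

What strikes me in the sketch is that `validate` never learns which environment is asking. Each function does its narrow job correctly, and whether the reuse was appropriate is a question none of them is responsible for.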

And I keep circling back to privacy. The system assumes users will manage their credentials, reveal only what’s necessary, control access carefully. That sounds ideal. But in practice, people don’t always behave carefully. They rush, they overlook details, they trust interfaces more than they should.

A user shares more data than intended.

A credential gets reused in a context it wasn’t designed for.

A validator confirms something that is technically correct, but contextually misleading.

Small things. But they accumulate.

Then there’s this deeper tension between efficiency and interpretation. Sign tries to standardize verification, but verification isn’t always objective. Context matters. A credential might be valid in one scenario and questionable in another. The system doesn’t eliminate that—it just moves the burden somewhere else, often onto the edges where interpretation happens.
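Here is a small sketch of what I mean by valid in one scenario and questionable in another. It assumes a made-up `intended_contexts` field that records where the issuer meant the credential to be used; I have no idea whether Sign models anything like this, so treat the field and both function names as illustration only.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Credential:
    claim: str
    expires: date
    # Hypothetical field: contexts the issuer had in mind for this claim.
    intended_contexts: frozenset


def technically_valid(cred: Credential, today: date) -> bool:
    """The standardized check: has the credential expired?"""
    return today <= cred.expires


def acceptable_in(cred: Credential, context: str, today: date) -> bool:
    """The interpretive check: valid, and also meant for this context."""
    return technically_valid(cred, today) and context in cred.intended_contexts


cred = Credential("age_over_18", date(2030, 1, 1), frozenset({"age-gate"}))
today = date(2025, 6, 1)

passes_standard_check = technically_valid(cred, today)
fits_age_gate = acceptable_in(cred, "age-gate", today)
fits_loan_approval = acceptable_in(cred, "loan-approval", today)
```

The first check passes no matter who is asking. The second only passes where the claim was meant to apply, and someone at the edge has to decide what belongs in that set.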

I also wonder how dependency forms over time. If multiple platforms begin to rely on the same verification layer, consistency becomes critical: not just technical consistency, but social and institutional alignment. And that’s where things tend to drift. Quietly.

To be fair, I understand why the system doesn’t try to solve everything. It focuses on structure, on making verification reusable and portable. That restraint is intentional. But it also means the harder problems (trust, interpretation, behavior) don’t disappear. They just sit outside the system, waiting to interact with it.

I keep looping back to the same question: does Sign reduce complexity, or does it just reorganize it into a cleaner shape?

Because from a distance, it feels efficient. Up close, it feels conditional.

So I’m left watching how it behaves under pressure—not whether it works in theory, but how it holds when users act unpredictably, when platforms diverge slightly, when small inconsistencies start stacking instead of resolving.

That’s usually where systems reveal what they really are.