Most tokenization stacks start in the wrong place. They obsess over the asset. That’s not where things break. The failure mode shows up later, in execution under constraint: when latency creeps in, when policy is hard-coded in three different systems, when nobody agrees on the “current” state. Representation was never the problem. Coordination was.

S.I.N.G flips it. Cleanly.

Distribution is not a byproduct; it’s the output. Deterministic. Derived from a fully attested state machine. No soft guarantees hiding behind dashboards. If it can’t be proven, it doesn’t exist.

This isn’t about “putting assets on-chain.” That phrase already lost the plot.

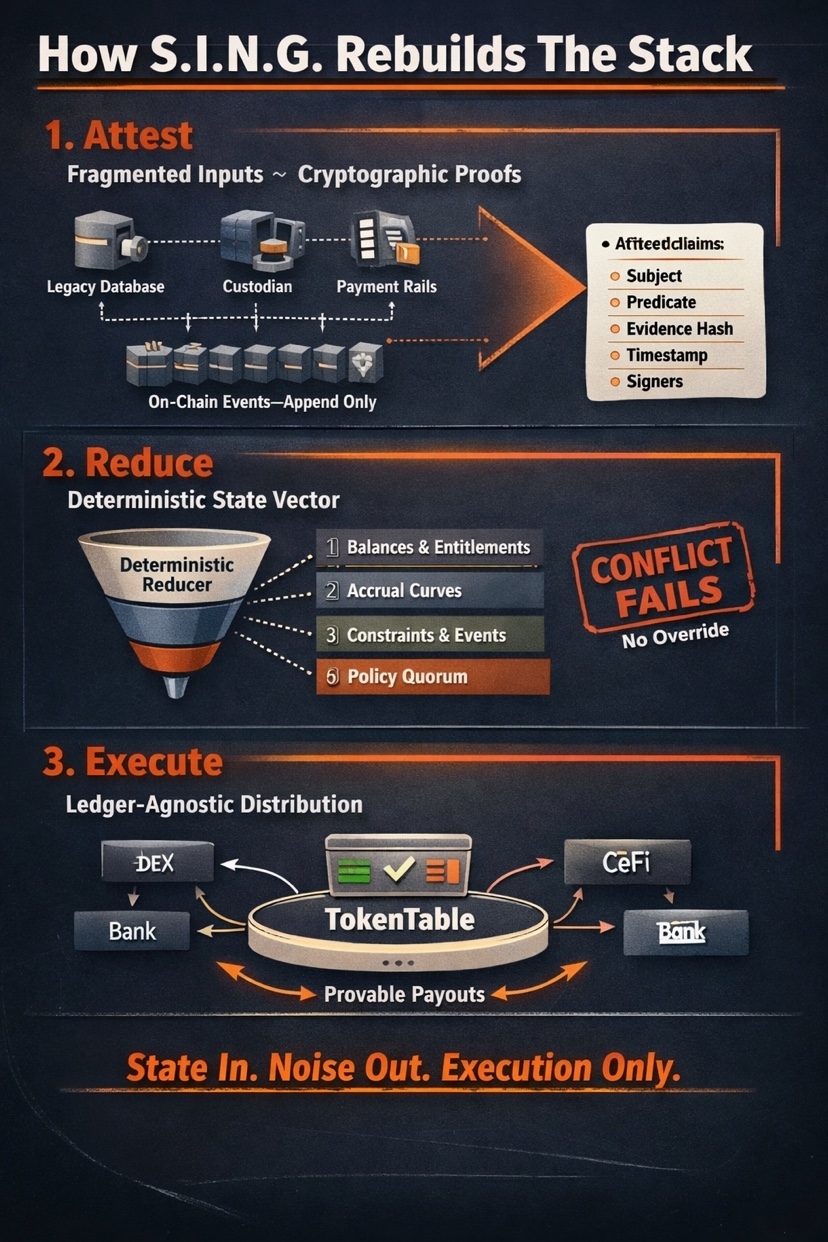

This is about constructing a canonical state from hostile inputs (fragmented registries, asynchronous payment rails, stale custodial snapshots) and forcing them through a reducer that doesn’t care about your narrative, only your proofs.

TokenTable? It sits at the edge. Quiet.

No opinions. No governance theater. It executes whatever the state resolves to.

Start with the mess. Because that’s the reality.

Multiple data sources, each with its own schema, its own clock, its own version of truth. One system says a position settled. Another lags by hours. A third encodes eligibility rules in some opaque middleware nobody wants to touch. Classic state-bloat, just smeared across organizations instead of chains.

S.I.N.G doesn’t “integrate” these systems. It strips them down to something harsher.

Attestations.

A single primitive.

A signed, schema-bound claim about a fact at time t.

Not a report. Not an API response.

A payload you can verify without calling anyone back.

It carries structure: subject (instrument, tranche, account), predicate (ownership, accrual, covenant status), evidence hash (commitment to whatever lives off-chain), timestamp, and a signer set mapped to roles: not brands, not logos, just keys with defined authority surfaces.

That last part matters. A lot.

Because now you’re not trusting institutions. You’re validating signatures against policy. Ledger-agnostic, by the way: this doesn’t care where the data originated, only that it resolves cleanly under verification.
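Here is a minimal Python sketch of that primitive. Treat every name in it as an assumption for illustration, not S.I.N.G's actual schema: the `Attestation` fields mirror the list above, `ROLE_KEYS` and `POLICY` are made-up tables, and HMAC stands in for real signatures only because it ships with the standard library. With HMAC the verifier has to hold the secret; an asymmetric scheme would remove that.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, replace

# Hypothetical role keys. HMAC is a stdlib stand-in for real signatures;
# a production system would use asymmetric keys instead.
ROLE_KEYS = {
    "registrar": b"registrar-demo-key",
    "custodian": b"custodian-demo-key",
}

# Hypothetical policy: which roles have authority over which predicate.
POLICY = {
    "ownership": {"registrar"},
    "accrual": {"custodian"},
}


@dataclass(frozen=True)
class Attestation:
    subject: str            # instrument, tranche, or account
    predicate: str          # ownership, accrual, covenant status, ...
    value: str              # the claimed fact
    evidence_hash: str      # commitment to evidence that lives off-chain
    timestamp: int          # time t of the claim, unix seconds
    signatures: tuple = ()  # ((role, hex_mac), ...)

    def payload(self) -> bytes:
        """Canonical bytes every signer commits to (signatures excluded)."""
        body = {
            "subject": self.subject,
            "predicate": self.predicate,
            "value": self.value,
            "evidence_hash": self.evidence_hash,
            "timestamp": self.timestamp,
        }
        return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def sign(role: str, payload: bytes) -> str:
    return hmac.new(ROLE_KEYS[role], payload, hashlib.sha256).hexdigest()


def attest(subject, predicate, value, evidence: bytes, timestamp, roles):
    """Build an attestation and sign it with each listed role key."""
    unsigned = Attestation(subject, predicate, value,
                           hashlib.sha256(evidence).hexdigest(), timestamp)
    sigs = tuple((role, sign(role, unsigned.payload())) for role in roles)
    return replace(unsigned, signatures=sigs)


def verify(att: Attestation) -> bool:
    """Valid only if every signer is authorized for the predicate and
    every signature matches the payload. No call back to the issuer."""
    allowed = POLICY.get(att.predicate, set())
    if not att.signatures:
        return False
    for role, mac in att.signatures:
        if role not in allowed or role not in ROLE_KEYS:
            return False
        if not hmac.compare_digest(mac, sign(role, att.payload())):
            return False
    return True
```

An ownership claim signed by the registrar passes. The same claim signed by the custodian fails, because that key has no authority over `ownership`. Change one field after signing and it fails too.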

These attestations get written as events. Append-only. No edits.

You don’t “update” state here. You emit facts and let the system deal with it.

Short rule. Brutal rule.

No attestation, no state transition.
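One way to picture "append-only, no edits" is a hash-chained log. This is a sketch under assumptions: the source doesn't say how events are stored, and `AppendOnlyLog` and the chaining scheme are illustrative choices. Each entry commits to the one before it, so a quiet edit anywhere breaks the chain.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the entry before the first one


class AppendOnlyLog:
    """Hash-chained event log: entries are appended, never edited."""

    def __init__(self):
        self._entries = []  # list of (prev_hash, event_json, entry_hash)

    def append(self, event: dict) -> str:
        prev = self._entries[-1][2] if self._entries else GENESIS
        body = json.dumps(event, sort_keys=True)
        entry_hash = hashlib.sha256((prev + body).encode()).hexdigest()
        self._entries.append((prev, body, entry_hash))
        return entry_hash

    def events(self) -> list:
        """Decoded events in the order they were emitted."""
        return [json.loads(body) for _, body, _ in self._entries]

    def verify_chain(self) -> bool:
        """Recompute every link; any edited or reordered entry fails."""
        prev = GENESIS
        for stored_prev, body, entry_hash in self._entries:
            if stored_prev != prev:
                return False
            if hashlib.sha256((prev + body).encode()).hexdigest() != entry_hash:
                return False
            prev = entry_hash
        return True
```

A correction is never an overwrite. It's a new event appended after the one it corrects, and the reducer decides what it means.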

But raw attestations are noise without structure.

You don’t get a usable system by stacking signed messages and hoping something coherent falls out. You need a reducer. Deterministic. Unforgiving.

Think of it like this: every new attestation is just input. The reducer folds it into a state vector, or it rejects it. No middle ground.

That state vector isn’t vague. It’s explicit:

Balances and entitlements: who gets paid, how much, when.

Accrual curves: time-indexed, not some end-of-period guess.

Constraint surfaces: jurisdiction rules, mandate limits, eligibility gates.

Event flags: coupon triggers, revenue checkpoints, breach conditions.

All derived. Nothing manually set.
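The fold-or-reject idea fits in a few lines of Python. This is a sketch, not the actual reducer: the `State` fields, the three predicates, and the rejection rules are all assumptions picked to keep the example small. Attestations are plain dicts here, and `verified` stands for the outcome of signature and policy checks done upstream.

```python
from dataclasses import dataclass, field, replace


@dataclass(frozen=True)
class State:
    balances: dict = field(default_factory=dict)  # account -> units held
    accrued: dict = field(default_factory=dict)   # account -> accrued amount
    flags: frozenset = frozenset()                # raised event flags
    last_ts: int = 0                              # timestamp of last accepted input


class Rejected(Exception):
    """Raised when an attestation cannot be folded into the state."""


def apply(state: State, att: dict) -> State:
    """Fold one attestation into the state, or raise Rejected."""
    if not att.get("verified"):
        raise Rejected("unverified attestation")
    if att["timestamp"] < state.last_ts:
        raise Rejected("timestamp precedes current state")

    pred, subj, val, ts = att["predicate"], att["subject"], att["value"], att["timestamp"]
    if pred == "ownership":
        return replace(state, balances={**state.balances, subj: val}, last_ts=ts)
    if pred == "accrual":
        total = state.accrued.get(subj, 0) + val
        return replace(state, accrued={**state.accrued, subj: total}, last_ts=ts)
    if pred == "coupon_trigger":
        return replace(state, flags=state.flags | {subj}, last_ts=ts)
    raise Rejected(f"unknown predicate: {pred}")


def fold(attestations):
    """Run the reducer over a log. Returns (state, rejected inputs)."""
    state, rejected = State(), []
    for att in attestations:
        try:
            state = apply(state, att)
        except Rejected as err:
            rejected.append((att, str(err)))
    return state, rejected
```

Same log in, same state out. Nothing in `State` is ever assigned by hand; each field only changes as the result of an accepted attestation.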

And here’s where most systems fall apart: they try to “resolve” conflicts socially. Emails. Reconciliation calls. Manual overrides buried in back offices.

S.I.N.G doesn’t play that game.

Conflicting attestations don’t get massaged into alignment. They fail. Hard stop. Unless a valid policy quorum (predefined, on-chain) submits a superseding attestation set that satisfies the rules.

No quorum? No state update.

No state update? No downstream execution.
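A rough sketch of that rule in Python, with the policy invented for the example: `QUORUM_ROLES`, the threshold of 2, and the `supersedes` marker are assumptions, not the real quorum definition. Conflicting claims about the same subject and predicate stay blocked until enough distinct authorized roles back one superseding value.

```python
from collections import defaultdict

# Hypothetical policy: a superseding set needs at least QUORUM distinct
# roles drawn from QUORUM_ROLES.
QUORUM_ROLES = {"registrar", "custodian", "trustee"}
QUORUM = 2


def claim(subject, predicate, value, signers, supersedes=False):
    """Build an attestation record (signatures assumed verified upstream)."""
    return {"subject": subject, "predicate": predicate, "value": value,
            "signers": list(signers), "supersedes": supersedes}


def resolve(attestations):
    """Return (resolved, blocked).

    resolved maps (subject, predicate) -> value. blocked lists keys that
    are in conflict and have no superseding set that reaches quorum.
    """
    by_key = defaultdict(list)
    for att in attestations:
        by_key[(att["subject"], att["predicate"])].append(att)

    resolved, blocked = {}, []
    for key, group in by_key.items():
        claims = {a["value"] for a in group if not a["supersedes"]}

        # Collect the distinct authorized roles behind each superseding value.
        backers = defaultdict(set)
        for a in group:
            if a["supersedes"]:
                backers[a["value"]] |= set(a["signers"]) & QUORUM_ROLES
        winners = [v for v, roles in backers.items() if len(roles) >= QUORUM]

        if len(winners) == 1:
            resolved[key] = winners[0]            # quorum overrides the conflict
        elif len(claims) == 1 and not winners:
            resolved[key] = next(iter(claims))    # no conflict to begin with
        else:
            blocked.append(key)                   # hard stop, nothing downstream
    return resolved, blocked
```

Two sources disagreeing on one account blocks that key and only that key. Add superseding attestations from two authorized roles for the same value and it resolves. One role alone changes nothing.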

It’s rigid by design. Because ambiguity is where capital gets stuck.

Now zoom out.

What you actually have here isn’t a tokenization pipeline. It’s a state machine with strict inputs and deterministic outputs. The “token” is almost incidental: a projection of state, not the source of truth.

That’s why S.I.N.G avoids the usual traps: state-bloat from duplicated records, latency mismatches across systems, hard-coded policy scattered in execution layers. Everything collapses into one flow:

Attest → Reduce → Execute.

Nothing else matters.

And when the state is clean (fully derived, fully attested), distribution becomes trivial. Not easy. Just inevitable.

That’s where TokenTable comes in.

At the very end.

Not deciding. Not interpreting. Just executing the resolved state against liquidity environments: on-chain, off-chain, hybrid, doesn’t matter.

It reads the vector.

It routes value.

Every transition provable.

No hidden logic. No discretionary paths. If someone asks “why did this payout happen?” you point to the attestations, the reducer rules, the resulting state. End of discussion.
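What "executing without deciding" could look like, as a Python sketch. None of this is TokenTable's real interface: `plan_payouts`, the shape of the `state` dict, and the pro rata rule are assumptions for illustration. The point is that the executor is a pure function of resolved state, and every instruction carries the trail needed to answer "why did this payout happen?"

```python
import hashlib
import json


def state_digest(state: dict) -> str:
    """Stable hash of the resolved state, so every payout can cite it."""
    canonical = json.dumps(state, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).hexdigest()


def plan_payouts(state: dict, amount: int):
    """Split `amount` (integer minor units) pro rata across eligible balances.

    Returns (instructions, remainder). Integer division rounds down, so
    leftover units are reported rather than silently assigned to anyone.
    """
    eligible = {acct: bal for acct, bal in state["balances"].items()
                if acct in state["eligible"] and bal > 0}
    total = sum(eligible.values())
    if total == 0:
        return [], amount

    digest = state_digest(state)
    instructions = []
    for acct in sorted(eligible):  # sorted for a deterministic order
        instructions.append({
            "account": acct,
            "amount": amount * eligible[acct] // total,
            "state_digest": digest,                            # which state
            "attestations": state["sources"].get(acct, []),    # which facts
        })
    paid = sum(i["amount"] for i in instructions)
    return instructions, amount - paid
```

No branch in this function reads anything outside `state` and `amount`. An account that holds a balance but isn't in the eligible set gets nothing, and the reason is visible in the state the digest points to.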

This is the part people underestimate.

They think tokenization is about wrapping assets. Issuing representations. Maybe plugging into DeFi rails for yield.

That’s surface area.

The real problem is agreeing on state under adversarial conditions (different actors, different incentives, different clocks) and doing it without introducing latency or trust assumptions that break under scale.

S.I.N.G doesn’t solve that with better UX or cleaner APIs.

It solves it by removing interpretation entirely.

Everything is either attested and valid under policy, or it doesn’t exist.

It’s harsh.

But it works.