@Fabric Foundation $ROBO #ROBO

On Saturday morning, sunlight had just slipped past the balcony blinds, casting lazy golden rays across the floor. It was a rare weekend, free from the relentless barrage of meeting invitations and the ever-growing pile of unread emails.

In the kitchen, I was watching the auxiliary robotic arm in the corner, which is still in its testing phase. I habitually call it "Little R." As the edge device of a home-assistance system, it was about to execute a seemingly simple instruction: pour me a cup of hot coffee.

Little R's camera locked precisely onto the handle of the coffee pot, and its gripper opened and closed to a preset angle, lifting the pot smoothly. Its movements were as fluid as those of a veteran arm on an industrial assembly line. Then it aimed the spout at the cup on the table and tilted, and the brown liquid poured out with a rich aroma.

Everything was perfect until the final half second.

Today, I had temporarily swapped in a new bone china cup. Its glaze is extremely smooth, and a few drops of water from last night's washing had not yet dried. As the coffee poured in, the cup's center of gravity shifted slightly; with the wet base sharply reducing friction against the marble countertop, the cup unexpectedly slid two centimeters to the left.

Little R's visual sensor registered the cup's displacement, but its control algorithm was still executing the second half of its 'pour liquid' routine. For that instant, its brain froze: should it stop pouring immediately, or chase the moving cup?

After a hesitation lasting a fraction of a second, coffee splashed mercilessly onto the countertop and dripped over the edge onto the floor.

I picked up a cloth to clean up and couldn't help smiling. The spilled coffee didn't ruin my morning; instead, it gave me an intuitive view of a fundamental problem that has troubled the tech community for decades: Moravec's paradox. That paradox is precisely the chasm the Robo project (an embodied intelligence and general-purpose robotics platform) is trying to bridge.

In the digital world, AI can solve complex calculus problems in seconds, write polished poetry, and even defeat the world's best Go players. In the physical world, however, getting a robot to sense a sliding cup and instantly adjust its 'muscles' the way a human would remains a daunting challenge.

This spilled coffee marks exactly the point the Robo project must break through to move from 'concept' to 'greatness'. Starting from this small everyday incident, let's take a close look at the Robo project's technical logic, its architectural reconstruction, and the future landscape it envisions.

1. Breaking the Deadlock: From 'Code Logic' to 'Physical Intuition'

Traditional robots (such as the robotic arms in factories) are classic 'rote memorization' performers. They rely on extremely precise coordinate systems and pre-written scripts. In an undisturbed environment they are gods, but place them in a human living space full of variables, even one as small as a cup with a slippery base, and they are instantly helpless.

The core vision of the Robo project is to endow machines with 'physical intuition'.

Rather than trying to enumerate every possible contingency (the real world's variables are effectively infinite), it uses a new embodied intelligence architecture that lets machines perceive, judge, and react in real time in dynamic environments. The aim is a leap from 'executing predetermined programs' to 'understanding physical common sense'.

To get there, the Robo project carried out a major overhaul of its underlying architecture.

2. Core Reconstruction: The 'Trinity' Architecture of the Robo Project

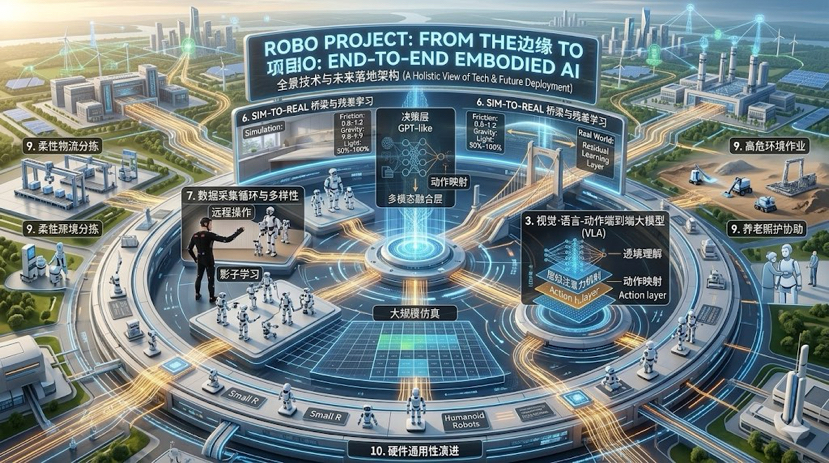

For a robot to save a cup of coffee that is about to spill, the Robo project builds three crucial modules into its tech stack: a perception layer, a decision layer, and an execution layer. Rather than a traditional layered hierarchy, they form a tightly coupled 'trinity'.

1. Multimodal Visual-Tactile Perception Network (VTPN)

Part of why Little R failed is that it only 'saw' the cup and never 'felt' it. The Robo project abandons reliance on vision alone.

• High-Frequency Visual Flow: Instead of processing a few static frames per second, the system uses event-camera-style dynamic capture, which records 'changes' in the field of view rather than the 'global background' and so greatly reduces computational overhead.

• High-Resolution Haptic Feedback: High-density flexible sensors are integrated into the end effector (such as the gripper). When the gripper touches the cup, it senses not only that it has 'grasped' it but also the 'friction coefficient', the 'temperature', and even 'slight deformation of the material'.

• Spatial Acoustic Perception: Changes in the sound of pouring liquid and the faint friction noise of a sliding object are fed into the large perception model, compensating for the blind spots of vision.
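The "record changes, not background" idea behind event-camera-style capture can be illustrated by emulating an event camera on ordinary grayscale frames. This is a minimal sketch, assuming frames arrive as nested lists of intensities; `frame_to_events`, the 0.2 log-intensity threshold, and the toy 3x4 frame are hypothetical choices, not Robo's actual perception pipeline.

```python
import math
from typing import List, Tuple

Event = Tuple[int, int, int]  # (x, y, polarity): +1 got brighter, -1 got darker


def frame_to_events(reference: List[List[float]], frame: List[List[float]],
                    threshold: float = 0.2, eps: float = 1e-3) -> List[Event]:
    """Emit an event only where log intensity changed by at least `threshold`.

    `reference` is updated in place at pixels that fired, mimicking how an
    event-camera pixel resets its reference level after each event.
    """
    events: List[Event] = []
    for y, (ref_row, new_row) in enumerate(zip(reference, frame)):
        for x, (ref_val, new_val) in enumerate(zip(ref_row, new_row)):
            delta = math.log(new_val + eps) - math.log(ref_val + eps)
            if abs(delta) >= threshold:
                events.append((x, y, 1 if delta > 0 else -1))
                ref_row[x] = new_val  # static pixels keep their old reference
    return events


background = [[100.0] * 4 for _ in range(3)]   # unchanging countertop
reference = [row[:] for row in background]
next_frame = [row[:] for row in background]
next_frame[1][2] = 30.0                          # dark cup edge slides into view
print(frame_to_events(reference, next_frame))   # [(2, 1, -1)]
```

Only the one pixel the cup moved into produces output; the eleven static background pixels cost nothing downstream, which is where the computational savings come from.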

2. Vision-Language-Action End-to-End Large Model (VLA)

This is the 'brain' of the Robo project and its most revolutionary part. Traditional robots translate visual information into hand-coded logic, and that code then drives the motors. The pipeline is long and loses information along the way.

The Robo project adopts an end-to-end architecture instead. You can think of it as ChatGPT in a steel shell, except that its output is actions rather than text.

• Context Understanding: When a user says 'pour me a cup of coffee, don't spill it', the brain translates it into a continuous physical expectation.

• Direct Mapping: The model takes raw camera pixels and haptic signals as input and, through the 'black box' computation of a neural network, directly outputs torque and velocity commands for each joint. This skips the explicit inverse-kinematics solve and greatly reduces latency.
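To make "pixels and touch in, joint torques out" concrete, here is a toy two-layer network with that input/output contract. It is a sketch under loud assumptions: `TinyVisuomotorPolicy` is hypothetical, its weights are random placeholders rather than learned, it emits torques only (velocity commands are omitted), and a real VLA model is vastly larger and also conditions on language.

```python
import math
import random
from typing import List


class TinyVisuomotorPolicy:
    """Toy end-to-end policy: raw pixels + haptic readings -> joint torques.

    Weights are random placeholders; a real model would learn them from data.
    Note that no inverse-kinematics step appears anywhere in the mapping.
    """

    def __init__(self, obs_dim: int, hidden: int, n_joints: int,
                 max_torque: float = 2.0, seed: int = 0) -> None:
        rng = random.Random(seed)
        self.obs_dim = obs_dim
        self.max_torque = max_torque
        self.w1 = [[rng.gauss(0.0, 0.1) for _ in range(obs_dim)] for _ in range(hidden)]
        self.w2 = [[rng.gauss(0.0, 0.1) for _ in range(hidden)] for _ in range(n_joints)]

    def act(self, pixels: List[float], haptics: List[float]) -> List[float]:
        obs = pixels + haptics  # fuse both modalities into one input vector
        if len(obs) != self.obs_dim:
            raise ValueError(f"expected {self.obs_dim} inputs, got {len(obs)}")
        hidden = [math.tanh(sum(w * o for w, o in zip(row, obs))) for row in self.w1]
        # tanh keeps each output in (-1, 1); scale it to the joint torque limit
        return [self.max_torque * math.tanh(sum(w * h for w, h in zip(row, hidden)))
                for row in self.w2]


policy = TinyVisuomotorPolicy(obs_dim=16 + 4, hidden=8, n_joints=6)
torques = policy.act(pixels=[0.5] * 16, haptics=[0.1, 0.0, 0.3, 0.2])
print(len(torques))  # 6
```

The point of the sketch is the shape of the computation: one forward pass replaces the perception-to-code-to-IK-to-motor chain, and the bounded output layer keeps every command within a torque limit.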

3. Compliant Actuation

A smart brain also needs agile limbs. When the cup slides, the arm should not stay rigidly suspended in mid-air; like a human arm, it should be 'compliant'.

• Dynamic Impedance Control: Robo's drive hardware no longer pursues absolute positional stiffness. Instead, it lets the robot yield and adapt when it meets unexpected resistance (or suddenly loses it).

• High-Frequency Fine-Tuning: The control loop refreshes at 500 Hz or higher, i.e. at least 50 cycles every 0.1 seconds. So within 0.1 seconds of the cup starting to slide, the actuator has already received dozens of correction commands from the brain, and the wrist instinctively follows the cup or quickly pulls back to cut off the flow of coffee.
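Impedance control is commonly written as a virtual spring-damper between where the hand is and where it should be: F = K(x_des - x) + D(v_des - v). The sketch below applies that textbook law on a single axis at 500 Hz while the target slides 2 cm over 0.1 s (the slide distance from the opening scene; the duration is assumed). `Impedance1D`, `track_sliding_cup`, and all numeric values (1 kg mass, K = 400 N/m, D = 40 N·s/m, 0.2 m/s cup speed) are illustrative assumptions, not Robo's parameters; a real arm uses full multi-joint dynamics.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Impedance1D:
    stiffness: float  # K in N/m: lower means a softer, more compliant arm
    damping: float    # D in N*s/m

    def force(self, x_des: float, x: float, v_des: float, v: float) -> float:
        # Spring-damper law: F = K (x_des - x) + D (v_des - v)
        return self.stiffness * (x_des - x) + self.damping * (v_des - v)


def track_sliding_cup(rate_hz: float = 500.0, duration_s: float = 0.1,
                      mass: float = 1.0, cup_speed: float = 0.2,
                      stiffness: float = 400.0, damping: float = 40.0
                      ) -> Tuple[int, float, float]:
    """Simulate a 1-D wrist chasing a cup that slides at `cup_speed` m/s."""
    dt = 1.0 / rate_hz
    ctrl = Impedance1D(stiffness, damping)
    x = v = 0.0
    cycles = round(duration_s * rate_hz)
    for step in range(cycles):
        x_des = cup_speed * step * dt            # cup position at this tick
        f = ctrl.force(x_des, x, cup_speed, v)   # one correction command
        v += f / mass * dt                       # semi-implicit Euler update
        x += v * dt
    return cycles, x, cup_speed * duration_s


cycles, wrist_x, cup_x = track_sliding_cup()
print(cycles)  # 50 correction commands in 0.1 s at 500 Hz
```

With these values the loop is critically damped (D = 2·sqrt(K·m)), so the wrist closes in on the moving cup without overshooting; lowering K makes the arm softer when something pushes back, which is the "compliance" the text describes.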

3. Bridging the Gap: Data Flywheel and Sim-to-Real Challenges

No matter how good the architecture, it is an empty shell without data. Large language models grew by consuming text from the internet, but the 'physical interaction data' the Robo project needs cannot be found online. You cannot expect a robot to learn to cut potatoes by watching a thousand hours of Li Ziqi's cooking videos.

The Robo project's moat is built on an extremely large-scale, efficient loop of data collection and transfer.

1. Teleoperation and Shadow Learning

In the project's early stages, the core data came from human 'demonstration and teaching'. Operators wearing motion-capture gear with haptic feedback remotely controlled robots to pour coffee, fold clothes, and tighten screws, much like playing a VR game.

Throughout this process, the robot recorded every small correction the human made when facing 'slipping', 'falling', or 'size mismatch'. These high-quality 'expert trajectories' are the foundational teaching material for the Robo large model.
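One common way to learn from expert trajectories is behavior cloning: supervised regression from what the robot observed to what the expert did. The sketch shrinks that to a single dimension, fitting a least-squares line from cup slip to the operator's wrist shift. The demonstration pairs and `fit_linear_policy` are made up for illustration; the article does not say how the Robo model is actually trained on its trajectories.

```python
from typing import List, Tuple


def fit_linear_policy(demos: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Simplest behavior cloning: least-squares fit of action = slope * obs + bias."""
    n = len(demos)
    mean_obs = sum(o for o, _ in demos) / n
    mean_act = sum(a for _, a in demos) / n
    var = sum((o - mean_obs) ** 2 for o, _ in demos)
    if var == 0:
        raise ValueError("demonstrations need at least two distinct observations")
    cov = sum((o - mean_obs) * (a - mean_act) for o, a in demos)
    slope = cov / var
    return slope, mean_act - slope * mean_obs


# Made-up (cup slip in cm, operator's wrist shift in cm) pairs from teleoperation
demos = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0), (4.0, 4.0)]
slope, bias = fit_linear_policy(demos)
print(slope, bias)  # 1.0 0.0
```

In these toy demos the operator simply moved the wrist as far as the cup slipped, and the fitted policy recovers exactly that rule; a large model does the same kind of fitting over far richer observations and actions.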

2. God's Eye View in Simulation Training

Collecting data in the physical world is too slow, so the Robo project built a highly realistic physics-engine simulation environment (Sim). In this virtual space, ten thousand virtual Little Rs pour coffee at the same time.

• Domain Randomization: The system aggressively varies the parameters of the virtual environment: cups turn into glass, tables tilt, gravity shifts slightly, lights dim. After millions of failures, the robot uses reinforcement learning to distill the most robust action policies.
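Domain randomization amounts to drawing a fresh set of physical parameters for every simulated episode, so that no single setting can be memorized. The sketch below samples the kinds of variables the text lists; the `SimParams` fields, their ranges, and the seed are assumptions chosen for illustration, and the reinforcement-learning loop that would consume these episodes is omitted.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    cup_material: str
    friction: float        # cup-to-table friction coefficient
    table_tilt_deg: float  # table tilt in degrees
    gravity: float         # m/s^2, perturbed around Earth's 9.81
    light_level: float     # 0 = dark, 1 = full brightness


def sample_sim_params(rng: random.Random) -> SimParams:
    """Draw one randomized virtual kitchen; the ranges are illustrative guesses."""
    return SimParams(
        cup_material=rng.choice(["ceramic", "bone_china", "glass"]),
        friction=rng.uniform(0.05, 0.8),
        table_tilt_deg=rng.uniform(-3.0, 3.0),
        gravity=9.81 * rng.uniform(0.95, 1.05),
        light_level=rng.uniform(0.2, 1.0),
    )


rng = random.Random(42)
episodes = [sample_sim_params(rng) for _ in range(10_000)]  # one per virtual Little R
```

Including very low friction values in the range is what would expose a simulated Little R to the slippery bone china cup long before it meets one in a real kitchen.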

3. The Thrilling Leap of Sim-to-Real

Carrying the skills honed in the virtual world into reality is the most striking part of the Robo project. Because real-world physics (air resistance, motor wear, unknown minute vibrations) can never be simulated with 100% fidelity, the system employs 'Residual Learning': the large model supplies a basic action framework, while a small model on the edge applies real-time corrections based on real-world feedback.
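A residual policy keeps the simulation-trained base frozen and learns only a small additive correction from real-world feedback. In this minimal sketch the "large model" is a made-up linear function `sim_base`, the "small model on the edge" is a two-parameter linear residual trained by least-mean-squares, and the constant 0.5 gap in `real_world` stands in for unmodeled effects such as motor wear; all of these names and numbers are hypothetical.

```python
from typing import Callable


class ResidualPolicy:
    """action = frozen_base(obs) + small learned residual(obs).

    Only the residual's two parameters are updated on the real robot.
    """

    def __init__(self, base: Callable[[float], float], lr: float = 0.1) -> None:
        self.base = base   # policy trained in simulation, never modified here
        self.w = 0.0       # residual slope
        self.b = 0.0       # residual offset
        self.lr = lr

    def act(self, obs: float) -> float:
        return self.base(obs) + self.w * obs + self.b

    def update(self, obs: float, target: float) -> None:
        """One least-mean-squares step toward the action that worked in reality."""
        err = target - self.act(obs)
        self.w += self.lr * err * obs
        self.b += self.lr * err


def sim_base(obs: float) -> float:
    return 2.0 * obs  # hypothetical sim-trained mapping


def real_world(obs: float) -> float:
    return 2.0 * obs + 0.5  # reality needs a constant extra 0.5 (e.g. motor wear)


policy = ResidualPolicy(sim_base)
for _ in range(500):
    for obs in (0.0, 0.5, 1.0):
        policy.update(obs, real_world(obs))
```

Because the residual only has to learn the sim-to-real gap (here, an offset of 0.5) rather than the whole skill, it can stay small enough to update on edge hardware while the expensive base model stays untouched.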

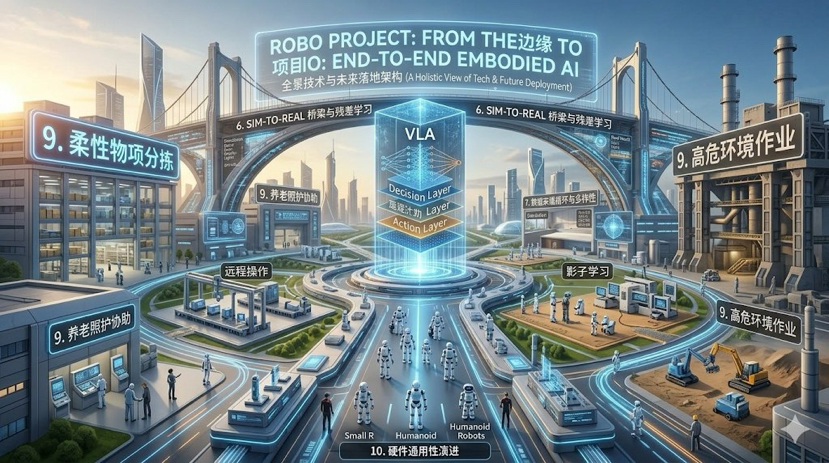

4. The Commercial Landscape of the Robo Project: Beyond the Kitchen

A cup of coffee may have sparked this reflection, but the Robo project's ambitions go far beyond being a high-end housekeeper. Once 'general physical interaction capabilities' are unlocked, a trillion-scale market will be reshaped.

• Flexible Manufacturing and Intelligent Logistics: Faced with packages and parts of assorted shapes and sizes, traditional robotic arms need expensive custom fixtures. Robo-driven robots can plan grasps autonomously after 'a single glance', breaking the sorting bottleneck for non-standard items.

• High-Risk Environment Operations: In nuclear decontamination, deep-sea exploration, or post-disaster rubble rescue, Robo robots with dynamic adaptability will become an extension of human hands.

• Rehabilitation and Elderly Care: This may be the warmest application of all. When handling fragile human bodies, machines need extreme 'softness' and 'empathy algorithms'. Supporting an elderly person or helping them turn over in bed are zero-tolerance actions, and they are the ultimate test of Robo's multimodal perception and compliant control.

5. In Conclusion: When Magic Becomes Everyday

After cleaning up the spill, I washed the bone china cup, dried its base, and set it aside in favor of a rough-surfaced mug. This time, Little R completed the task. The aroma of steaming coffee filled the Saturday morning once again.

Technological progress rarely arrives with a bang; it quietly blends into everyday life. When we are no longer surprised that our phones understand us or that cars keep themselves centered in their lanes on the highway, that is the mark of technology's victory.

For the Robo project, the true criterion of success is not a backflip at a launch event or a complex precision assembly in the lab. It is an ordinary morning when the coffee cup on the table unexpectedly slides again, and the robotic arm in the kitchen reaches out to steady it as naturally as an old friend would, then calmly pours a cup of fragrant coffee.

The whole process would be uneventful, something we simply take for granted.