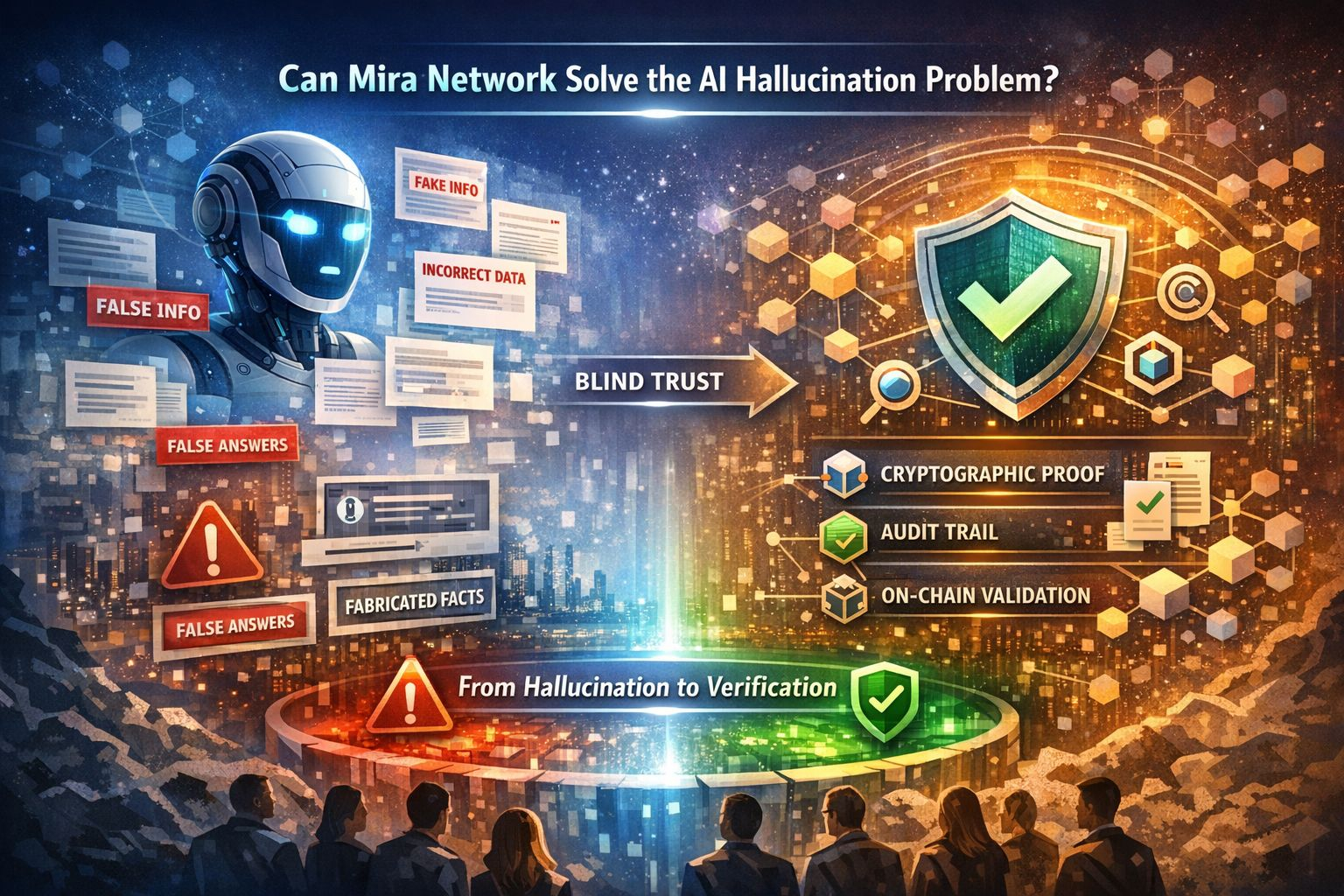

Can Mira Network Solve the AI Hallucination Problem? 🤖🔍

Artificial Intelligence is evolving at lightning speed ⚡. From content creation to autonomous agents, AI is transforming industries worldwide. But there’s one major challenge that continues to raise concerns — AI hallucinations.

AI hallucination happens when a model generates information that sounds confident and accurate but is actually false or misleading. 😬 This issue affects chatbots, research tools, trading bots, and even enterprise AI systems. As adoption grows, the cost of misinformation grows with it.

So the big question is: Can Mira Network solve this problem?

Understanding the Root of AI Hallucinations 🧠

Most generative AI models are probabilistic systems. They predict the most likely next word or action from patterns in their training data, not from true understanding. When that data is incomplete, biased, or ambiguous, a model may “fill in the gaps” with incorrect information.
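To make the "most likely next word" idea concrete, here is a minimal sketch of sampling from a next-word distribution. The prompt, the candidate words, and the probabilities are all invented for illustration; they do not come from any real model.

```python
import random

# Hypothetical next-word probabilities for the prompt
# "The capital of Australia is" (numbers invented for illustration).
NEXT_TOKEN_PROBS = {
    "Canberra": 0.55,   # correct
    "Sydney": 0.35,     # plausible but wrong
    "Melbourne": 0.10,  # plausible but wrong
}

def sample_next_token(probs, rng):
    """Draw one word in proportion to its probability."""
    tokens = list(probs)
    weights = [probs[token] for token in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

def wrong_answer_rate(probs, correct, trials, seed=0):
    """Estimate how often sampling yields a fluent but incorrect word."""
    rng = random.Random(seed)
    wrong = sum(sample_next_token(probs, rng) != correct for _ in range(trials))
    return wrong / trials

if __name__ == "__main__":
    rate = wrong_answer_rate(NEXT_TOKEN_PROBS, "Canberra", trials=10_000)
    print(f"Share of confident but wrong completions: {rate:.2%}")
```

In this toy setup the wrong words carry 45% of the probability mass, so roughly that share of samples reads as fluent and confident while being false. Nothing in the sampling step checks facts.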

The result?

Fabricated facts 📉

Incorrect citations 📚

Confident but false outputs ❌

Risky automated decisions ⚠️

In high-stakes environments like finance, healthcare, or autonomous robotics, hallucinations aren’t just annoying — they’re dangerous.

Mira Network’s Verification Approach 🔐

Mira Network introduces a powerful concept: verification before trust.

Instead of blindly accepting AI outputs, Mira aims to create a system where responses can be validated through cryptographic proofs and consensus mechanisms. This adds a verification layer between AI generation and final execution.

Here’s how this changes the game:

✅ AI outputs can be cross-checked

✅ Results can be validated before deployment

✅ Autonomous agents can operate with accountability

✅ Systems become more transparent

Rather than replacing AI models, Mira acts as a trust layer — ensuring that intelligence is not just generated, but verified.
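The idea of a verification layer between generation and execution can be sketched as a simple vote among independent checkers. This is an illustrative toy, not Mira Network's actual protocol: the `make_verifier` and `verify_claim` functions, the two-thirds threshold, and the fact sets are all assumptions made for the example.

```python
from collections import Counter

def make_verifier(known_facts):
    """Build a toy verifier that approves only claims in its own fact set."""
    def verifier(claim):
        return "valid" if claim in known_facts else "invalid"
    return verifier

def verify_claim(claim, verifiers, threshold=2 / 3):
    """Accept a claim only if at least `threshold` of verifiers call it valid."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = Counter(verifier(claim) for verifier in verifiers)
    valid_share = votes["valid"] / len(verifiers)
    return {"claim": claim, "votes": dict(votes), "accepted": valid_share >= threshold}

TRUE_CLAIM = "Canberra is the capital of Australia"
FALSE_CLAIM = "Sydney is the capital of Australia"

# Hypothetical verifiers; the third one shares the generating model's error.
VERIFIERS = [
    make_verifier({TRUE_CLAIM}),
    make_verifier({TRUE_CLAIM}),
    make_verifier({TRUE_CLAIM, FALSE_CLAIM}),
]

if __name__ == "__main__":
    for claim in (TRUE_CLAIM, FALSE_CLAIM):
        print(verify_claim(claim, VERIFIERS))
```

Here the false claim gets only one approving vote out of three, so it is rejected before anything acts on it. The protection depends on the verifiers being independent: if most of them share the same blind spot, the vote passes the error through.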

From Blind Trust to Verifiable Intelligence 🌐

Today’s AI ecosystem largely runs on trust. Users assume the output is correct. Businesses hope automation works properly. But hope isn’t a security model.

Mira Network shifts the paradigm toward provable intelligence. By integrating blockchain-style validation and decentralized verification processes, it reduces reliance on single-model outputs.

Think of it like moving from:

“Trust me, I’m right.” 🤷‍♂️

to

“Here’s proof that this output was verified.” 🧾✨

That distinction could define the next phase of AI evolution.

Is It a Complete Solution? 🤔

No system can eliminate hallucinations entirely. AI models will always carry statistical uncertainty. However, what Mira Network offers is mitigation — reducing risk through layered validation.

The real power lies in:

Multi-model verification

Transparent audit trails

Decentralized checking mechanisms

On-chain proof that an output was verified

If successfully adopted at scale, this could dramatically improve reliability in AI-driven systems.
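A transparent audit trail can be approximated with a hash chain, where every record commits to the one before it. The sketch below is a simplified stand-in for an on-chain log, under the assumption that each entry stores an output, a verification result, and the previous entry's hash. The field names and helper functions are hypothetical and do not describe Mira Network's data format.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry's predecessor

def record_hash(record):
    """Hash a record deterministically by serializing it with sorted keys."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()

def append_entry(log, output, accepted):
    """Append a verification record that commits to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"output": output, "accepted": accepted, "prev_hash": prev_hash}
    log.append({**body, "hash": record_hash(body)})

def is_intact(log):
    """Return True if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: entry[key] for key in ("output", "accepted", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != record_hash(body):
            return False
        prev_hash = entry["hash"]
    return True

if __name__ == "__main__":
    log = []
    append_entry(log, "Canberra is the capital of Australia", accepted=True)
    append_entry(log, "Sydney is the capital of Australia", accepted=False)
    print("Intact before tampering:", is_intact(log))
    log[1]["accepted"] = True  # someone rewrites a rejected result
    print("Intact after tampering:", is_intact(log))
```

A chain like this shows that a verification record has not been changed after the fact. It does not show that the verified output is true; that still depends on the quality of the checks that produced the record.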

The Bigger Picture 🚀

As AI moves toward autonomous agents, machine-to-machine finance, and self-operating systems, verification becomes non-negotiable. Trust alone won’t support the future digital economy.

Mira Network is positioning itself not as another AI model, but as the infrastructure for trustworthy AI.

Can it completely solve AI hallucinations?

Maybe not 100%.

But can it significantly reduce them and redefine how we trust machine intelligence?

That’s where the real potential lies. 🌟

#Mira $MIRA