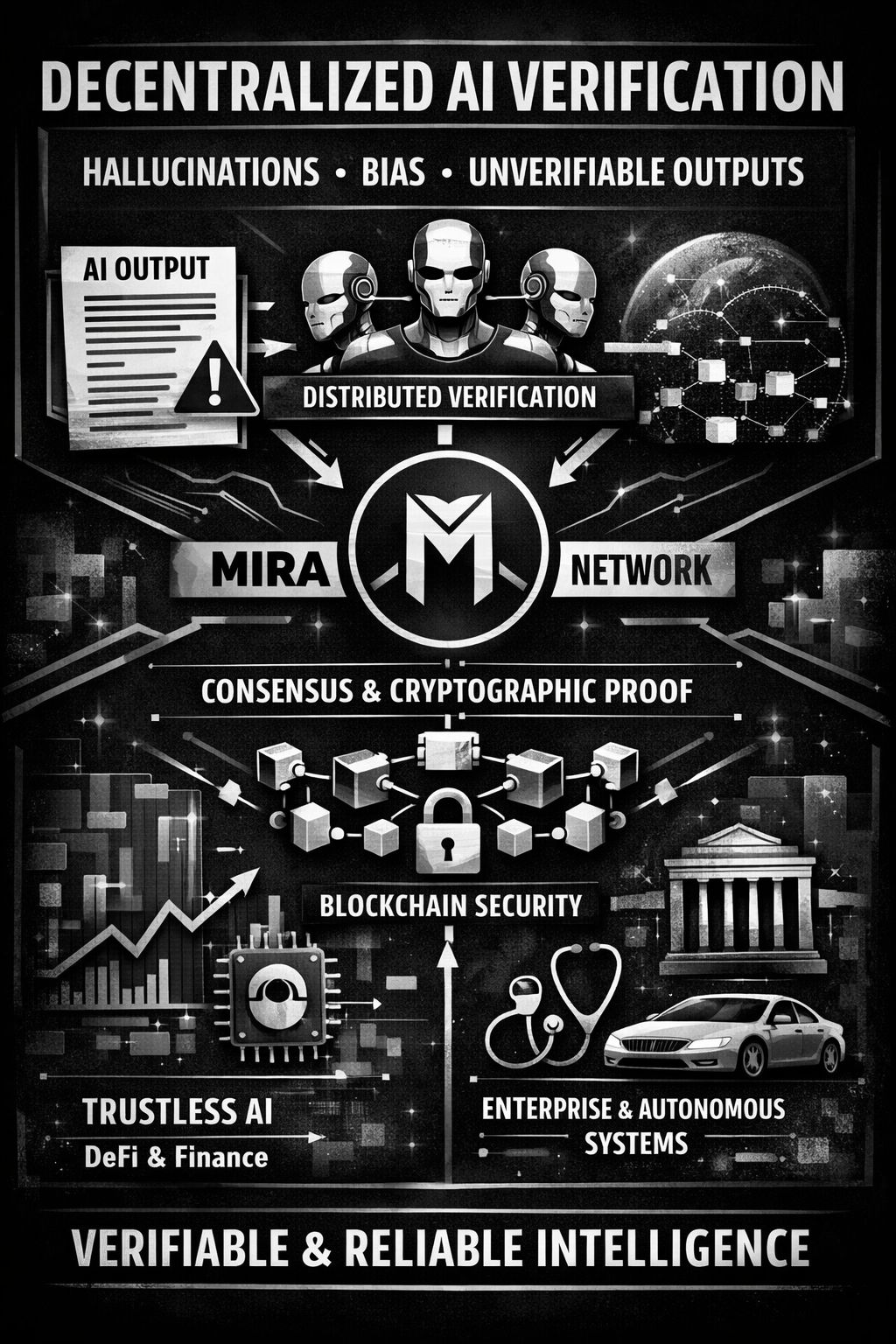

Artificial intelligence is powerful, but reliability remains its biggest bottleneck. Hallucinations, bias, and unverifiable outputs make many AI systems hard to trust in mission-critical applications like finance, healthcare, governance, and autonomous infrastructure. That’s why I’ve been diving deeper into Mira Network, a decentralized verification protocol that rethinks how AI outputs are trusted.

Instead of blindly accepting a single model’s response, Mira breaks complex AI-generated content into smaller, verifiable claims. These claims are distributed across independent AI models within a decentralized network, where consensus and economic incentives determine validity. The result? AI outputs transformed into cryptographically verified information secured by blockchain consensus.
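To make the idea concrete, here is a minimal Python sketch of claim-level consensus. It is not Mira's actual implementation or API: the function names, the sentence-based claim splitter, the two-thirds threshold, and the SHA-256 digest (a stand-in for whatever would be committed on-chain) are all assumptions for illustration, and economic incentives are not modeled at all.

```python
import hashlib
import json
from typing import Callable, Dict, List

# Hypothetical: a verifier is any independent model wrapped as claim -> bool.
Verifier = Callable[[str], bool]


def split_into_claims(text: str) -> List[str]:
    """Naive stand-in for claim extraction: one claim per sentence."""
    return [s.strip() for s in text.split(".") if s.strip()]


def verify_claim(claim: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> bool:
    """Accept a claim when at least `threshold` of the verifiers approve it."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    votes = [v(claim) for v in verifiers]
    return sum(votes) / len(votes) >= threshold


def verify_output(text: str, verifiers: List[Verifier], threshold: float = 2 / 3) -> Dict:
    """Verify every claim in `text` and fingerprint the resulting verdicts."""
    results = {c: verify_claim(c, verifiers, threshold) for c in split_into_claims(text)}
    # Assumed stand-in for an on-chain commitment: a hash of the verdict record.
    record = json.dumps(results, sort_keys=True).encode("utf-8")
    return {
        "claims": results,
        "all_valid": all(results.values()),
        "digest": hashlib.sha256(record).hexdigest(),
    }


# Toy verifiers: two check against a small fact set, one approves everything.
known_facts = {"Paris is the capital of France"}
strict = lambda claim: claim in known_facts
lenient = lambda claim: True

report = verify_output(
    "Paris is the capital of France. The moon is made of cheese.",
    [strict, strict, lenient],
)
print(report["claims"])
```

In this toy run the first claim is approved by all three verifiers, while the second gets only one of three votes and is rejected, so a single over-agreeable model cannot push a claim through on its own.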

This approach shifts trust away from centralized providers and toward a transparent, incentive-aligned ecosystem. It’s not just about better AI responses; it’s about building a trustless verification layer for the entire AI economy. Imagine DeFi protocols executing AI-driven strategies only after the underlying claims have passed network consensus, or enterprises integrating AI systems that are economically incentivized to be accurate.

By aligning verification with blockchain mechanics, @mira_network is positioning itself at the intersection of AI and Web3 infrastructure. If reliable AI is the missing layer for autonomous systems, then $MIRA could represent a key primitive in the future decentralized intelligence stack.

The future of AI isn’t just smarter; it’s verifiable. #Mira