I don’t really see systems like Sign Protocol as neat pieces of logic running in isolation. They feel more like busy roads at rush hour. Early on, everything looks fine. Cars move, signals work, and people assume others will follow the same rules. But the real character of a system only shows up when things get crowded, when urgency rises, and when a few participants start testing how far they can push.

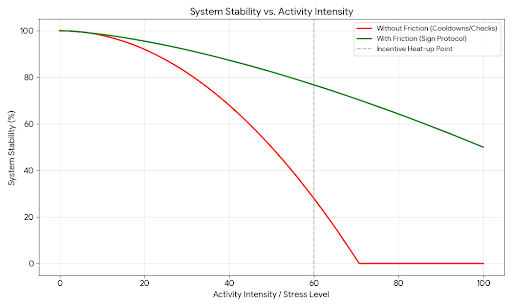

That’s where rules like cooldowns, buyer checks, and country blocks start to matter. Not because everything is broken without them, but because over time people have learned that behavior changes fast once incentives heat up. Calm conditions hide a lot. Stress reveals almost everything.

Cooldowns are the simplest example, but also one of the most misunderstood. On paper, they just force a pause between actions. It can feel unnecessary, even annoying. If someone wants to act again, why slow them down? But that question assumes everyone is operating at a human pace, making decisions one step at a time.

That’s rarely what happens once there’s something to gain. I’ve seen how quickly activity shifts from manual to automated. Scripts take over, repetition becomes instant, and one participant can suddenly act faster than the rest of the system can even notice. Without any delay, a small edge turns into a loop, and that loop compounds.

It’s a bit like letting one driver circle a roundabout endlessly at high speed while others are trying to enter. The structure still exists, but it stops working as intended. Cooldowns don’t fix the behavior, but they slow it enough that it can’t spiral out of control immediately. They give the system a chance to catch up with what’s happening.

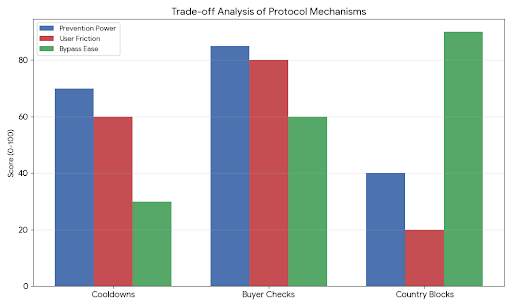

Of course, that comes at a cost. The system doesn’t know who is acting in good faith and who isn’t. Everyone gets slowed down the same way. Under normal conditions, that friction feels unnecessary. Under stress, it becomes a buffer that keeps things from tipping too quickly. There’s always a balance here, and it’s never perfect. Too much delay, and real users get frustrated. Too little, and the rule barely matters.
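To make the mechanics concrete, here is a minimal sketch of a per-actor cooldown. It is not Sign Protocol’s implementation. The `Cooldown` class, its `try_act` method, and the injected `now` timestamp are all hypothetical, chosen so the behavior is easy to test. Note that the same `seconds` value applies to every actor, which is exactly the “everyone gets slowed down the same way” trade-off described above.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Cooldown:
    """Hypothetical per-actor cooldown: an actor must wait `seconds`
    after an accepted action before the next one is accepted."""

    seconds: float
    # Timestamp of each actor's last *accepted* action.
    _last: dict[str, float] = field(default_factory=dict)

    def try_act(self, actor: str, now: float) -> bool:
        last = self._last.get(actor)
        if last is not None and now - last < self.seconds:
            # Still cooling down. A rejected attempt does not reset the timer.
            return False
        self._last[actor] = now
        return True


if __name__ == "__main__":
    bot = Cooldown(seconds=60)
    # A script retrying every 0.1 s for 10 s gets through exactly once.
    accepted = sum(bot.try_act("bot", i * 0.1) for i in range(100))
    print(accepted)  # 1
```

The single `seconds` parameter is the balance knob: raise it and scripted loops collapse to a trickle, but a real user who legitimately wants to act twice in a minute hits the same wall.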

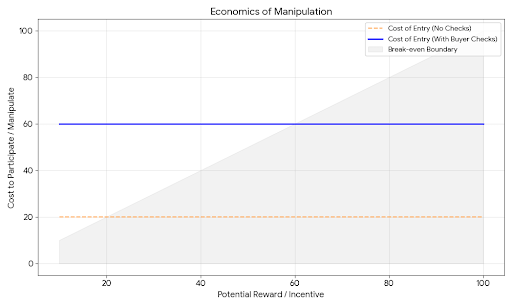

Buyer checks come from a different place. They’re less about speed and more about who gets to participate. In a perfect world, every participant would be independent and honest, and you wouldn’t need to question that. In reality, identity becomes flexible the moment there’s an advantage to bending it.

I’ve watched systems where one actor quietly becomes many. Multiple accounts, layered identities, intermediaries standing in between. What looks like a broad group of participants can actually be tightly coordinated behind the scenes. That’s where buyer checks try to step in, adding a layer of scrutiny before someone can participate.

But identity in these environments is tricky. It’s not fixed, and it’s not always reliable. Any method used to verify someone can eventually be copied, bypassed, or outsourced. If verification is simple, ways around it spread quickly. If it’s strict, it starts excluding people who aren’t doing anything wrong. And if it relies on outside data, it inherits the delays and blind spots of those sources.

So buyer checks don’t really stop manipulation. What they do is make it more expensive and more complicated. That shift matters. Systems rarely break because there are no rules. They break when ignoring the rules becomes too easy compared to the reward.
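One way to picture “more expensive, not impossible” is a check that maps wallets to verified identities and applies a purchase cap per identity rather than per wallet. This is a minimal sketch under assumed rules, not how Sign Protocol verifies buyers. `BuyerCheck`, `register`, `try_buy`, and the cap itself are hypothetical names for illustration.

```python
from __future__ import annotations


class BuyerCheck:
    """Hypothetical buyer check: a wallet must be linked to a verified
    identity, and a cap applies across all wallets of that identity."""

    def __init__(self, cap_per_identity: int) -> None:
        self.cap = cap_per_identity
        self.identity_of: dict[str, str] = {}  # wallet -> verified identity
        self.bought: dict[str, int] = {}  # identity -> units bought so far

    def register(self, wallet: str, identity: str) -> None:
        # Stands in for whatever verification links a wallet to a person.
        self.identity_of[wallet] = identity

    def try_buy(self, wallet: str, units: int) -> bool:
        identity = self.identity_of.get(wallet)
        if identity is None:
            return False  # unverified wallets cannot participate
        used = self.bought.get(identity, 0)
        if used + units > self.cap:
            return False  # extra wallets of the same identity share one cap
        self.bought[identity] = used + units
        return True


if __name__ == "__main__":
    check = BuyerCheck(cap_per_identity=100)
    check.register("w1", "id-A")
    check.register("w2", "id-A")
    print(check.try_buy("w1", 80))  # True
    print(check.try_buy("w2", 30))  # False: id-A would reach 110
```

The weakness is visible in the structure: the cap only holds if every wallet is linked to the right identity. An actor who gets a second wallet verified as a separate identity, say `id-B`, simply gets a second cap. The check doesn’t stop that, but it forces the actor to pay for another verification each time.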

Country blocks add another layer, one that feels more rigid at first glance. Restrict access based on location, and you draw a clear boundary. But in practice, location is one of the easiest things to disguise. Tools that reroute traffic or mask where it comes from, such as VPNs and proxies, are widely available and constantly used.

So these blocks don’t act like solid walls. They’re more like barriers that slow down casual access while doing much less to stop determined workarounds. Still, they serve a purpose. Not every risk comes from highly motivated actors. Some of it comes from exposure, from being openly accessible in places where that creates legal or regulatory pressure.
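The core of a country block can be very small, which is part of why it is so easy to route around. The sketch below assumes the request arrives with a country code already attached (for example, derived from an IP lookup). The blocklist uses `XA` and `XB`, which are user-assigned ISO 3166-1 alpha-2 codes used here purely as placeholders, and the `fail_closed` flag is a hypothetical policy choice for requests whose location is unknown.

```python
from __future__ import annotations

from typing import Optional

# Placeholder codes from the ISO 3166-1 user-assigned range; not a real policy.
BLOCKED_COUNTRIES = frozenset({"XA", "XB"})


def is_allowed(reported_country: Optional[str], fail_closed: bool = True) -> bool:
    """Hypothetical country gate. It can only judge the signal it is given:
    if a VPN or proxy changes the reported country, the check changes too."""
    if reported_country is None:
        # Unknown location: block (fail closed) or allow (fail open).
        return not fail_closed
    return reported_country.strip().upper() not in BLOCKED_COUNTRIES


if __name__ == "__main__":
    print(is_allowed("XA"))  # False: blocked location
    print(is_allowed("DE"))  # True: same user, rerouted, now looks allowed
```

Nothing in the function can tell a genuine location from a rerouted one. That is why it works as a filter on casual, direct access rather than as a wall.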

By putting country restrictions in place, the protocol signals an attempt to limit that exposure. It’s less about perfect enforcement and more about reducing obvious points of conflict. But under pressure, these restrictions can shift activity rather than stop it. People find alternate paths, and those paths often lead back to the same system indirectly.

It reminds me of closing a major street in a city. Traffic doesn’t disappear. It spills into side roads, sometimes creating more chaos than before. The system still functions, but in a more fragmented and less predictable way.

When you look at all three mechanisms together, what they really introduce is friction. Not the kind that stops movement entirely, but the kind that shapes how movement happens. They slow repetition, filter participation, and limit exposure. Each one targets a different weak point that tends to show up when activity intensifies.

But friction always comes with trade-offs. It can stabilize things, but it can also push people away or encourage workarounds. There’s no setting where everything works smoothly all the time. The goal is usually more modest: to avoid sudden breakdowns and give the system room to adjust when conditions change.

One thing that often gets overlooked is how these rules interact with behavior outside the system. Activity doesn’t stay contained. If one path becomes harder, people look for another. A strict check here might create a service elsewhere that specializes in passing that check. A delay in one place might shift volume into another system that has fewer restrictions.

These indirect effects can be harder to predict than the rules themselves. I’ve seen situations where the second-order responses matter more than the original design. People adapt quickly, especially when there’s money involved. The system is always in a kind of quiet negotiation with its users.

There’s also the issue of timing. No rule is enforced instantly. There’s always a gap between action and reaction. Cooldowns help by limiting how fast something can repeat, but they don’t stop the first move. Buyer checks depend on information that may already be outdated. Country blocks rely on signals that can be altered in real time.

So the system is never fully in control. It’s always reacting, always a step behind. The aim isn’t perfection. It’s to avoid falling too far behind when things start to move quickly.

What I find more honest about this approach is that it doesn’t assume ideal behavior. It assumes that under the right conditions, people will push limits, automate aggressively, and look for any edge they can find. That’s not a flaw in the design. It’s a reflection of how these environments actually work.

Still, none of these mechanisms can guarantee safety. They can’t remove incentives, and they can’t fully prevent coordination. What they can do is slow things down, raise costs, and make it harder for problems to scale instantly.