I kept coming back to this thought while looking at SIGN. We often frame the problem as identity or verification, but that’s not really where things break. Most systems are actually pretty good at verifying people. The real issue shows up after that.

They don’t share that trust.

At first, I assumed SIGN was just another attempt to streamline onboarding. Maybe reduce friction, maybe make compliance easier. That’s usually where these conversations end. But the more I sat with it, the more it felt like the problem is less about efficiency and more about isolation.

Every system operates like its own closed environment. You go through the process, you get approved, and that approval stays locked there. The moment you step into a different system, it’s as if none of it happened.

It’s not logical, but it’s become normal.

And when you scale that across platforms, regions, or institutions, it starts to create real limitations. Not just inconvenience, but barriers to participation. Especially in places trying to build faster, connect markets, and bring more people into digital systems.

That’s where SIGN starts to shift the perspective.

It doesn’t try to make one system dominant. It doesn’t force a universal standard. Instead, it introduces the idea that verification can exist as something reusable, something that doesn’t lose its meaning when it moves.

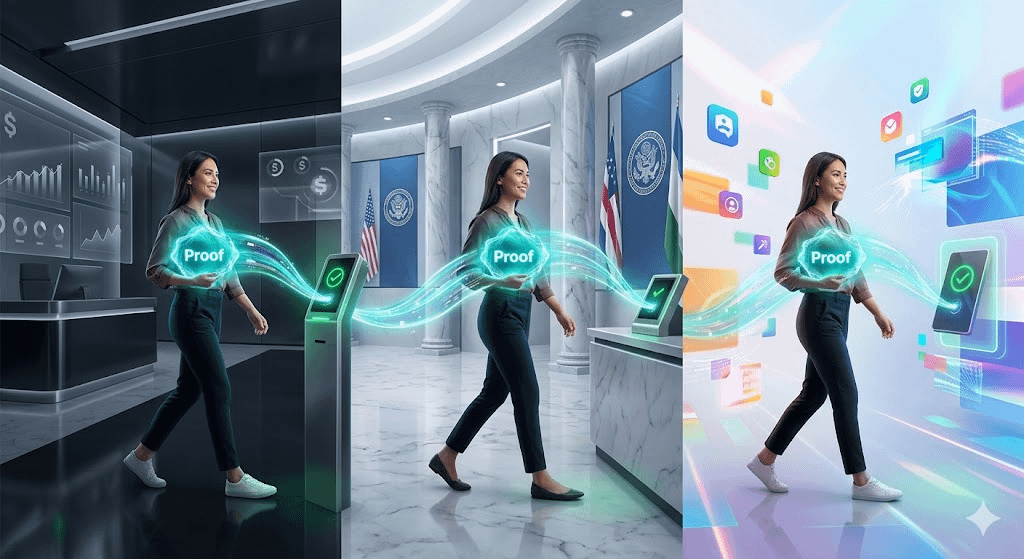

So rather than sending your data everywhere, you carry a form of proof. Something that says, “this has already been checked,” without exposing everything again.

It sounds small, but it changes the interaction between systems.

Because now, the question isn’t “who verified you?”

It becomes “do I trust the verification you’re presenting?”

That shift matters.

It opens the door for systems to stay independent while still recognizing each other’s outcomes. Not perfectly, but enough to reduce repetition. Enough to make movement between systems feel less like starting over.
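To make that shift a little more concrete, here is a minimal sketch of a system that checks a presented proof instead of collecting the underlying data again. Everything in it is hypothetical: the `Attestation` shape, the issuer names like `"bank-a"`, and the claim fields are illustrative assumptions, not SIGN’s actual schema or API. An HMAC from Python’s standard library stands in for the public-key signature a real attestation system would use. With HMAC the verifier has to hold the issuer’s key, which real designs avoid.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Attestation:
    issuer: str  # who performed the original check (hypothetical name)
    claim: dict  # only the outcome, e.g. {"kyc_passed": True}, never the raw documents
    tag: str     # issuer's authentication tag over issuer + claim


def _digest(key: bytes, issuer: str, claim: dict) -> str:
    # Canonical JSON so issuer and verifier authenticate identical bytes.
    # HMAC is a stand-in for a public-key signature (assumption for a stdlib-only sketch).
    payload = json.dumps({"issuer": issuer, "claim": claim}, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def issue(issuer: str, key: bytes, claim: dict) -> Attestation:
    """The issuing system checks the person once and hands back a portable proof."""
    return Attestation(issuer, claim, _digest(key, issuer, claim))


class Verifier:
    """One independent system with its own list of issuers it is willing to trust."""

    def __init__(self, trusted_keys: dict[str, bytes]):
        self.trusted_keys = trusted_keys

    def accepts(self, att: Attestation) -> bool:
        key = self.trusted_keys.get(att.issuer)
        if key is None:
            # The proof may be perfectly valid; this system just doesn't trust its source.
            return False
        expected = _digest(key, att.issuer, att.claim)
        return hmac.compare_digest(expected, att.tag)


# Usage: one proof, two independent systems, two different answers.
bank_key = b"bank-a-demo-key"  # hypothetical key, demo only
proof = issue("bank-a", bank_key, {"kyc_passed": True})

exchange = Verifier({"bank-a": bank_key})  # trusts bank-a's checks
strict_registry = Verifier({})             # trusts no external issuers yet

print(exchange.accepts(proof))         # True
print(strict_registry.accepts(proof))  # False
```

The point of the sketch is the `Verifier` class: each system keeps its own trust list, so the same proof can be accepted in one place and ignored in another without either system giving up its independence.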

But this is also where things get complicated.

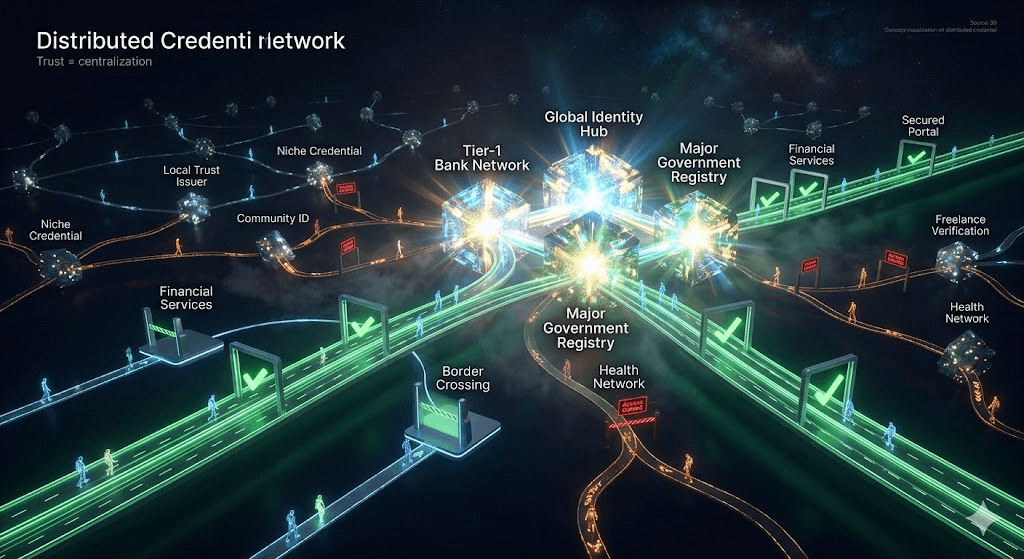

Trust doesn’t just appear because a system allows it. It has to be earned, and more importantly, agreed upon. Different institutions will have different thresholds. Some will accept external proofs easily. Others won’t.

And that creates a layered reality. Some credentials move freely. Others don’t.

There’s also the risk that certain issuers become more influential than others. If a few entities are consistently trusted across systems, they naturally become central points. Not by design, but by usage.

So even in a model that aims to distribute trust, patterns of concentration can still emerge.

Then there’s the practical side.

Getting systems to integrate something like this isn’t trivial. It requires alignment, not just technically but operationally. Processes need to adapt. Standards need to be understood. And people, both users and institutions, need to feel comfortable relying on something they didn’t build themselves.

That takes time.

Especially in regions where growth is fast but structures are still evolving, like parts of the Middle East. The opportunity is there, but so is the complexity. Different systems, different expectations, different levels of readiness.

So I don’t see this as a quick shift.

But I do see the direction.

If verification can become something that persists instead of resets, it changes how systems connect. It reduces friction in a way that’s not immediately visible, but becomes obvious over time.

Still, I’m cautious.

Because ideas like this depend less on design and more on adoption. If enough systems start to rely on shared proofs, it works. If they don’t, it stays another layer that people ignore.

So for now, I’m watching how it gets used, not how it’s described.

Because in the end, trust between systems isn’t a feature. It’s a behavior.

And behavior is always harder to change.

$SIGN #SignDigitalSovereignInfra