The first time I worked with Sign Network, the split between on-chain data and storage on IPFS and Arweave didn’t feel like an architectural choice. It felt like something I had to constantly negotiate with. You define an attestation, anchor its hash on-chain, push the full payload off-chain, and everything looks clean until you actually try to rely on it across multiple reads.

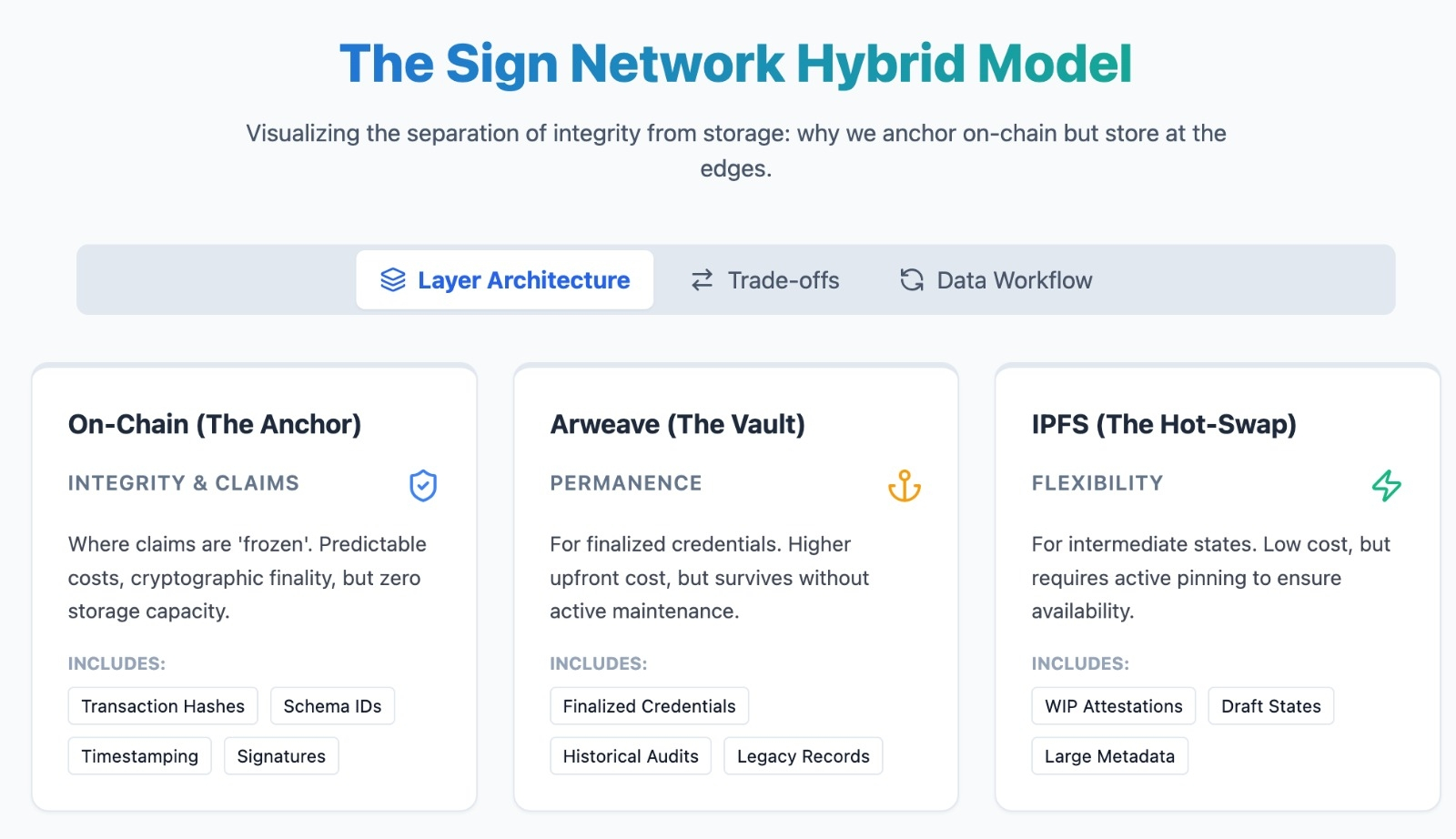

At some point it becomes obvious that the chain is not where your data lives. It is where your claims are frozen.
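To make "frozen claims" concrete, here is a minimal sketch of what anchoring amounts to. It assumes SHA-256 over a sorted-key JSON serialization. That is my stand-in for illustration, not Sign's actual encoding or hashing scheme, and the payload fields and the names `canonical_bytes`, `anchor_hash`, and `matches_anchor` are hypothetical.

```python
import hashlib
import json


def canonical_bytes(payload: dict) -> bytes:
    """Serialize with sorted keys and fixed separators so equal payloads hash equally."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def anchor_hash(payload: dict) -> str:
    """Digest that would be recorded on-chain (SHA-256 is an assumption here)."""
    return hashlib.sha256(canonical_bytes(payload)).hexdigest()


def matches_anchor(payload: dict, anchored: str) -> bool:
    """True if the off-chain payload still hashes to the anchored digest."""
    return anchor_hash(payload) == anchored


# Hypothetical attestation payload; the full body lives off-chain.
payload = {"subject": "user-123", "claim": "kyc_passed", "schema_version": 1}
anchored = anchor_hash(payload)  # the only part the chain keeps

# Same content with keys in a different order, and a tampered copy.
reordered = {"schema_version": 1, "claim": "kyc_passed", "subject": "user-123"}
tampered = {**payload, "claim": "kyc_failed"}

print(matches_anchor(reordered, anchored))
print(matches_anchor(tampered, anchored))
```

The chain only ever sees `anchored`. Everything needed to make sense of the claim stays in the payload, wherever that ends up being stored.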

That distinction sounds subtle, but it changes how you build. In one flow, we were issuing attestations for intermediate states, not just final outcomes. Roughly five to six events per user action instead of one. On-chain, the cost stayed predictable because only hashes were recorded. Off-chain, the payload volume grew quickly on IPFS. Retrieval still worked, but our behavior shifted. We stopped asking “can we afford to store this” and started asking “can we afford to interpret this later.”

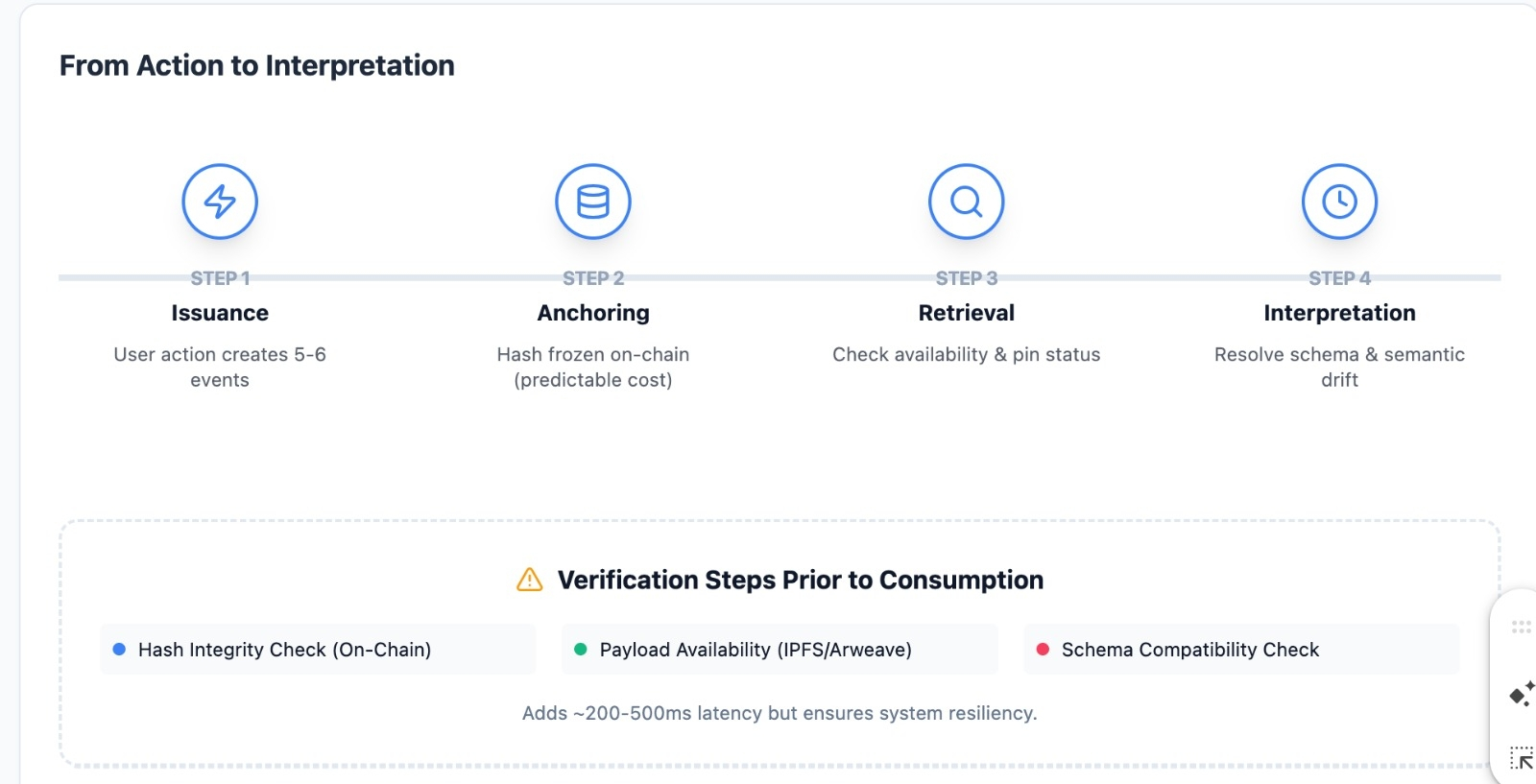

The friction shows up when you need to read the data back in a meaningful way. The hash on-chain tells you something exists and hasn’t been tampered with. It does not tell you whether the underlying data is still accessible, pinned, or even consistently structured across environments. In one case, two attestations that were meant to be equivalent pointed to IPFS objects whose structure had diverged slightly because of schema evolution. Each payload still matched its own anchored hash. Same hash logic at issuance time, different interpretation at read time.

Nothing broke technically. But the system stopped feeling deterministic.

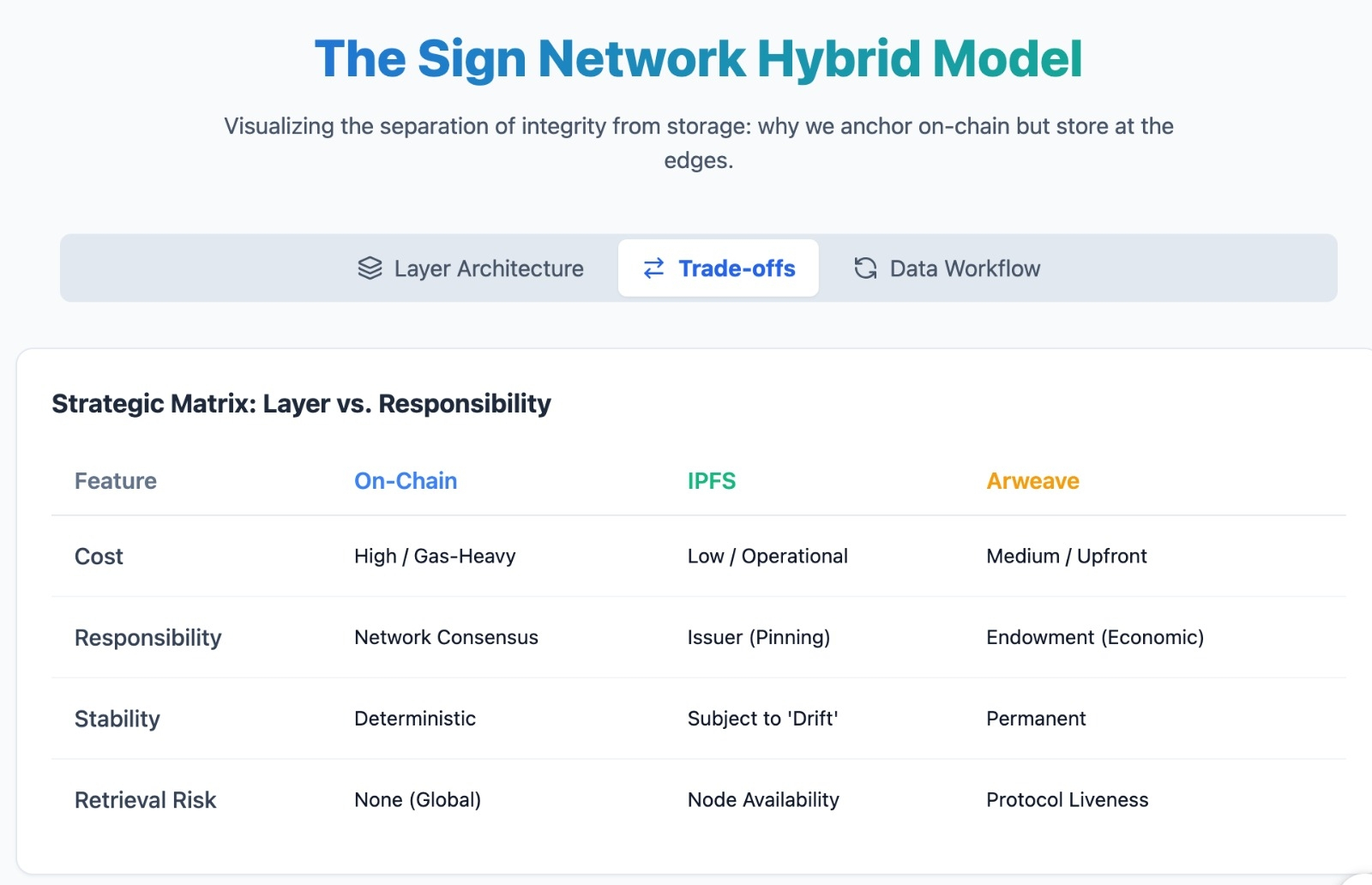

That is where combining on-chain anchoring with IPFS and Arweave starts to make more sense. You are not just saving gas. You are distributing responsibility. The chain guarantees integrity. IPFS offers flexibility with lower cost but requires active pinning strategies. Arweave leans toward permanence, but introduces its own economic assumptions about long-term storage. Each layer absorbs a different type of risk.

In practice, I noticed teams quietly choosing between IPFS and Arweave not based on ideology, but based on how much they trusted future retrieval. For frequently updated attestations, IPFS made sense because we could re-pin and update references as needed. For records that should not move, like finalized credentials, Arweave felt safer even if it cost more upfront. The difference was not theoretical. It showed up when one dataset disappeared temporarily because its IPFS content had been left unpinned, while another remained accessible because it had been pushed to Arweave.

So the hybrid model is less about optimization and more about failure containment.

There is a tradeoff hiding in the middle though. Once you move data off-chain, you introduce a silent dependency on retrieval layers that are not backed by the same consensus guarantees. You gain scalability, but you lose a certain kind of finality. The chain can prove something existed. It cannot force the network to serve it reliably.

That changes how you design workflows. We ended up adding a verification step before consuming attestations, not just checking hashes but also confirming availability and schema compatibility. It added latency. Maybe a few hundred milliseconds per read in some cases. Small, but noticeable when scaled across many interactions. The system became more resilient, but less immediate. I still find myself unsure about where the balance should sit.
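A rough sketch of that pre-consumption check, under stated assumptions: the payload is JSON with a `schema_version` field, the anchor is a SHA-256 of the raw bytes, and `fetch` is whatever retrieval function you plug in (an IPFS gateway call, an Arweave lookup, a cache). None of these names come from Sign's SDK. They are hypothetical, and a plain dictionary stands in for the storage layer.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical: the schema versions this reader knows how to interpret.
SUPPORTED_SCHEMA_VERSIONS = {1, 2}


@dataclass
class CheckResult:
    available: bool
    intact: bool
    schema_ok: bool

    @property
    def usable(self) -> bool:
        return self.available and self.intact and self.schema_ok


def check_attestation(
    anchored_hash: str,
    ref: str,
    fetch: Callable[[str], Optional[bytes]],
) -> CheckResult:
    """Check availability, then integrity, then schema compatibility."""
    raw = fetch(ref)
    if raw is None:
        # The anchor may be valid, but nothing is serving the payload.
        return CheckResult(False, False, False)
    if hashlib.sha256(raw).hexdigest() != anchored_hash:
        return CheckResult(True, False, False)
    try:
        version = json.loads(raw).get("schema_version")
    except (ValueError, AttributeError):
        # Not valid JSON, or not a JSON object.
        version = None
    return CheckResult(True, True, version in SUPPORTED_SCHEMA_VERSIONS)


# In-memory stand-in for an off-chain store.
store = {}


def put(ref: str, payload: dict) -> str:
    """Store the payload bytes and return the digest that would be anchored."""
    raw = json.dumps(payload, sort_keys=True).encode("utf-8")
    store[ref] = raw
    return hashlib.sha256(raw).hexdigest()


h_ok = put("ref-a", {"schema_version": 1, "claim": "ok"})
h_new = put("ref-b", {"schema_version": 3, "claim": "ok"})

print(check_attestation(h_ok, "ref-a", store.get))
print(check_attestation(h_new, "ref-b", store.get))
print(check_attestation(h_ok, "ref-missing", store.get))
```

The three flags are kept separate on purpose. "Unavailable", "tampered", and "intact but unreadable by this client" call for different responses, and collapsing them into one boolean hides exactly the drift described above.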

There is also a subtle shift in how trust is perceived. When everything is fully on-chain, users assume completeness. When part of the data lives off-chain, they are trusting a combination of cryptographic guarantees and storage assumptions. That blend is harder to explain and even harder to standardize across applications.

Try this as a quick test. Take the same attestation, anchor it on-chain, store the payload on IPFS in one case and Arweave in another. Then simulate a retrieval after a few weeks without actively maintaining the IPFS pin. See which one you trust more when you need it under pressure.

Or another one. Issue a series of attestations with slightly evolving schemas, all anchored correctly. Come back later and try to aggregate them into a single coherent dataset. Notice how much of the effort shifts from verification to interpretation.
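Here is a small sketch of what that interpretation work tends to look like. The two schema versions and their field names are invented for illustration. The point is only that every payload can pass its hash check and still need a per-version adapter before it fits into one dataset.

```python
from typing import Any, Dict, List, Tuple


def normalize(payload: Dict[str, Any]) -> Dict[str, Any]:
    """Map a versioned payload onto one common shape."""
    version = payload.get("schema_version")
    if version == 1:
        # Hypothetical v1: subject stored as "user", flat "score" field.
        return {"subject": payload["user"], "score": float(payload["score"])}
    if version == 2:
        # Hypothetical v2: "user" renamed to "subject", score nested under "result".
        return {
            "subject": payload["subject"],
            "score": float(payload["result"]["score"]),
        }
    raise ValueError(f"unknown schema_version: {version!r}")


def aggregate(
    payloads: List[Dict[str, Any]],
) -> Tuple[List[Dict[str, Any]], List[Tuple[Dict[str, Any], str]]]:
    """Return normalized rows plus the payloads that could not be interpreted."""
    rows = []
    skipped = []
    for p in payloads:
        try:
            rows.append(normalize(p))
        except (KeyError, ValueError, TypeError) as exc:
            skipped.append((p, str(exc)))
    return rows, skipped


payloads = [
    {"schema_version": 1, "user": "u1", "score": "7"},
    {"schema_version": 2, "subject": "u2", "result": {"score": 9}},
    {"schema_version": 3, "subject": "u3"},  # verifiable, but unknown to this reader
]

rows, skipped = aggregate(payloads)
print(rows)
print(len(skipped))
```

Keeping the skipped payloads alongside the reason they failed, rather than dropping them silently, at least makes the interpretation gap visible.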

Even the economics start to feel different once you spend time inside this setup. The token layer in Sign eventually comes into view, not as a trading asset but as a coordination tool. Someone has to pay for anchoring, for storage decisions, for maintaining access over time. The moment you realize that, the token stops being optional. It becomes part of how these tradeoffs are sustained. But it does not resolve the underlying tension.

What stays with me is that this architecture pushes complexity out of the chain and into the edges. You save on execution costs and gain flexibility, but you inherit a new kind of uncertainty around meaning and availability. The system remains verifiable, yet slightly harder to rely on in a uniform way.

I am not convinced that is a drawback. It might just be where systems naturally end up once scale forces them to separate integrity from storage.

Still, I keep wondering whether we are trading one kind of determinism for another. Not breaking things, just shifting where they can quietly drift.

@SignOfficial #SignDigitalSovereignInfra $SIGN